Is "Data Driven" Just Another Buzzword?

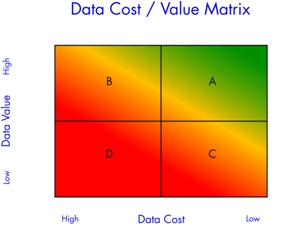

"Data driven" seems to mean anything the speaker wants it to mean. There is no standardized definition of "data driven," nor is there a standard measurement for how "data driven" an organization is. That seems rather odd to me. If we're going to strive to become "data driven," shouldn't we know where we're headed? Shouldn't we have a common operational definition of the term? As a starting point I offer the Data Cost / Data Value Matrix.

The Data Cost / Data Value Matrix is a simple two-by-two matrix that helps us talk about what it means to be "data driven." On the horizontal axis we have the cost of data, going from high to low. On the vertical axis we have the value of data, going from low to high. The matrix gives us four quadrants. The "data driven" organization lives in Quadrant A, in the upper-right corner. Data are inexpensive and of high value. In my experience very few businesses meet this definition. Some have pockets of excellence, but most fall short of realizing their full potential.

While there may be an infinite number of ways companies fall short of being "data driven," in my experience they fall into three broad categories, represented by the other three quadrants on the matrix. I’ve seen all of these companies, and maybe you have too.

Companies in Quadrant B gain high value from their data, but pay way too much for the knowledge. From what I've seen, many Six Sigma companies fall into this bucket. For the most part these people understand how to maximize the value of the data. They know how to use the full array of statistical tools. They apply proven, disciplined techniques of project management and statistical problem solving to get to the bottom of chronic, entrenched problems. Payback from these programs can be huge.

However, many Six Sigma Belts spend an inordinate amount of time scrubbing and massaging data in order to get something useful. I like to call this effort the Six Sigma Data Shuffle.

Oddly, this pattern is seen as normal in the Six Sigma world. Suppose somebody in your organization has to copy files from one folder to another, reorganize the data in some new format (for example, convert from .csv to .xls), and then scrub the data so that all descriptive fields match (for example, turn "McConnell, F." and "Frank McConnell" into "F. McConnell"). Suppose all of that happens before the data are copied into the final software program, where they still have to be grouped into appropriate samples and the specifications entered by hand before any analysis can begin. If so, you are paying too high a price for clean data.
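As a rough illustration of how the name-matching part of that shuffle might be automated rather than done by hand, here is a minimal Python sketch. The function name `normalize_name` and the choice of "F. McConnell" style as the target format are assumptions made for this example; real descriptive fields would likely need more rules than this.

```python
def normalize_name(raw: str) -> str:
    """Return a name in 'F. Last' form (the target format is an assumption)."""
    raw = raw.strip()
    if not raw:
        return ""
    if "," in raw:
        # "Last, First" form, e.g. "McConnell, F."
        last, first = [part.strip() for part in raw.split(",", 1)]
    else:
        # "First Last" form: treat the final token as the surname
        parts = raw.split()
        first, last = " ".join(parts[:-1]), parts[-1]
    if not first:
        # Only a surname was given, so there is no initial to add
        return last
    return f"{first[0].upper()}. {last}"


# Hypothetical sample values based on the example in the text
names = ["McConnell, F.", "Frank McConnell", "F. McConnell"]
cleaned = {normalize_name(n) for n in names}
print(cleaned)
```

All three spellings collapse to a single value, which is the point: a rule written once costs far less than a person fixing the same field by hand every time.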

Folks in Quadrant C seem to pursue data for data’s sake. They build elaborate data collection systems whose main effect is to protect them from their customers. These data provide little or no additional value to the business. An example of this is the quality manager who once told me, "If the customer calls with a complaint, I’ll print out a blast of several thousand data points and email it to him. He gets real quiet when he discovers I have the data." The fact that these data are rarely used to make improvements to the process (and thereby eliminate the customer’s complaint) doesn’t seem to concern this manager. Data are cheap, but the only value comes from being able to "snow" the customer. Often these data are cheap because they are what CAN be collected, not what SHOULD be collected.

The third camp (Quadrant D) has the worst of both worlds: they pay a high price for data, but have almost nothing to show for their efforts. This is typical of mature organizations with a long tradition of inspecting quality into a product. Here it is 2009, and these businesses have enormous file cabinets full of handwritten data sheets. Data are written on an inspection sheet and then filed away. Getting to the data is a laborious process requiring the patience of Job and the dogged determination of Wile E. Coyote.
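For readers who think in code, the four quadrants described above can be summarized in a few lines of Python. This is only a sketch of the matrix as a lookup: the function name `quadrant` is made up for this example, and the matrix itself does not say how to decide whether cost or value counts as "high," so those two judgments are simply passed in as true/false.

```python
def quadrant(cost_is_high: bool, value_is_high: bool) -> str:
    """Map a cost/value judgment to a quadrant letter of the matrix.

    How "high" is decided is left to the caller (an assumption of this sketch).
    """
    if value_is_high:
        # High value: A if the data are cheap, B if they cost too much
        return "B" if cost_is_high else "A"
    # Low value: C if the data are cheap, D if they are also expensive
    return "D" if cost_is_high else "C"


# The file-cabinet organization: expensive data, little to show for it
print(quadrant(cost_is_high=True, value_is_high=False))
```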

In a future post I’ll share a quick test that I've developed so you can determine just how "data driven" your company is. Or you can download a white paper on the "Eight Aspects of the Data Driven Business - Exploring your Gap to Entitlement" here.

In the meantime, tell me what you think of this definition for "data driven." What's missing? What is on target? And while you're at it, what’s your best guess? Which quadrant does your business live in?

Copyright Hertzler Systems Inc., 2009. Used by permission.