How to Choose a Machine Vision Camera


IP camera (left) and industrial camera (right).

A line scan camera can check the quality of goods at speeds up to 60 mph. Source: Basler

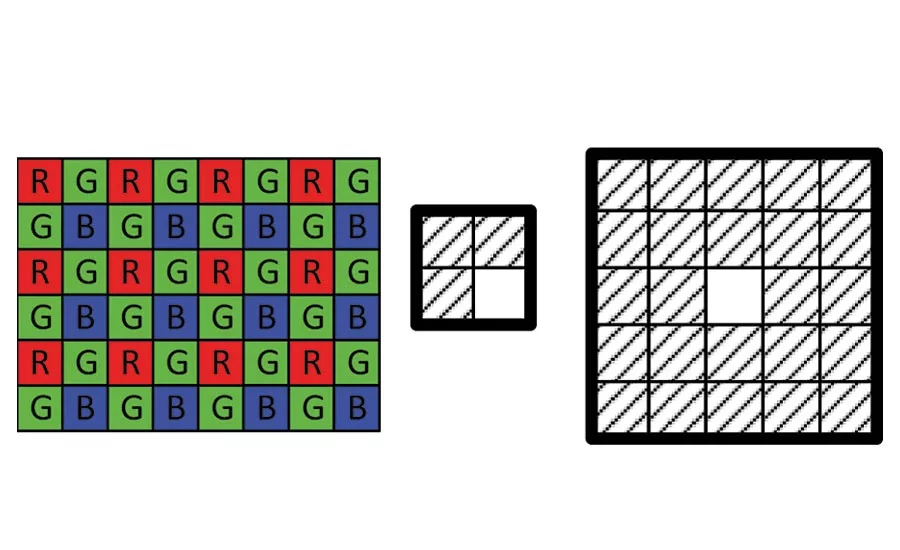

The Bayer matrix (left), 2×2 (middle) and 5×5 (right) debayering algorithms. Source: Basler

Typical examples of rolling shutter distortions. Source: Basler

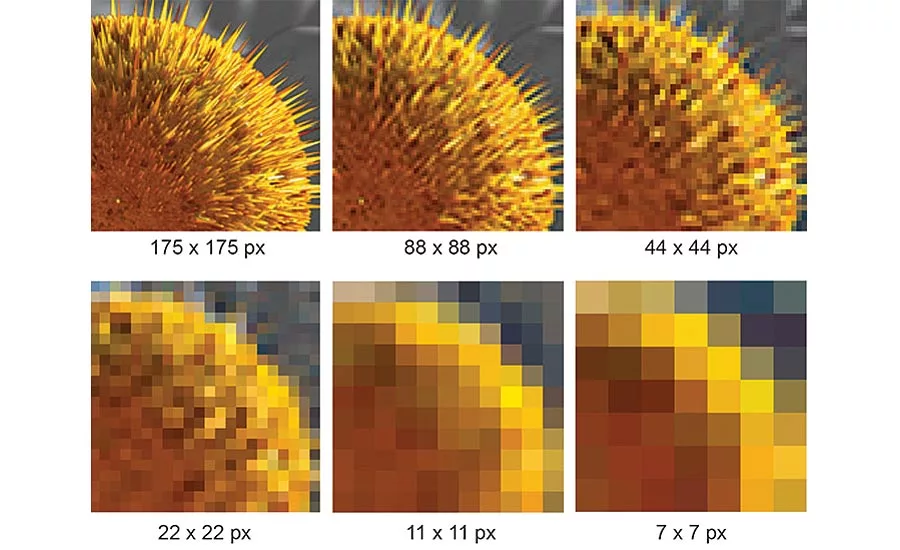

Examples of high and low resolutions. Source: Basler

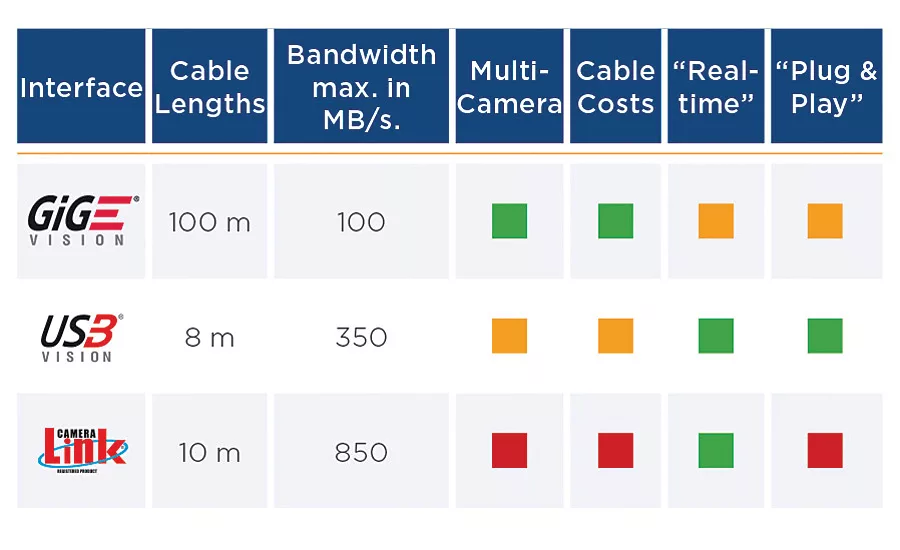

Interface comparison by cable length, bandwidth and other factors. Source: Basler

When you set up a machine vision system, your choice of camera depends on the objects you want to inspect, the required speed, the lighting and temperature conditions, and the available space. System cost matters as well.

Machine vision vs. Surveillance

For most applications in factory automation or the medical field, you will need a machine vision camera. A machine vision camera captures image data and sends it uncompressed to the PC. This is why its pictures look less “pretty” than the ones from cell phones. Consumer cameras compress and smooth the image data, which looks good but doesn’t provide the quality needed for flaw detection and code reading.

Network cameras, or IP (Internet Protocol) cameras, record video and compress it. Their advantages are robustness and resistance to vibration and temperature spikes. They also tolerate poor lighting conditions and direct sunlight. IP cameras are mainly used in surveillance and in Intelligent Traffic Systems (ITS) applications such as road tolling and red light detection.

Area scan vs. Line scan

If you have a high-speed application with a conveyor belt, you will need a line scan camera. These cameras use a single line of pixels (sometimes two or three lines) to capture the image data. They can check the printing quality of newspapers at speeds up to 60 mph, quickly sort letters and packages in logistics, and inspect food for damage. They are also used to control the quality of plastic films, steel, textiles, wafers and electronics.
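To get a feel for what 60 mph means for a line scan camera, the sketch below converts the material speed into a required line rate. The 0.1 mm resolution along the direction of travel is an assumed value chosen for illustration, and `required_line_rate_hz` is a hypothetical helper name, not part of any camera SDK.

```python
MPH_TO_M_PER_S = 0.44704  # exact: 1 mile = 1609.344 m, 1 hour = 3600 s


def required_line_rate_hz(speed_mph: float, line_pitch_mm: float) -> float:
    """Lines per second needed so that each captured line covers
    `line_pitch_mm` of material along the direction of travel."""
    speed_mm_per_s = speed_mph * MPH_TO_M_PER_S * 1000.0
    return speed_mm_per_s / line_pitch_mm


if __name__ == "__main__":
    # Assumed example: 60 mph material speed, 0.1 mm per captured line.
    rate = required_line_rate_hz(speed_mph=60.0, line_pitch_mm=0.1)
    print(f"{rate:,.0f} lines per second")
```

Under these assumptions the camera would need to deliver roughly 268,000 lines per second; a coarser line pitch lowers the requirement proportionally.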

If you need in-depth inspection, area scan cameras are the right choice. They have a rectangular sensor consisting of many lines of pixels and capture the whole image at once. Area scan cameras are used in quality assurance systems, code reading, and pick-and-place processes in robotics. They are also integrated into microscopes, dental scanners, and other medical devices.

Monochrome vs. Color

Monochrome cameras are usually the better choice if the application does not require color analysis. Because they don’t need a color filter, they are more sensitive than color cameras and deliver more detailed images.

Most color machine vision cameras use a Bayer matrix to capture color data. Each pixel sits under a color filter: half of the filters are green, a quarter are red and a quarter are blue. The debayering algorithm uses the information from adjoining pixels to determine the full color of each pixel. A 2×2 debayering reads the information from three adjoining pixels, and a 5×5 debayering reads it from 24 adjoining pixels. If you need a color camera, a larger debayering window generally gives better results.
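The proportions and neighbor counts above can be checked with a few lines of Python. This is only a sketch of the filter layout, not a debayering implementation; the RGGB tile order and the helper names are assumptions made for illustration.

```python
from collections import Counter

# One 2x2 tile of an RGGB Bayer pattern. The tile order is an assumption;
# sensors also exist with GRBG, GBRG or BGGR layouts.
BAYER_TILE = (("R", "G"),
              ("G", "B"))


def filter_color(row: int, col: int) -> str:
    """Color of the filter sitting on top of pixel (row, col)."""
    return BAYER_TILE[row % 2][col % 2]


def color_shares(height: int, width: int) -> dict:
    """Fraction of pixels carrying each filter color."""
    counts = Counter(filter_color(r, c)
                     for r in range(height) for c in range(width))
    total = height * width
    return {color: counts[color] / total for color in "RGB"}


def neighbors_used(window: int) -> int:
    """Adjoining pixels in a window x window neighborhood,
    not counting the pixel being reconstructed."""
    return window * window - 1


if __name__ == "__main__":
    print(color_shares(4, 4))
    print(neighbors_used(2), neighbors_used(5))
```

For any sensor with even dimensions the shares come out as one quarter red, one half green and one quarter blue, and the window sizes 2 and 5 give the 3 and 24 adjoining pixels mentioned in the text.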

CMOS vs. CCD

In CMOS cameras, the electronics that convert light into electronic signals are integrated into the surface of the sensor. This makes data transfer especially quick. CMOS sensors are less expensive, don’t suffer from blooming or smear, and have a higher dynamic range. This allows them, for example, to capture both a brightly lit license plate and the shadowed person in the car in the same image.

Because CCD sensors do not have conversion electronics on the sensor’s surface, they can capture more light and so have lower noise, a higher fill factor, and higher color fidelity. These properties make CCD cameras a good choice for low-light and low-speed applications like astronomy.

Global vs. Rolling Shutter

If you want to avoid distortions at high speeds and are not too concerned about price, a global shutter is the best choice. With a global shutter, the entire sensor surface is exposed to light at once. This makes it well suited to high-speed applications such as traffic and transportation or logistics.

The rolling shutter reads the image line by line. The captured lines are then recomposed into one image. If the object is moving fast or the lighting conditions are bad, the image gets distorted. However, by adjusting the exposure time and using a flash, you can minimize the distortion. Rolling shutter is less expensive and is available only on CMOS-based cameras.
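The distortion caused by line-by-line readout can be illustrated with a deliberately simplified model: a vertical bar moves sideways at constant speed, and each sensor row records the bar where it is at the moment that row is captured. Exposure time is ignored, and the function names, line time and speed are all assumptions made for this sketch.

```python
from typing import List


def bar_position_per_row(rows: int, speed_px_per_s: float,
                         line_time_s: float,
                         global_shutter: bool) -> List[float]:
    """Horizontal position (in pixels) at which each sensor row sees a
    vertical bar that starts at x = 0 and moves right at constant speed."""
    positions = []
    for row in range(rows):
        # Global shutter: every row is captured at t = 0.
        # Rolling shutter: row k is captured k line times later.
        t = 0.0 if global_shutter else row * line_time_s
        positions.append(speed_px_per_s * t)
    return positions


def skew_px(positions: List[float]) -> float:
    """Horizontal offset between the first and the last row."""
    return positions[-1] - positions[0]


if __name__ == "__main__":
    # Assumed values: 1,000 rows, 10 microseconds per line, 10,000 px/s.
    rolling = bar_position_per_row(1000, 10_000.0, 10e-6, global_shutter=False)
    snapshot = bar_position_per_row(1000, 10_000.0, 10e-6, global_shutter=True)
    print(f"rolling shutter skew: {skew_px(rolling):.1f} px")
    print(f"global shutter skew:  {skew_px(snapshot):.1f} px")
```

With these assumed values the bottom of the bar lands about 100 px to the right of its top in the rolling shutter model, so a straight bar appears slanted, while the global shutter model shows no offset.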

Frame Rate

Frame rate is the number of images that the sensor can capture and transmit per second. The human brain detects approximately 14 to 16 images per second; the frame rate of a movie is usually 24 fps. Fast-moving applications such as newspaper inspection require the camera to capture each image within milliseconds. At the other end of the scale, microscopy applications need only low frame rates, comparable to those of the human eye.

Resolution

A simple formula determines which resolution your application requires: Resolution = (Object size / Detail size)².

Let’s say you want to determine the eye color of a person who is two meters tall, and the smallest detail you need to resolve is 1 mm.

(2 m ÷ 1 mm)² = 2,000² = 4,000,000 pixels = 4 MP

A sensor with 2048 × 2048 px (about 4.2 MP) would meet this requirement.
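The resolution formula can be sketched as a small helper. It follows the article's formula directly, which implies a square field of view and one pixel per smallest detail; the function name and the rounding up to whole pixels are assumptions of this sketch.

```python
import math


def required_pixels(object_size_mm: float, detail_size_mm: float) -> int:
    """Resolution = (object size / detail size)^2, assuming a square
    field of view and one pixel per smallest detail."""
    pixels_per_side = math.ceil(object_size_mm / detail_size_mm)
    return pixels_per_side ** 2


if __name__ == "__main__":
    # The article's example: a 2 m tall person, 1 mm details.
    needed = required_pixels(object_size_mm=2000.0, detail_size_mm=1.0)
    print(f"{needed / 1e6:.1f} MP required")
    print("2048 x 2048 sensor is sufficient:", 2048 * 2048 >= needed)
```

In practice it is common to plan for more than one pixel per detail, which would raise the result accordingly.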

Interface

If your camera is far from the PC or if you need multiple cameras in one system, you’ll need GigE. It allows cable lengths up to 100 m and makes multi-camera integration easy. USB 3.0, by comparison, gives you more than three times the bandwidth (350 MB/s versus 100 MB/s), is plug-and-play, and carries power and data over one cable, but it only works with cable lengths up to 8 m. If you need the highest possible bandwidth, up to 850 MB/s, then Camera Link is the best choice. Camera Link requires a frame grabber, so the system costs are higher.
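As a rough aid for this decision, the sketch below estimates the uncompressed data rate of a camera and filters the three interfaces by the bandwidth and cable figures quoted above. The article gives no cable length for Camera Link, so that value is left open rather than guessed. The names `Interface`, `data_rate_mb_s` and `candidates` are hypothetical, and real throughput depends on protocol overhead and hardware.

```python
from typing import List, NamedTuple, Optional


class Interface(NamedTuple):
    name: str
    bandwidth_mb_s: float         # figure quoted in the article
    max_cable_m: Optional[float]  # None: the article gives no figure


INTERFACES = [
    Interface("GigE", 100.0, 100.0),
    Interface("USB 3.0", 350.0, 8.0),
    Interface("Camera Link", 850.0, None),
]


def data_rate_mb_s(width_px: int, height_px: int, fps: float,
                   bytes_per_pixel: int = 1) -> float:
    """Uncompressed data rate in MB/s (1 MB = 10**6 bytes)."""
    return width_px * height_px * bytes_per_pixel * fps / 1e6


def candidates(rate_mb_s: float, cable_m: float) -> List[str]:
    """Interfaces whose quoted bandwidth covers the data rate and whose
    quoted cable length, where one is given, covers the distance."""
    result = []
    for iface in INTERFACES:
        if iface.bandwidth_mb_s < rate_mb_s:
            continue
        if iface.max_cable_m is None:
            result.append(iface.name + " (cable length not quoted, check the datasheet)")
        elif iface.max_cable_m >= cable_m:
            result.append(iface.name)
    return result


if __name__ == "__main__":
    # Assumed example: 2048 x 2048 px, 8-bit monochrome, 20 fps, 50 m cable.
    rate = data_rate_mb_s(2048, 2048, fps=20)
    print(f"{rate:.1f} MB/s ->", candidates(rate, cable_m=50.0))
```

In this assumed example the data rate is about 84 MB/s, so GigE qualifies on both bandwidth and distance, while USB 3.0 is ruled out by the 50 m cable run.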

Size

Today the most popular compact camera size is around 30 mm on each side. Miniaturization continues, and there is now a new class of bare board cameras without housing that are only 6 mm thick. These cameras suit space-constrained and cost-sensitive applications in the embedded field. They require a different infrastructure: a computer on a chip instead of a PC; ARM processor architecture instead of x86; and Linux, Windows IoT or Android instead of Windows. Some setups can use the USB 3.0 interface, but MIPI and LVDS-based interfaces allow more flexibility and compactness.
