Compression Technology & Industrial Cameras

Algorithms for image data compression follow the mechanisms of human eyesight and human image interpretation.

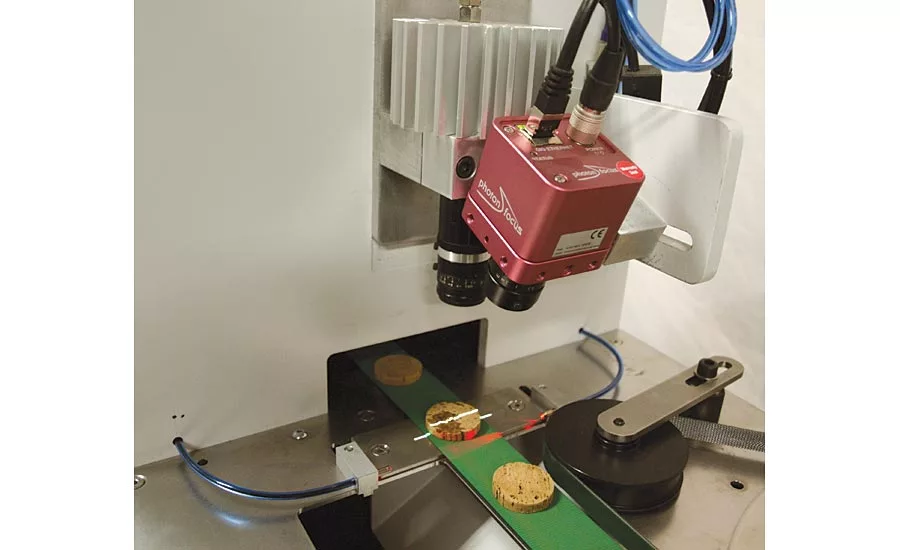

Cork Inspection. Combined color 2D/3D inspection with white LED line projector and a DR1 color camera. Source: M3C Industrial, Automation&Vision, Spain

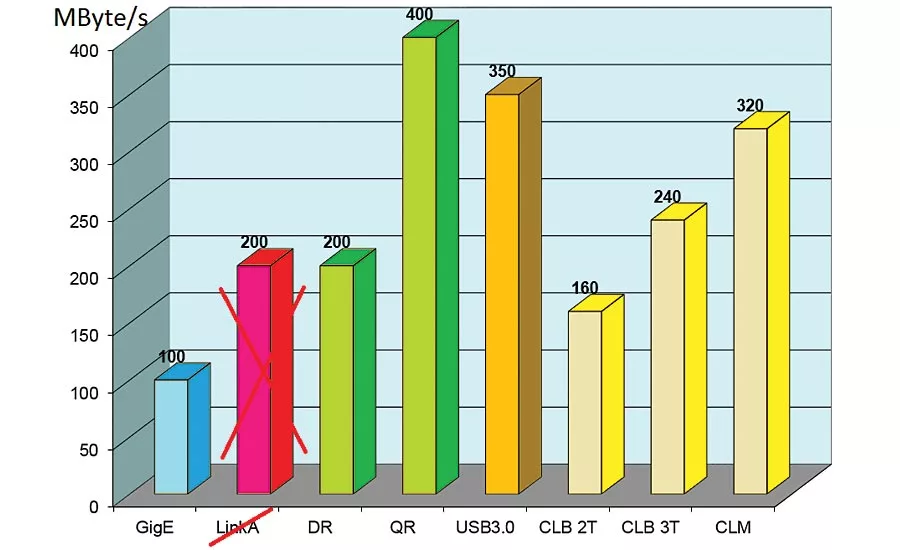

Comparison of transfer rates of different interfaces available for industrial image processing. (LinkA – GigE with link aggregation, DR – DoubleRate technology, QR – QuadRate technology, CLB 2T – CameraLink base dual tap, CLB 3T – CameraLink base triple tap, CLM – CameraLink Medium)

High bandwidth is essential when transmitting large data volumes in image processing systems. However, available interface technologies such as GigE or CoaXPress are often the bandwidth bottleneck. Pre-processing the image in the camera and applying data compression can mitigate this bottleneck.

When implementing data compression technologies in industrial applications, the following questions arise: Are there suitable technologies available for industrial image processing? Are these technologies already established, or do they sit at the margins of mainstream technology? When investigating these issues, we discover that data compression technology is already all around us. Audio codecs (MP3, Opus, etc.), image codecs (JPEG, JPEG 2000, JPEG-LS, etc.) and video codecs for TV and security applications (MPEG, H.264, H.265, etc.) are ubiquitous in our daily life.

The introduction of data compression technologies in industrial image processing is held back by reservations that important information could be lost during compression. However, algorithms for image data compression follow the mechanisms of human eyesight and human image interpretation. They can successfully eliminate redundant or irrelevant data in images without reducing the relevant information needed for industrial image processing.

When implementing compression technology in industrial cameras, you can either rely on chips widely available in the consumer industry or use dedicated compression cores available for FPGAs. A performance analysis of available dedicated chipsets has revealed that they cannot be successfully adapted to the high data volumes produced by the CMOS sensors used in industrial image processing. Their requirements for external memory and power consumption are too high for industrial cameras. Even the FPGA IP cores that are available and used in the consumer industry cannot be fully adapted to meet these requirements. Existing consumer technologies can therefore only act as a guideline for developing a suitable technology for industrial image processing.

What are the basic requirements for a data compression technology to be used in industrial image processing? Methods should work in real time with low latency and provide a good balance between image quality and utilization of FPGA resources. High image quality should be maintained by using mathematically or visually lossless data compression. The methods should be adaptable to monochrome images as well as color images based on a Bayer pattern. They should implement I-frames (intraframe compression) for fast access to individual images during processing. External memory usage should be kept to a minimum to achieve low latency. Furthermore, the method should be parallelizable for use with multi-tap CMOS image sensors. Another core requirement is to keep a steady compression rate independent of the contents of the image. Finally, the standardized requirements for transfer protocols in industrial image processing must be met. For GigE solutions, the GigE Vision and GenICam standards are mandatory, and compatibility with multi-camera systems must be ensured.

The first project-related implementation used a JPEG FPGA core. This core was used successfully with compression rates of 8:1 and 12:1. However, because the JPEG compression algorithm can produce block artefacts in high compression modes, the industry has reservations about using this method. Mathematically lossless compression methods are not feasible either, because achievable compression rates vary with the image contents. Even when utilizing a lot of memory, such a method cannot guarantee to meet bandwidth restrictions when compressing images with high-frequency contents. Some lossless compression methods utilize differential pulse code modulation (DPCM). The basic idea of DPCM is to encode the differences between successive signal values rather than the values themselves. Most signal values in an image correlate with the values that follow them. These redundancies can be utilized for image compression. However, this is a predictive method in which the compression rate is highly dependent on the chosen predictor.
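The content dependence described above can be illustrated with a small sketch. It is not the implementation discussed in this article: it assumes the simplest possible predictor (the previous pixel in the row) and uses `zlib` purely as a stand-in for an entropy coder. The function names and the two test rows are hypothetical.

```python
import random
import zlib


def dpcm_residuals(row):
    """Replace each pixel by its difference to the previous pixel (mod 256)."""
    prev = 0
    out = bytearray()
    for pixel in row:
        out.append((pixel - prev) % 256)
        prev = pixel
    return bytes(out)


def dpcm_reconstruct(residuals):
    """Invert dpcm_residuals by accumulating the differences (mod 256)."""
    prev = 0
    out = bytearray()
    for r in residuals:
        prev = (prev + r) % 256
        out.append(prev)
    return bytes(out)


def lossless_ratio(row):
    """Compression ratio after DPCM plus zlib (zlib is only a stand-in coder)."""
    compressed = zlib.compress(dpcm_residuals(row), 9)
    return len(row) / len(compressed)


rng = random.Random(0)  # fixed seed so the example is repeatable
smooth_row = bytes((i // 4) % 256 for i in range(4096))     # slowly rising ramp
noisy_row = bytes(rng.randrange(256) for _ in range(4096))  # high-frequency content

print("smooth row ratio:", round(lossless_ratio(smooth_row), 2))
print("noisy row ratio: ", round(lossless_ratio(noisy_row), 2))
```

Both rows are recovered bit for bit, but the smooth row shrinks dramatically while the noisy row does not shrink at all. A lossless scheme of this kind therefore cannot promise a fixed output bandwidth.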

The DPCM implementation features a compression rate of 1.92:1 (hence the name DoubleRate technology, or DR for short). This implementation can process monochrome images as well as color images based on a Bayer pattern. The data output format is predefined and suitable for use with the GigE Vision standard and for storing image data on standard storage media in personal computers. Image deterioration is prevented by periodically transmitting so-called support points. With a multi-tap FPGA implementation, this method achieves a very good balance between resource usage and image quality. Decompressing DPCM-compressed data is substantially easier than compressing it, which enables real-time decompression on the CPU of the host computer.
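To make the role of support points concrete, here is a minimal sketch of a fixed-rate DPCM coder. The article does not describe the actual DR data format, so everything here is an assumption chosen for illustration: 8-bit pixels, 4-bit quantized differences, a raw support point every 16 pixels, and the names `dpcm_encode`, `dpcm_decode` and `fixed_ratio`.

```python
def clamp(value, low, high):
    return max(low, min(high, value))


def dpcm_encode(row, period=16, step=2):
    """Encode a row as raw support points plus 4-bit quantized differences."""
    codes = []
    recon = 0  # the encoder tracks what the decoder will reconstruct
    for i, pixel in enumerate(row):
        if i % period == 0:
            codes.append(("S", pixel))  # support point: raw 8-bit value
            recon = pixel
        else:
            q = clamp(round((pixel - recon) / step), -8, 7)  # fits in 4 bits
            codes.append(("D", q))
            recon = clamp(recon + q * step, 0, 255)
    return codes


def dpcm_decode(codes, step=2):
    """Rebuild the row; every support point resets any accumulated error."""
    row = []
    recon = 0
    for kind, value in codes:
        if kind == "S":
            recon = value
        else:
            recon = clamp(recon + value * step, 0, 255)
        row.append(recon)
    return row


def fixed_ratio(codes, bits_per_pixel=8):
    """Ratio of raw bits to coded bits (8 bits per support point, 4 per difference)."""
    coded_bits = sum(8 if kind == "S" else 4 for kind, _ in codes)
    return len(codes) * bits_per_pixel / coded_bits


row = [100 + i for i in range(64)]  # hypothetical smooth test row
codes = dpcm_encode(row)
decoded = dpcm_decode(codes)
print("ratio:", round(fixed_ratio(codes), 3))
print("max error:", max(abs(a - b) for a, b in zip(row, decoded)))
```

In this toy scheme the output size depends only on the row length, never on the image contents, and decoding is a single addition per pixel. The support points cost extra bits, which is why the ratio here falls slightly short of 2:1.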

Many applications, such as multi-camera systems for motion analysis, combine 2D and 3D inspection methods. Goal-line systems at the 2014 soccer World Cup in Brazil successfully used this method for image data compression, which helped make the technology a success.

The DR technology was enhanced by developing the QuadRate (QR) technology. The QR technology guarantees a fixed compression rate of 4:1 and is best suited for high-speed applications. It uses a patented wavelet-based transformation codec with strict compression rate control. This technology is featured in the HD camera series QR1-D2048x1088-384 and other cameras for monochrome, NIR and color. At full resolution of 2040x1088 pixels these cameras perform at 169 fps. At a reduced resolution of 1024x1024 pixels a frame rate of 358 fps is achieved without any loss of information within the compressed images. These cameras are also suited for multi-camera systems, are network compatible without restrictions, are 100% compatible with the GigE Vision and GenICam standards, and are easy to implement.
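A quick back-of-the-envelope calculation shows why a fixed 4:1 rate matters at these frame rates. The sketch below assumes 8 bits per pixel and a nominal 1000 Mbit/s GigE link; neither figure is stated in the article, and real usable GigE throughput is somewhat lower because of protocol overhead.

```python
GIGE_NOMINAL_MBIT = 1000  # assumed nominal link speed, ignoring protocol overhead


def raw_rate_mbit(width, height, fps, bits_per_pixel=8):
    """Uncompressed sensor data rate in Mbit/s (8 bits per pixel assumed)."""
    return width * height * fps * bits_per_pixel / 1e6


def fits_link(width, height, fps, compression=4.0, link_mbit=GIGE_NOMINAL_MBIT):
    """True if the compressed stream stays below the assumed link speed."""
    return raw_rate_mbit(width, height, fps) / compression < link_mbit


for width, height, fps in [(2040, 1088, 169), (1024, 1024, 358)]:
    raw = raw_rate_mbit(width, height, fps)
    print(f"{width}x{height} @ {fps} fps: raw {raw:.0f} Mbit/s, "
          f"4:1 {raw / 4:.0f} Mbit/s, fits GigE: {fits_link(width, height, fps)}")
```

Under these assumptions both operating points produce roughly 3 Gbit/s of raw data, about three times what a single GigE link can carry, and roughly 750 Mbit/s after 4:1 compression.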
