Measurement

The Definition of a Fool is a Drowning Man Who Tries to Keep It a Secret

A Story on Purchasing the Wrong Equipment and Keeping It.

Image Source: Lemon_tm / iStock / Getty Images Plus

If you were drowning and saw nearby people who could help, would you yell for help?

At a confidence level of 99.99 % or higher, we can assume that almost everyone would yell, right?

However, what about those who purchased the wrong calibration equipment?

While the stakes might not always be as immediate as drowning, choosing the wrong calibration equipment can have similarly disastrous consequences.

Maybe they waste hundreds of hours using this equipment to optimize their process. What if its measurements feed a production decision to accept or reject parts? What if those measurements reject 10 % of the production lot? Is that good?

In this scenario, someone is not physically drowning, though they might be slowly bankrupting the company that employs them. They may be contributing to a mass layoff, a poor financial outcome, or worse, passing a known bad product as good because they cannot measure it.

They might suspect that they acquired the wrong equipment but be reluctant to say so, out of fear of management repercussions or ego. Let's be honest: few people enjoy acknowledging mistakes, yet those who can admit to errors often contribute significantly to any organization. After all, if we don't acknowledge our mistakes, how can we expect to learn?

Think about those you know in quality roles, or if you're in such a position, consider how often you reevaluate your decisions.

Choosing the wrong equipment or ignoring proper calibration is like drowning in a sea of unseen problems. Poorly measured products can lead to massive recalls, damaged reputations, and even loss of life.

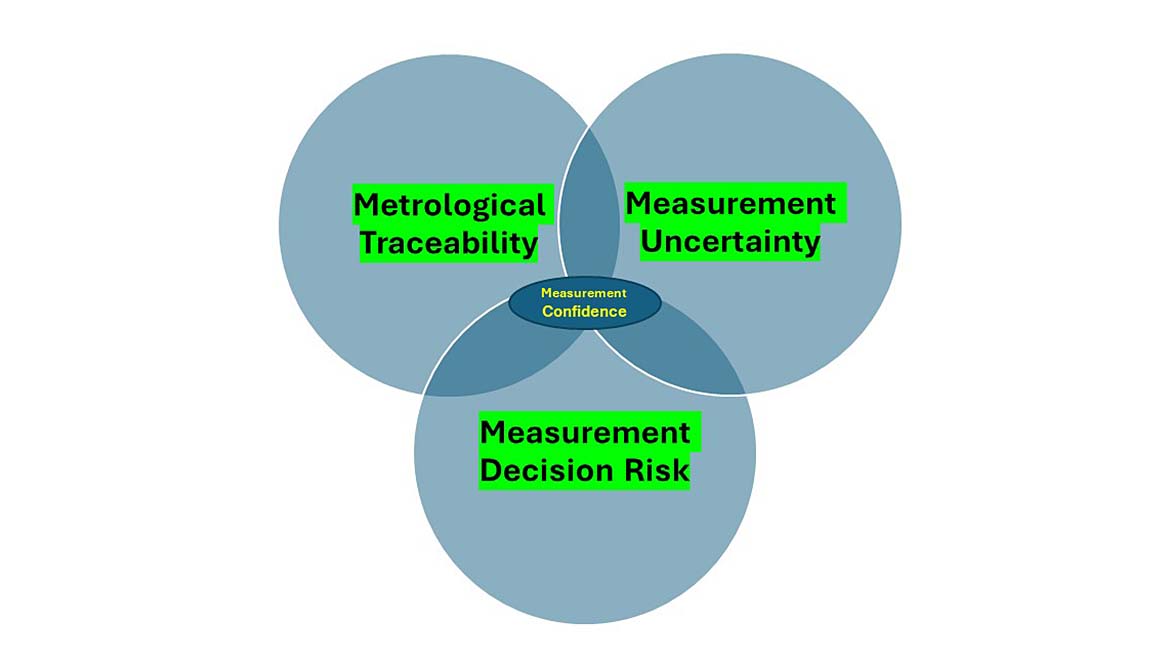

We can avoid these mistakes by addressing what is essential for a meaningful measurement, which involves following the three pillars of measurement.

Three Pillars of Measurement:

- Measurement Uncertainty: This refers to the extent to which measurements can vary and provides an estimate of the confidence in a measurement result. A robust evaluation of measurement uncertainty supports the next two pillars and is crucial for decision-making because it helps users understand the reliability of a measurement.

- Metrological Traceability: This involves ensuring that measurements are traceable to recognized standards, and ultimately to the SI units, through a clear and documented chain of measurement. In some cases, traceability to the SI is indirect (e.g., testing laboratory measurement uncertainty).

Note: The attainment of metrological traceability in the measurements you conduct is contingent on the proper calculation of measurement uncertainty at every preceding tier leading up to the specific measurements being made.

- Measurement Decision Rules: Decision rules determine whether a measurement result is fit for its intended purpose considering measurement uncertainty (more justification for robust evaluation of measurement uncertainty). These rules should consider acceptable risks (depending on the application), ensuring that measurement results are used to make conformity decisions.

When correctly addressed, these pillars ensure high confidence in measurement results.
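As a rough illustration of the third pillar, the sketch below shows one common form of decision rule: accept an item only if its measured value falls inside the tolerance reduced by a guard band. The function name and the sample readings are hypothetical; the 1,500 Ω nominal, ±0.2 Ω tolerance, and 0.02 Ω guard band are borrowed from the resistor case study later in this article. It is a minimal sketch under those assumptions, not a prescribed rule.

```python
def conformity_decision(measured: float, nominal: float,
                        tolerance: float, guard_band: float) -> str:
    """Accept only if the measured deviation is inside the guarded limit."""
    acceptance_limit = tolerance - guard_band  # tightened acceptance limit
    deviation = abs(measured - nominal)
    return "accept" if deviation <= acceptance_limit else "reject"


# Hypothetical readings against a 1500 ohm +/- 0.2 ohm specification
# with a 0.02 ohm guard band (acceptance limit of +/- 0.18 ohm).
first = conformity_decision(1500.15, 1500.0, 0.2, 0.02)
second = conformity_decision(1500.19, 1500.0, 0.2, 0.02)
print(first, second)
```

The second reading is inside the specification but inside the guard band as well, so this rule rejects it. That is the trade-off a guard band makes: fewer false accepts at the price of more false rejects.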

Inaccurate, ill-defined conformity measurements can have serious consequences, such as engineering disasters, financial losses, and potential loss of life.

Measurements play a critical role in decision-making across various industries. When measurements are accurate, traceable, and have well-defined decision rules, it instills confidence in the results, leading to safer products and services and reducing risks for consumers and suppliers alike.

Both consumers and suppliers of measurement services should be aware of measurement risk and the importance of adhering to consensus standards and guidance documents for better decision-making. When measurement results ensure confidence, it reduces risks and ensures the quality and safety of products and services.

Bad measurements can lead to big problems. Just think about some of the disasters due to poor decision-making.

The BP oil refinery explosion in Texas, the Hubble telescope's focus issue, the Space Shuttle explosion, a Stealth Bomber crash, Cox Health's overdosing of 152 cancer patients, Paris Trains, and another BP oil rig disaster are all examples of tragedies that could have been prevented with better measurement practices.

The 2016 film Deepwater Horizon is an excellent film showcasing how a blowout caused an explosion that killed 11 people and led to a catastrophic oil leak.

Note: While several examples are extreme and represent worst-case scenarios, it's essential to recognize that numerous practical situations exist where effective decision rules prevent product recalls, enhance safety, and can result in substantial cost savings for the companies that employ them judiciously.

The essence of decision risk and conformity assessment is ensuring the final product's quality, accuracy, and durability. When decision risk is appropriately applied, manufacturers and society stand to gain billions of dollars over time by enjoying high-quality products that withstand the test of time instead of those prone to malfunction after a few years or months.

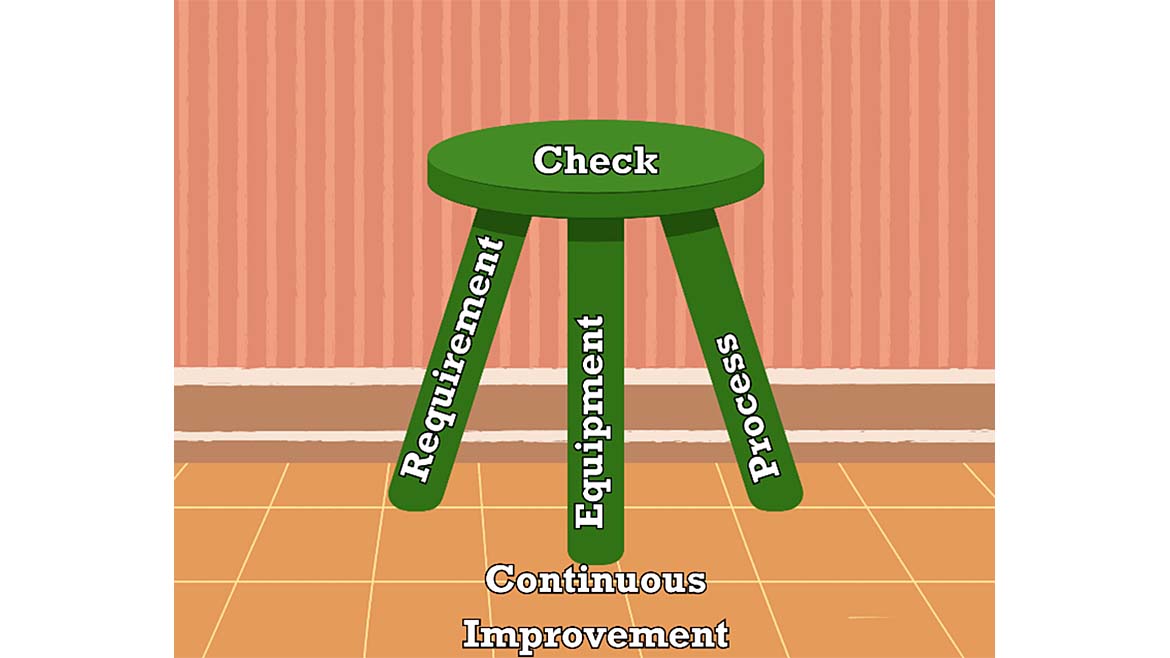

So, how can we avoid these issues? Here are five simple rules to reduce your measurement risk:

- Understanding the Right Requirements: This first rule involves knowing what is needed to accomplish the task. The more accurate the system, the higher the cost to procure and calibrate the equipment. Buying the wrong equipment often leads to more frequent calibrations, which cost more and reduce availability, and the results can be extremely costly. Before any purchase, we recommend discussing the intended equipment with those who will calibrate it; technicians often know which equipment frequently fails calibration. Most wrong choices could easily be avoided if metrology were consulted before procurement.

Note: All of the authors have seen, and heard from others, cases where a predecessor bought the wrong equipment and the organization is now stuck with it. Amazingly, some people know about the issues yet management will not correct them, and others know how often the wrong equipment gets chosen.

- Purchasing the Right Equipment: Not all tools are created equal. Make sure you're using the right equipment for the job. This includes meeting the appropriate measurement uncertainty and metrological traceability criteria and using qualified calibration providers. Using the wrong equipment or calibration provider can lead to inaccurate measurements and more significant problems. Continually evaluate externally provided products and services to minimize the risks involved.

- Have the Right Processes: This rule requires a training program and proof of training (records) to validate the individuals calibrating or using the equipment. A process should be in place that ensures all aspects of the standards are being carefully satisfied in the calibration process, such as the use of the proper auxiliary equipment (test adapters, leads, etc.). The robustness of the process should include good practices such as control chart implementation and calibration interval evaluation.

- Check Your Work: Technicians are human, and humans make mistakes. Always double-check your measurements to make sure they're accurate. It's easy to overlook a small error, but that small error can have big consequences. So, take the time to verify your work. A good verification and validation process will aid, as well as a good checklist.

- Stay Vigilant: Don't let success make you complacent. Always be on the lookout for potential risks and take the necessary precautions to prevent them. Control chart monitoring processes are a good practice for ensuring and monitoring these deviations. It's easy to let your guard down when things are going well, but that's when mistakes can happen. So, stay alert and stay safe.

The first three rules are the legs of a stool: neglect one, and the stool will be hard to sit on. Checking the work helps ensure accuracy, and staying vigilant through continual improvement is the floor that keeps everything in place.

Building trust in your measurements: Control charts, proficiency testing, and the cost of errors

Strong foundations are essential, and a robust measurement assurance program (MAP) is key in the world of measurement. This program leverages control charts and a well-defined proficiency testing (PT) or interlaboratory comparison (ILC) plan to ensure the accuracy and reliability of your data.
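To make the control chart idea concrete, here is a minimal sketch of an individuals chart for a check standard. It assumes the conventional moving-range estimate of sigma (average moving range divided by 1.128) and three-sigma limits. The readings and the function name are hypothetical, invented only for illustration.

```python
from statistics import mean


def individuals_limits(readings):
    """Return (LCL, center line, UCL) for an individuals control chart."""
    center = mean(readings)
    # Moving ranges between consecutive readings.
    moving_ranges = [abs(b - a) for a, b in zip(readings, readings[1:])]
    # 1.128 is the d2 constant for a moving range of two observations.
    sigma_hat = mean(moving_ranges) / 1.128
    return center - 3 * sigma_hat, center, center + 3 * sigma_hat


# Hypothetical check-standard readings in ohms (not data from this article).
check_standard = [1500.01, 1499.98, 1500.03, 1500.00,
                  1499.97, 1500.02, 1499.99, 1500.01]
lcl, cl, ucl = individuals_limits(check_standard)

new_reading = 1500.15  # hypothetical new measurement of the check standard
out_of_control = not (lcl <= new_reading <= ucl)
print(f"LCL={lcl:.3f}  CL={cl:.3f}  UCL={ucl:.3f}  out of control: {out_of_control}")
```

A reading outside the limits is a prompt to investigate the measurement system before more product decisions are made with it.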

The goal? Safe and effective products. By following established guidelines, you'll gain clarity on the necessary equipment, calibration standards, and procedures needed to validate the uncertainty associated with your measurements.

Remember: Early detection is crucial. An error missed at one stage can be ten times more expensive to fix later. Implementing checks and balances through a MAP minimizes this risk.

Cutting corners can be costly. Choosing the wrong equipment, whether a custom solution or an unsuitable commercial option, can lead to significant financial losses. Saving $15,000 on equipment might result in $1 million worth of scrap products due to inadequate control.

So, how do we translate measurement risk into financial terms?

JCGM 106:2012 provides valuable guidance on using measurement uncertainty to mitigate risk and improve yield. However, using these tools effectively requires a proper understanding of how to perform the calculations.

Section 9 of that document includes an example based on manufacturing a precision resistor, in which the conformance probability is around 90 %. Thus, about 10 % of the items would be nonconforming.

Imagine your company is making a product and having to reject 10 % of everything produced. What if you could follow the above rules and guidance to limit the reject rate to under 1 %? What would you save by doing so? Whatever your present failure rate, there is likely a solution involving better measuring equipment and process improvements that can lower the risk of false rejections.

We often see that once a company has purchased something, someone wants to validate that purchase by continuing to use the equipment (drowning without asking for help). Measuring and testing equipment can be a large expense, and once it is purchased, for whatever reason, the determination to keep using it can be strong. Management will often create too much friction around purchasing something better when a cheaper alternative seems good enough.

Let's examine a hypothetical case study, inspired by the JCGM resistor example, to illustrate our point. Unfortunately, it represents a common issue that affects numerous companies globally and potentially leads to billions in lost revenue.

Case Study: Cost Cutting Leads to Quality Issues in Resistor Manufacturing

Background:

This case study describes a scenario where a manufacturer of resistors faced challenges due to prioritizing cost-cutting measures over ensuring accurate measurement processes using the appropriate measurement capability index (Cm), also known as Test Uncertainty Ratio (TUR).

Problem:

The company needed to manufacture 1,500 ohm resistors with a tolerance of ± 0.2 ohms (approximately ± 0.013%). To minimize costs, they opted for a mid-tier digital multimeter with a resolution of 7 ½ digits, assuming it would be sufficient for their needs.

Using Global Risk Calculations to Solve the Problem:

Cost efficiency and high manufacturing yield are not mutually exclusive. Both are within reach if the process is appropriately vetted while still in the planning stages. We will take a hypothetical look at the potential rejection/scrap rate of manufacturing a simple resistor. This resistor isn't particularly interesting and has rather unimpressive specifications:

Nominal value = 1500 Ω

Specification = ±0.2 Ω (±0.0133 %)

Expected in-tolerance probability (itp) = ~90 % (assumes roughly 10% will fail)

Expected process uncertainty = ±0.12 Ω
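The ~90 % in-tolerance probability can be checked with a few lines of Python. This is a sketch under two assumptions that the article does not state explicitly: the process is normally distributed and centered on the nominal value, and the ±0.12 Ω process uncertainty is one standard deviation. The function name is ours.

```python
import math


def in_tolerance_probability(tolerance: float, process_sigma: float) -> float:
    """P(|X - nominal| <= tolerance) for a centered normal process."""
    return math.erf(tolerance / (process_sigma * math.sqrt(2.0)))


# Assumed: +/-0.2 ohm specification, 0.12 ohm process standard deviation.
itp = in_tolerance_probability(tolerance=0.2, process_sigma=0.12)
print(f"in-tolerance probability = {itp:.1%}")
```

Under these assumptions the result is close to 90 %, which agrees with the expected itp listed above.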

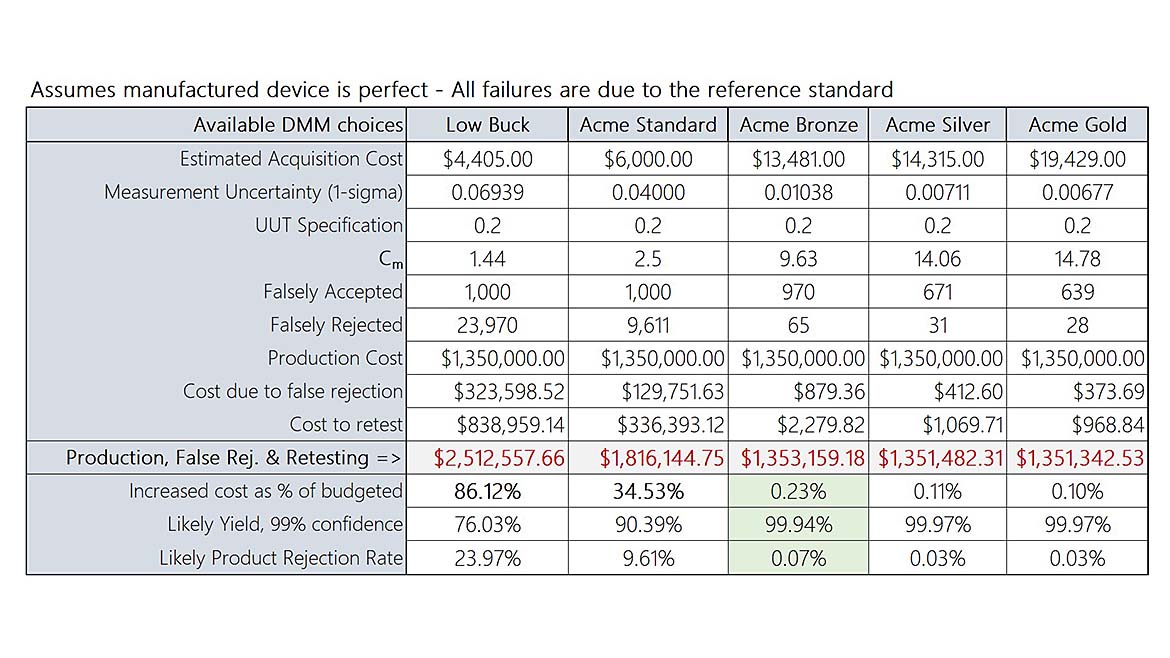

Measurement Capability, Cm (a.k.a TUR) = 2.5 (±0.04 Ω measurement uncertainty)
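The relationship between the 2.5 index and the ±0.04 Ω figure can be sketched as follows, assuming the JCGM 106:2012 definition of the measurement capability index (the full tolerance width divided by four times the standard measurement uncertainty) and assuming the ±0.04 Ω value is a standard (k = 1) uncertainty. The function and parameter names are illustrative.

```python
def measurement_capability_index(tolerance_half_width: float,
                                 standard_uncertainty: float) -> float:
    """Cm = (T_U - T_L) / (4 * u_m), with T_U - T_L = 2 * half-width."""
    return (2.0 * tolerance_half_width) / (4.0 * standard_uncertainty)


# Assumed: +/-0.2 ohm specification and u_m = 0.04 ohm (k = 1).
cm = measurement_capability_index(tolerance_half_width=0.2,
                                  standard_uncertainty=0.04)
print(f"Cm = {cm:.2f}")
```

With an expanded uncertainty U = 2 × 0.04 Ω = 0.08 Ω, the same number falls out of the familiar ratio of tolerance to expanded uncertainty, 0.2 / 0.08 = 2.5.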

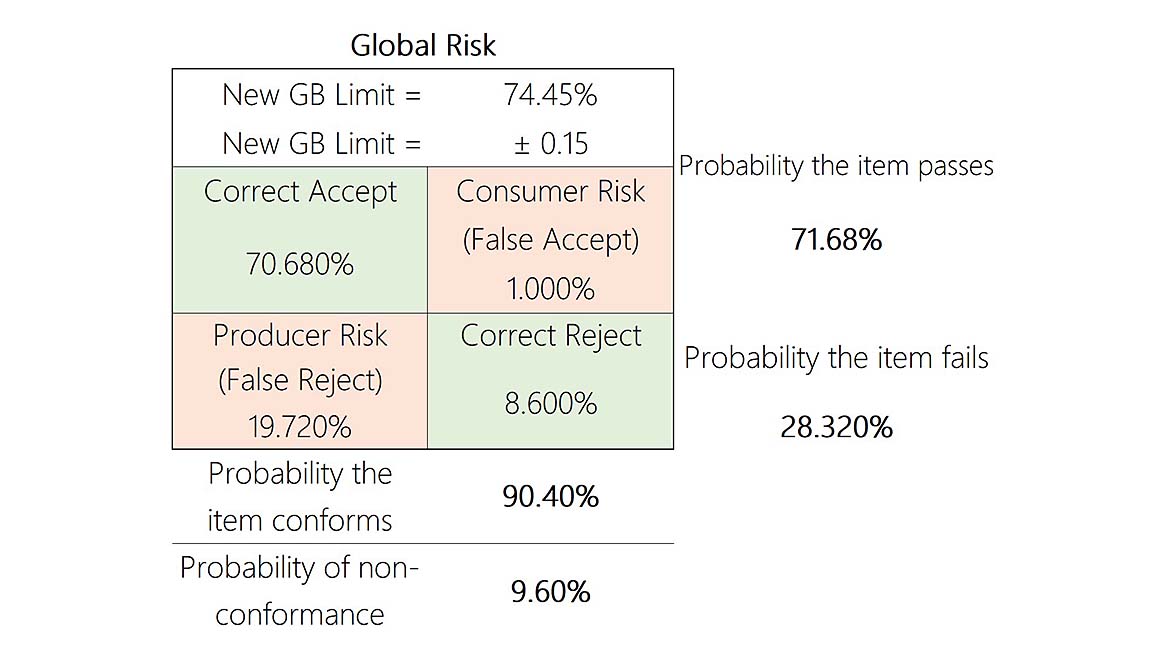

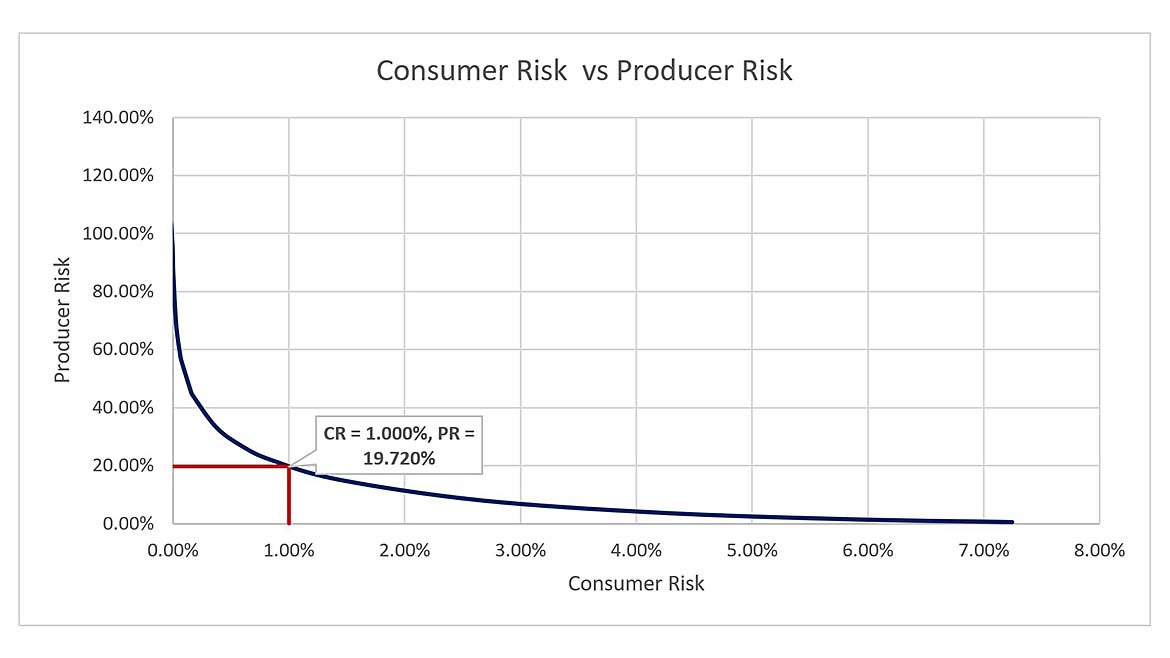

The manufacturer has a previously purchased high-speed Digital Multimeter (DMM) and decided to use this instrument instead of making a new purchase. The monthly yield is expected to be about 100,000 pieces. Rather than buy new equipment, the manufacturer decided to tighten the acceptance limits from ±0.2 Ω to ±0.18 Ω, applying a guard band to keep the Probability of False Accept (PFA) seen by the consumer to 1 % or less. Without tightening the acceptance limits to ±0.18 Ω, the consumer would likely see ~10 % field failures.
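The effect of the guard band can be explored with a small numerical integration. The sketch below is our own simplified model, not the authors' tool: it assumes a normally distributed process centered on nominal with a 0.12 Ω standard deviation, an independent normal measurement error with a 0.04 Ω standard uncertainty, a ±0.2 Ω specification, and ±0.18 Ω acceptance limits. All function names are illustrative.

```python
import math


def norm_pdf(x: float, sigma: float) -> float:
    return math.exp(-0.5 * (x / sigma) ** 2) / (sigma * math.sqrt(2.0 * math.pi))


def norm_cdf(z: float) -> float:
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def global_risk(tol, accept, sigma_p, u_m, steps=20000):
    """Return (correct accept, false accept, false reject, correct reject)."""
    span = 8.0 * sigma_p                 # integrate over +/- 8 process sigmas
    dx = 2.0 * span / steps
    ca = fa = fr = cr = 0.0
    for i in range(steps):
        x = -span + (i + 0.5) * dx       # true deviation (midpoint rule)
        weight = norm_pdf(x, sigma_p) * dx
        # Probability the measured value lands inside the acceptance limits.
        p_accept = norm_cdf((accept - x) / u_m) - norm_cdf((-accept - x) / u_m)
        if abs(x) <= tol:                # item truly conforms
            ca += weight * p_accept
            fr += weight * (1.0 - p_accept)
        else:                            # item truly nonconforming
            fa += weight * p_accept
            cr += weight * (1.0 - p_accept)
    return ca, fa, fr, cr


ca, fa, fr, cr = global_risk(tol=0.2, accept=0.18, sigma_p=0.12, u_m=0.04)
for label, p in [("correctly accepted", ca), ("falsely accepted", fa),
                 ("falsely rejected", fr), ("correctly rejected", cr)]:
    print(f"{label:>18}: {p:7.2%}  (~{p * 100_000:,.0f} of 100,000)")
```

Under these assumptions the false-accept probability comes out near the 1 % target, at the price of a false-reject rate several times larger.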

The cost to manufacture and test each one of these resistors is $13.50, while the cost to retest before scrapping is $35. Before going further, the reader may wonder why the cost of retesting the failed production item is significantly higher.

There are several reasons for the cost increase: the time taken to remove the failed item from the line, filling out the retest tag, transporting it to the higher-level lab, technician time/salary performing the additional measurement, the final disposition of the item (did it indeed pass/fail), and the return to production or scrap.

This is where Global Risk calculations can shine, shedding light on the production process and justifying the purchase of higher-quality processes and/or instrumentation. Taking what we know, stated above (specs, tolerances, and cost), the failure/scrap rate can be accurately predicted. If the manufacturer continues with the plan to build these resistors as-is, with a total process uncertainty of ±0.12 Ω, the metrics will likely be:

So, how does this translate into lost profit? From the chart above, we see the following:

| Outcome | Count |
| --- | --- |
| Resistors Produced | 100,000 |
| Correctly Accepted | 83,604 |
| Falsely Accepted | 1,000 |
| Total Accepted | 84,604 |
| Falsely Rejected | 6,838 |
| Correctly Rejected | 8,558 |
| Total Rejections | 15,396 |

| Cost | Amount |
| --- | --- |
| Cost per item | $13.50 |
| Cost to retest | $35.00 |
| (1) Initial Build + Testing Production Cost (100,000 x 13.5) | $1,350,000 |
| (2) Cost due to False & True Rejections | $207,846.48 |
| (3) Cost to retest (15,396 x 35) | $538,876.81 |
| False reject + retest cost (additional cost), (2) + (3) | $746,723.29 |
| Total cost for (1) + (2) + (3) | $2,096,723 |

Note: The counts of true and false rejections are all-inclusive. Therefore, all rejections are scrutinized by retesting them at a secondary inspection station.

*Making matters worse, retesting found that 8,857 (55.58%) of the items that initially failed passed secondary inspection, costing ~$310,000 needlessly.
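The cost figures above can be re-derived from the rejection counts and the two unit costs. The sketch below uses the whole-number counts from the article, so its totals come out a few dollars lower than the published figures, which presumably carry fractional counts. Variable names are ours.

```python
units_produced = 100_000
cost_per_item = 13.50      # build + initial test, dollars
retest_cost = 35.00        # secondary inspection, dollars per rejected item
false_rejects = 6_838
true_rejects = 8_558

total_rejects = false_rejects + true_rejects           # every reject is retested
build_cost = units_produced * cost_per_item             # (1) initial build + test
reject_cost = total_rejects * cost_per_item             # (2) value of rejected items
retest_total = total_rejects * retest_cost              # (3) retest cost
additional_cost = reject_cost + retest_total            # (2) + (3)
total_cost = build_cost + additional_cost               # (1) + (2) + (3)

print(f"Total rejections:   {total_rejects:,}")
print(f"Additional cost:    ${additional_cost:,.2f}")
print(f"Total cost:         ${total_cost:,.2f}")
print(f"Overrun vs. (1):    {additional_cost / build_cost:.1%}")
```

Swapping in the rejection counts for a different acceptance limit or a better instrument gives a quick way to compare equipment options in dollars rather than in percentages.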

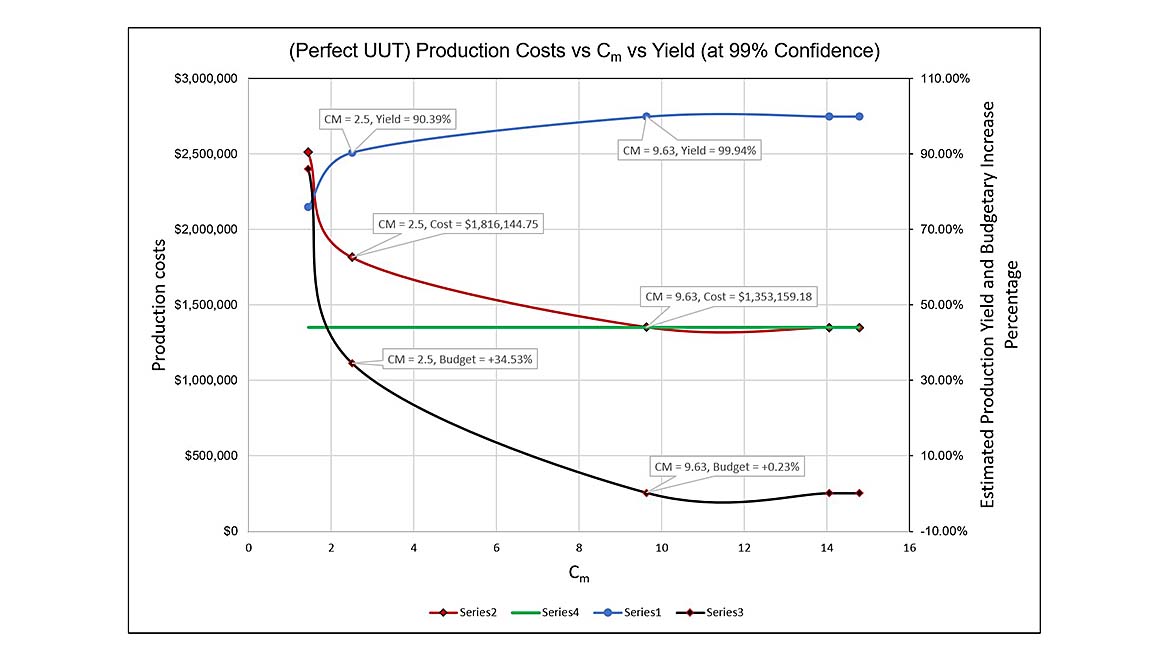

A very large part of this equation is the reduced Cm of 2.5. Although the actual build process of the resistor alone may contribute significantly to the rework cost (itp ~90 %), how could selecting better test equipment help identify true problems? As stated, the itp (In Tolerance Probability) was expected to be ~90 %, but this number may be completely underreported due to poor instrumentation. The graph below illustrates our baseline assumptions.

The higher the Cm (9.63), the higher the yield (99.91 %) and the smaller the overrun of the base budget (+0.23 %). Conversely, the lower the Cm (2.5), the lower the yield (90.1 %) and the larger the overrun of the base budget (+35.53 %).

There is more going on than simply exchanging DMMs. Perhaps there is a throughput (speed) issue or concerns about the overall maintenance of a higher echelon reference DMM.

This demonstrates that by taking the uncertainty of the reference equipment into account before deciding to put a test system into operation, virtually all new sources of error will likely be due to the unit under test (UUT) itself. Taking the reference system uncertainty into account will likely save hundreds of thousands of dollars once the decision to produce is greenlighted.

Note: These calculations are based on the manufacturer's specifications and do not consider end-of-period reliability (EOPR).

Miscalculation:

The company calculated a Measurement Capability Index (Cm) of 1.44:1, indicating their measurement process might not reliably distinguish between conforming and nonconforming products.

However, they disregarded this concern and assumed a narrower acceptance zone of ± 0.12 ohms instead of the specified ± 0.2 ohms. This significantly reduced the probability of products falling within the acceptable range.

Consequences:

High rejection rate: Around 30% of resistors failed the global tolerance test of ± 0.12 ohms during production.

False rejections: Upon further investigation, the metrology lab confirmed that less than 0.2% of the rejected products were indeed outside the specified tolerance. This signifies many unnecessary rejections due to an overly stringent internal acceptance zone.

Root Cause:

The primary cause of this issue was prioritizing cost savings over ensuring accurate measurement capabilities. Choosing an inadequate measuring instrument and implementing an unrealistic acceptance zone led to unreliable quality control and excessive product rejections.

Lessons Learned:

Prioritize measurement accuracy: Selecting appropriate measuring equipment based on the required precision is crucial for reliable quality control.

Understand measurement uncertainties: Calculating and interpreting the Measurement Capability Index helps assess the process capability and identify potential issues.

Set realistic acceptance criteria: Acceptance zones should be established based on actual measurement capabilities and industry standards, not solely on cost-cutting efforts.

Invest in proper calibration: Regularly calibrating measuring instruments ensures accuracy and reliability.

Conclusion

In a similar case where Greg worked with one of his clients to fix the reject rate, the company ended up saving millions and the person in charge has since been promoted several times. Thus, making the appropriate changes does not go unnoticed.

There are inadequate measurement practices going on all across the world, and sometimes investing in better equipment can save millions year over year.

The case study presented a cautionary tale of how prioritizing short-term cost savings over robust measurement practices can lead to significant financial losses in the long run. The bitterness of poor quality remains long after the sweetness of low price is forgotten.

By neglecting to:

- Select appropriate measuring equipment.

- Accurately assess measurement uncertainties.

- Establish realistic acceptance criteria.

- Invest in proper calibration.

the company incurred substantial costs due to:

- Excessive product rejections

- Unnecessary retesting

- Potentially undetected nonconforming products

This scenario exemplifies the importance of the three pillars of measurement:

Measurement Uncertainty: Understanding the extent to which measurements can vary and providing an estimate of the confidence in a measurement result.

Metrological Traceability: Ensuring that measurements are traceable to recognized standards creates a clear and documented measurement chain.

Measurement Decision Rules: Determining whether a measurement result is fit for its intended purpose, considering measurement uncertainty and acceptable risks.

Companies can safeguard their financial well-being, product quality, and brand reputation by adhering to these principles and avoiding the temptation to "drown silently" with inadequate measurement practices.

Remember, investing in accurate measurements is an investment in quality, efficiency, and profitability that might save you or your company from drowning.

If anyone wants a copy of our Decision Rules Guidance Document with formulas on how to apply this use case and much more, Greg Cenker can be reached at [email protected] and Henry Zumbrun at [email protected]
