Smart Camera Evolution

The evolving capabilities of cameras with onboard intelligence are creating new opportunities for the industry.

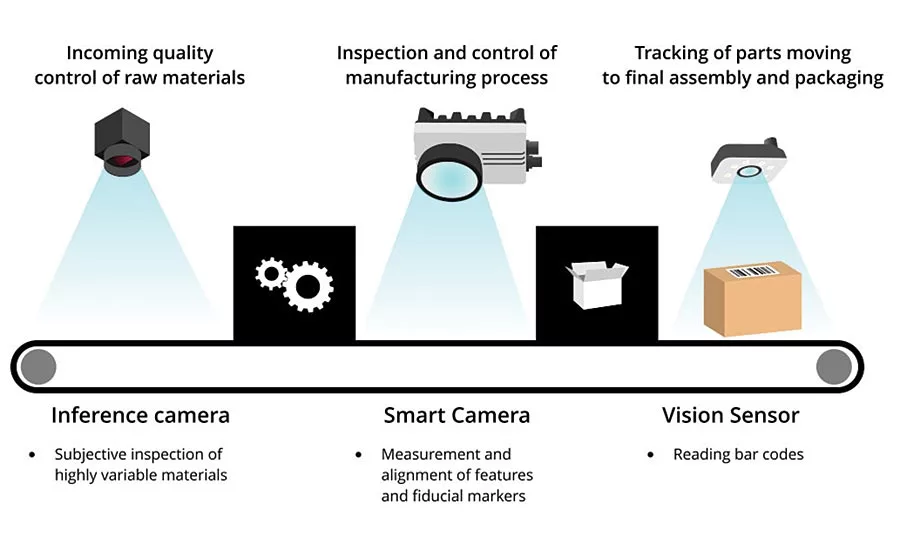

This shows an example of how inference cameras, smart cameras and vision sensors could work together on the same production line.

Smart cameras and vision sensors have been key tools for monitoring and controlling the manufacture and movement of products in industrial environments for many years. These devices integrate image sensors, optics and onboard processing to capture images, interpret them, and then output a result based on that interpretation. Recently, they have been joined by a new class of intelligent camera: the inference camera. This new class of camera uses neural networks trained with deep learning methods to classify and locate objects. This article compares these types of camera and outlines how their evolution is expected to affect the design of industrial systems.

Smart Cameras and Vision Sensors

Vision sensors are typically less powerful and flexible than smart cameras. They are configured for specific applications by adjusting a limited set of parameters; typical applications include barcode reading and checking for the presence or absence of a feature. Smart cameras are more powerful and flexible, but because the tasks they solve are more complex, they require more advanced programming. Both vision sensors and smart cameras can often interface directly with external systems, including programmable logic controllers (PLCs), over an RS-232 serial interface. Many can also connect to PCs over Ethernet.

The divide between vision sensors and smart cameras is eroding, however. As smart cameras leverage developments in embedded systems technology to gain computing power, vision sensors have also become more powerful and flexible. App-style programming is enabling vision sensors to move beyond simple code reading to checking parts for presence or absence, completeness and positioning.

Smart cameras and vision sensors use a rules-based approach to execute their inspection tasks. This approach is well suited to straightforward tasks such as detecting fiducial markers, reading barcodes or taking measurements. It is less suited to complex, subjective inspection tasks, or to tasks where many different and potentially opposing variables must be balanced against each other.
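As a minimal sketch of what "rules-based" means in practice, the Python below implements a hypothetical presence/absence check: a feature is declared present when at least a set number of pixels inside a fixed region of interest fall below a brightness threshold. The class name, parameters and values are illustrative assumptions, not taken from any vision sensor product.

```python
from dataclasses import dataclass
from typing import List

# A grayscale image as rows of 0-255 pixel values (plain lists, no libraries).
Image = List[List[int]]


@dataclass
class PresenceRule:
    """Hypothetical hand-tuned rule: the feature counts as present when
    enough pixels inside a rectangular region of interest (ROI) are darker
    than a fixed threshold."""
    top: int
    left: int
    height: int
    width: int
    threshold: int = 100  # pixels below this value count as "feature"
    min_pixels: int = 4   # dark pixels needed to declare the feature present

    def evaluate(self, image: Image) -> bool:
        dark = 0
        for row in image[self.top:self.top + self.height]:
            for value in row[self.left:self.left + self.width]:
                if value < self.threshold:
                    dark += 1
        return dark >= self.min_pixels


def inspect(image: Image, rule: PresenceRule) -> str:
    # Report only a result string, as a vision sensor would, not the image.
    return "PASS" if rule.evaluate(image) else "FAIL"


# Usage: a bright 6x6 background, with and without a 2x2 dark feature.
empty = [[220] * 6 for _ in range(6)]
with_feature = [row[:] for row in empty]
for r in range(2, 4):
    for c in range(2, 4):
        with_feature[r][c] = 30

rule = PresenceRule(top=1, left=1, height=4, width=4)
print(inspect(with_feature, rule))  # PASS
print(inspect(empty, rule))         # FAIL
```

Every decision in the rule (ROI position, threshold, pixel count) is chosen by hand. That works well for controlled, objective checks, but becomes hard to maintain when many interacting variables or subjective judgments are involved.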

Rise of the Inference Cameras

A new class of camera with onboard intelligence has recently emerged: the inference camera. Rather than being programmed with traditional machine vision software, inference cameras run pre-trained neural networks to carry out inference. Using inference, these cameras can perform high-accuracy object classification and tracking. Additionally, like smart cameras, they can output results alone, eliminating the need to send images to a host PC or board.

Neural networks are trained using deep learning on datasets of labeled images that provide examples of each class of object to be differentiated. During training, the network learns for itself the many criteria on which to base its classification. The complexity of the application determines how much labeled training data is needed to achieve accurate results: a simple inspection application with tightly controlled parameters may require only fifty training images, whereas a more complex application with greater variability may require thousands. Training requires more computing power than an inference camera provides, so it is typically carried out on a PC with a powerful GPU or on a cloud computing platform. The trained neural network is then optimized and uploaded to the inference camera.

Inference cameras can bridge the gap between smart cameras, vision sensors and conventional machine vision cameras, which send complete images to a host system. An inference camera can behave like a smart camera, capturing and processing images and outputting results over GPIO. It can also send results as GenICam chunk data, and send complete images to a host.

Impact on Industry

The evolving capabilities of cameras with onboard intelligence are creating new opportunities for the industry to solve more complex problems and improve efficiency.

Edge processing improves speed and security

Vision sensors, smart cameras and inference cameras are all examples of a trend toward edge processing. Moving image processing away from a central server and toward the edges of a system makes systems faster, more reliable and more secure. When images are processed at their source, decisions can be made locally. This reduces latency by eliminating the need to send complete images to a server for processing and then transmit the results back. Instead, only the result of the image analysis is transmitted to the server for statistical use. These results are generally short strings of numbers or text, which are easy to encrypt and can be transmitted far more quickly, using far less bandwidth, than images.
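The bandwidth argument can be made concrete with some back-of-the-envelope arithmetic. The sketch below assumes, purely for illustration, an uncompressed 2448 x 2048 8-bit monochrome frame, a 1 Gbit/s link with protocol overhead ignored, and a short ASCII result string in a made-up format.

```python
# Rough payload comparison: full image vs. inference result.
# Assumptions (illustrative, not from the article): a 2448 x 2048 8-bit
# monochrome sensor, a 1 Gbit/s link, and a short ASCII result string.
WIDTH, HEIGHT, BYTES_PER_PIXEL = 2448, 2048, 1
LINK_BITS_PER_SECOND = 1_000_000_000


def image_bytes(width: int, height: int, bytes_per_pixel: int) -> int:
    """Size of one uncompressed image frame in bytes."""
    return width * height * bytes_per_pixel


def transfer_seconds(num_bytes: int, link_bps: int) -> float:
    """Idealized transfer time, ignoring protocol overhead."""
    return num_bytes * 8 / link_bps


result = "station=3,class=good,conf=0.98"  # hypothetical result format
result_bytes = len(result.encode("ascii"))
frame_bytes = image_bytes(WIDTH, HEIGHT, BYTES_PER_PIXEL)

print(f"image:  {frame_bytes:,} bytes, "
      f"{transfer_seconds(frame_bytes, LINK_BITS_PER_SECOND) * 1e3:.1f} ms")
print(f"result: {result_bytes} bytes, "
      f"{transfer_seconds(result_bytes, LINK_BITS_PER_SECOND) * 1e6:.2f} us")
print(f"ratio:  about {frame_bytes // result_bytes:,} to 1")
```

Under these assumptions one frame is about 5 MB and takes roughly 40 ms to send, while the result is 30 bytes. Real systems add protocol overhead, compression and encryption, which shift the numbers but still leave a gap of several orders of magnitude.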

Improving the efficiency of subjective inspection

Inference cameras will enable the automation of more complex or subjective inspection tasks that are not possible with smart cameras or vision sensors. Beyond the usual benefits of automated inspection, running the same model at every inspection station gives greater consistency, which will enable process drift to be detected much earlier. Eliminating the variation in inspection criteria between individual human quality assurance inspectors makes trends much easier to identify, and earlier detection enables earlier corrective action.
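The article does not say how drift would be detected. One standard statistical process control technique that fits the description is a one-sided CUSUM (cumulative sum) chart on each station's reject results; the sketch below applies it to hypothetical data, with the baseline reject rate, slack and alarm threshold chosen only for illustration.

```python
from typing import Iterable, Optional


def cusum_drift_index(defects: Iterable[int], baseline_rate: float,
                      slack: float, threshold: float) -> Optional[int]:
    """One-sided (upper) CUSUM on a stream of per-part inspection results.

    defects: 1 if the inspection station rejected the part, else 0.
    Returns the index of the first part at which the accumulated excess
    reject rate exceeds `threshold`, or None if no drift is flagged.
    """
    s = 0.0
    for i, x in enumerate(defects):
        # Add how far this result sits above the expected reject rate,
        # minus a slack allowance; the statistic never drops below zero.
        s = max(0.0, s + (x - baseline_rate - slack))
        if s > threshold:
            return i
    return None


# Hypothetical data: 500 parts at a 2% reject rate, then 200 parts at 10%.
stable = [1 if i % 50 == 0 else 0 for i in range(500)]
drifted = [1 if i % 10 == 0 else 0 for i in range(200)]
alarm = cusum_drift_index(stable + drifted, baseline_rate=0.02,
                          slack=0.01, threshold=2.0)
print(alarm)  # 520
```

Larger slack or threshold values reduce false alarms at the cost of slower detection. The chart is only meaningful if the inspection criterion itself stays fixed, which is the consistency a shared model provides.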

Conclusion

Today, system developers have more options than ever when it comes to the kinds of imaging devices available to them. The evolving capabilities of inference cameras with onboard intelligence will create new opportunities for the industry to solve more complex and subjective problems, improve efficiency and enable more processing at the edge. V&S
