The successful application of machine vision technology depends on a carefully balanced mix of elements. While the hardware components that perform image formation, acquisition, component control, and interfacing are decidedly critical to the solution, machine vision software is the engine “under the hood” that supports and drives the imaging, the processing, and ultimately the results. This discussion details the ways software impacts industrial machine vision systems and how it is applied to achieve a complete solution within different component architectures. We also take a brief look at general design and specification criteria and at current software trends that might contribute to greater reliability in some machine vision tasks for industrial automation.

Background: Machine vision system and software architectures

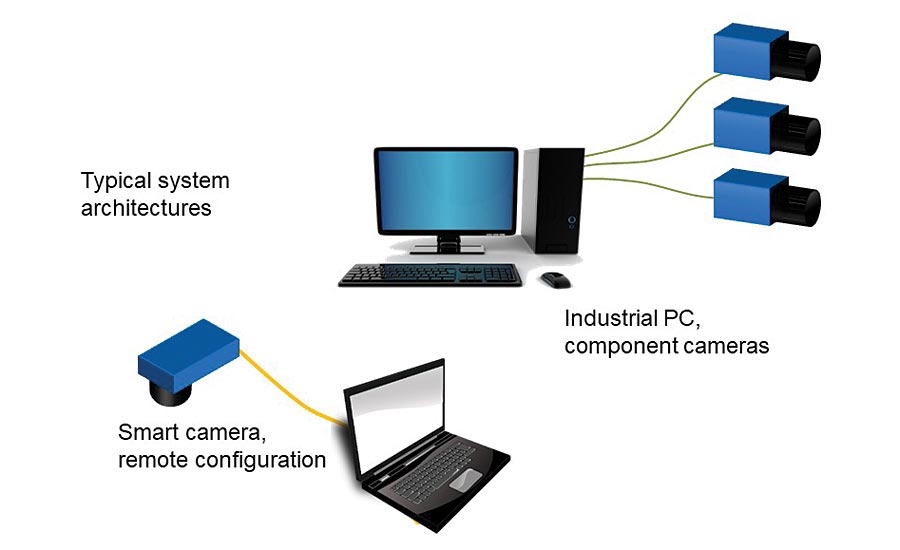

The diverse marketplace for machine vision technology features components and systems with widely varying architectures. While software is an essential part of any system, the “look and feel” of the software and the way it interacts with the components differ depending on the physical system architecture. Let’s start with a brief review of machine vision systems.

Many systems available for industrial machine vision feature a manufacturer-specific imaging device directly linked or tethered to a proprietary computing platform with a proprietary operating system. That might sound like a complex structure but what it really describes are the easy-to-use products commonly called “smart cameras” in the machine vision market. More simply put, smart cameras (and similar architectures) are completely packaged vision systems which include all imaging and computing devices needed to execute a machine vision task as a stand-alone component.

In contrast to smart cameras, other commonly implemented machine vision systems have a completely open architecture where a general-purpose imaging device (that is, a camera or sensor appropriate to the needs of the application) is linked to a standard computing platform running a commercial operating system. The interface from camera to computer most often uses one of several industry-standard image transfer physical protocols.

Configurable or programmable software?

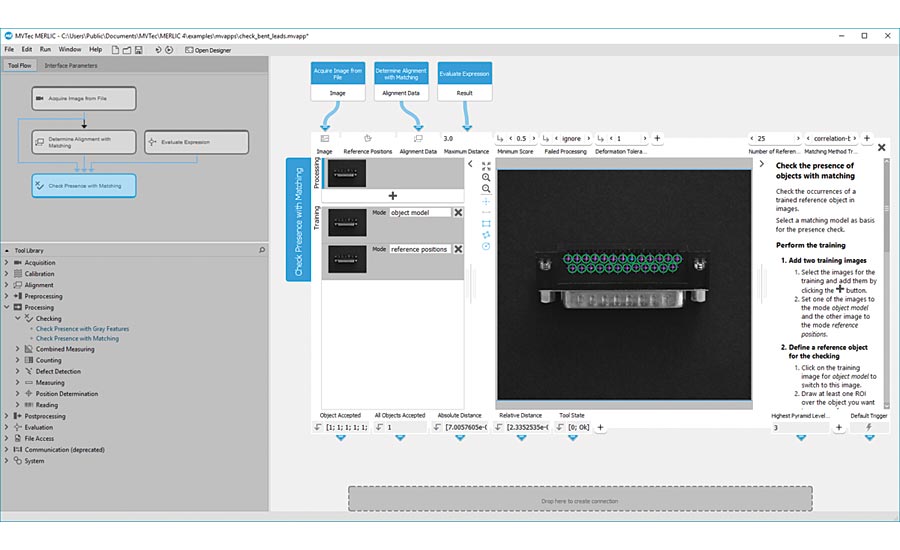

In terms of practical implementation, one construct for machine vision software can be described as an application that “configures” the system components and how they execute machine vision functions and tasks. These apps tend to have graphical user interfaces (GUI) devoted to “ease of use” with intuitive and graphically manipulated application configuration steps.

Smart cameras and systems using dedicated and/or proprietary physical configurations almost always feature software that has this fixed (though often quite thorough), configurable collection of tools that can be selected to operate in a constrained but usually user-definable sequence to execute a complete machine vision application. In the case of smart cameras, the configuration software usually runs on a computer external to the vision system. Other systems with proprietary computing platforms might have the entire graphical user interface built into the system, again with the software providing configuration of the application. Furthermore, “configurable” software applications are readily available for open system architectures running standard operating systems like Windows or Linux. Still, the function of the application is to provide a software platform in which the machine vision engineer can manipulate hardware and select/configure tools to perform an application.

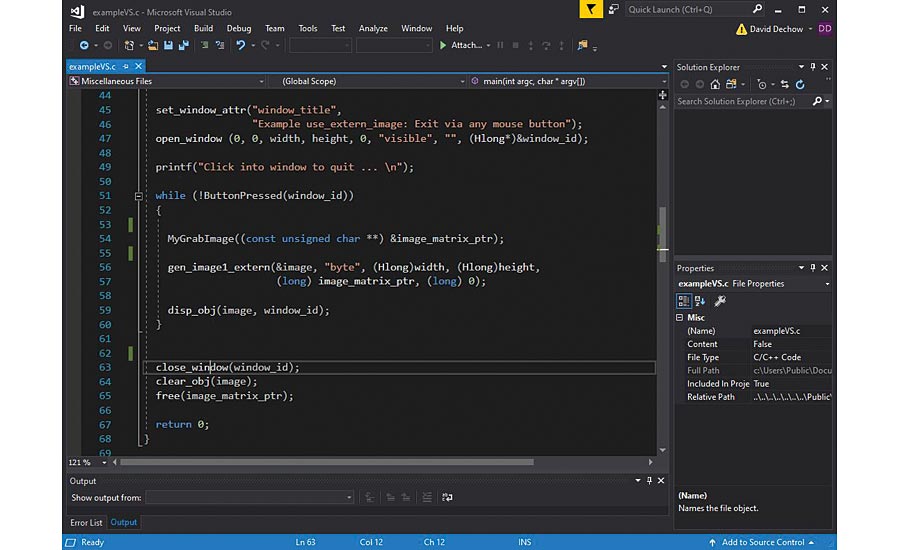

At the other end of the implementation spectrum is fully programmable software designed for open architecture machine vision systems. Typically targeting users with suitable experience in programming languages and frameworks such as C, C++, C#, and .NET, these software products might be called “software development kits” (SDKs) or “libraries.” They contain an extensive selection of low- and medium-level operators (“algorithms”) that, when properly combined, perform tasks ranging from very basic to extremely complex. In many cases, libraries designed specifically for machine vision application development also feature an integrated development environment (IDE) or even a configurable software application built on the underlying tools found in the library. These product extensions can make development much easier without sacrificing the full functionality of the individual tools or algorithms, and in some cases they offer a path to automatically migrate the configured application code or script to a lower-level programming language.

The role of software in machine vision systems

Typical machine vision applications in an automation setting range from inspection or defect detection to complex 3D metrology or robot guidance. In most implementations though, the fundamental tasks that must be supported in software are generally similar. To better describe the role of software in machine vision systems, let’s look at these tasks to learn what machine vision software does in each case and how it supports the system components.

Imaging and acquisition

The imaging device in a machine vision application is tied so intuitively to the machine vision task that the term “camera” is sometimes used to refer to an entire machine vision system (as in, “what kind of camera are you using for that inspection?”). Fundamentally however, all machine vision systems have at least one device – camera/sensor/detector – that is a discrete hardware component for image formation and manipulation. Virtually all these devices use dedicated electronics and firmware to manage the low-level sensing and image transfer, but for our discussion the important software functions are to access and control the parameters that configure how the device forms the image and provide methods to transfer and subsequently acquire the image on a computing platform.

In a smart camera or self-contained architecture, the software to manipulate imaging parameters most often is part of the overall software package. In these devices, the transfer of the image to the processor (acquisition) is a function of the firmware, with minimal configuration necessary.

In open architectures, the camera manufacturer might provide an SDK and/or a standalone application that interfaces with the imaging device for the purpose of sensor/camera control. Since there is more flexibility in the imaging components in this case, manufacturers of cameras and frame grabbers commonly also provide an SDK and/or device driver to support the image transfer/acquisition in the computer. A user-written program or a configurable application provides the interface with the system to perform image acquisition.
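
The division of labor described above can be sketched in a few lines of Python. This is only an illustration: the `Camera` interface, the `SimulatedCamera` class, and the `set_exposure_us` and `grab` method names are all hypothetical stand-ins, not the API of any real camera SDK or driver.

```python
from dataclasses import dataclass
from typing import List, Protocol

Image = List[List[int]]  # rows of 8-bit gray values


class Camera(Protocol):
    """Hypothetical minimal camera interface; real vendor SDKs differ."""

    def set_exposure_us(self, value: int) -> None: ...

    def grab(self) -> Image: ...


@dataclass
class SimulatedCamera:
    """Stand-in for a vendor driver so the sketch runs without hardware."""

    width: int = 4
    height: int = 3
    exposure_us: int = 1000

    def set_exposure_us(self, value: int) -> None:
        if value <= 0:
            raise ValueError("exposure must be positive")
        self.exposure_us = value

    def grab(self) -> Image:
        # Simulated brightness grows with exposure and saturates at 255.
        level = min(255, self.exposure_us // 100)
        return [[level] * self.width for _ in range(self.height)]


def acquire(camera: Camera, exposure_us: int) -> Image:
    """Configure the imaging device, then transfer one frame to the host."""
    camera.set_exposure_us(exposure_us)
    return camera.grab()
```

In a real system, the user-written program would call the manufacturer's SDK where this sketch calls the simulated device; keeping the application code behind a small interface like this is one way to limit how much changes if the camera is swapped.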

Processing and analysis

The very core of every machine vision application is the software that performs the actual processing and analysis of the image. At this point, specific software tools (“algorithms,” “operators,” etc., depending on the terminology used by the application or library vendor) are configured or programmed to perform specific analysis on the pixel-based data in the acquired image.

Whether programming at a low level or configuring an application, this development step is where the creative and sometimes difficult machine vision engineering takes place relative to the software component of the system. The developer selects and combines the tools necessary to execute the application. By way of further explanation, common tools found in most software implementations could be assigned to a few descriptive categories: preprocessing, blob analysis, edge analysis, search, matching, color analysis, classification, optical character recognition and verification (OCR/OCV), and bar or 2D code reading. In some cases, the tools might be categorized alternately by function: “inspection,” “measurement,” “reading,” etc. Overall, the tool selection varies widely by component or library, with some libraries offering literally hundreds of individual operators. In both configurable and programmable software packages, tools or operators must be combined in order to execute a complete machine vision application.
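
As a minimal sketch of what combining tools can look like in a programmable setting, the following Python chains a preprocessing step (a fixed threshold) with a blob analysis step (areas of 4-connected regions) and a pass/fail decision. The function names, the 4-connectivity choice, and the pass criterion are illustrative assumptions; commercial libraries provide far more capable and optimized versions of these operators.

```python
from collections import deque
from typing import List

Image = List[List[int]]  # rows of 8-bit gray values


def threshold(image: Image, level: int) -> List[List[bool]]:
    """Preprocessing: mark pixels at or above `level` as foreground."""
    return [[px >= level for px in row] for row in image]


def blob_areas(mask: List[List[bool]]) -> List[int]:
    """Blob analysis: pixel area of each 4-connected foreground region."""
    height = len(mask)
    width = len(mask[0]) if height else 0
    seen = [[False] * width for _ in range(height)]
    areas: List[int] = []
    for r in range(height):
        for c in range(width):
            if not mask[r][c] or seen[r][c]:
                continue
            # Breadth-first flood fill from an unvisited foreground pixel.
            queue = deque([(r, c)])
            seen[r][c] = True
            area = 0
            while queue:
                y, x = queue.popleft()
                area += 1
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    inside = 0 <= ny < height and 0 <= nx < width
                    if inside and mask[ny][nx] and not seen[ny][nx]:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            areas.append(area)
    return areas


def inspect(image: Image, level: int, max_defect_area: int) -> bool:
    """Pass when no bright blob exceeds the allowed area in pixels."""
    return all(a <= max_defect_area for a in blob_areas(threshold(image, level)))
```

A configurable application expresses the same idea graphically: the user picks a threshold tool, a blob tool, and a decision step, then sets their parameters instead of writing the functions.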

Communications and results

Ultimately the machine vision system must interface with the outside world. The ability to receive production information or recipes from the broader automation system, and to provide results and statistical data to the process is critical to both the success and value of the machine vision system. A configurable machine vision software package often provides various communication and result processing tools, and at design time it might be important to consider whether the interfaces available in the software are well-suited for a specific automation environment. In programmable systems, the task of communication and results processing might be external to the actual machine vision software and handled by other software or code.
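
In a programmable system, the results handling that sits outside the vision library might look something like the sketch below. The `InspectionResult` fields, the JSON message layout, and the yield calculation are hypothetical examples chosen for illustration; an actual automation environment dictates its own protocol and data format.

```python
import json
from dataclasses import asdict, dataclass
from typing import List, Sequence


@dataclass
class InspectionResult:
    """Hypothetical result record for one inspected part."""

    part_id: str
    recipe: str
    passed: bool
    measurements_mm: List[float]


def to_message(result: InspectionResult) -> str:
    """Serialize one result as a JSON string for the automation system."""
    return json.dumps(asdict(result), sort_keys=True)


def yield_percent(results: Sequence[InspectionResult]) -> float:
    """Simple statistic: percentage of parts that passed inspection."""
    if not results:
        return 0.0
    return 100.0 * sum(r.passed for r in results) / len(results)
```

The recipe name travels with each result here so that downstream statistics can be grouped by product type, which is one reason to settle the message contents at design time.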

Specifying machine vision software

Before any discussion of software specification, it is important to emphasize that design and specification of imaging components including sensors, lighting, optics and computing platform relative to the needs of the application is critical to the success of the entire system. There is no shortcut in software that will make up for incorrect image formation or lack of processing speed or power. The important overall takeaway is that both the hardware and the software must meet the needs of the application. In this brief overview of software specification, we’ll presume that the targeted system already is designed to acquire a very high-quality image with correct resolution and feature contrast.

As noted earlier, in machine vision systems with a proprietary architecture like “smart cameras,” the software is tied to the system in a complete package. The software is neither scalable nor changeable. The software challenge, then, is to ensure that the available tools in the package perform the required tasks for the target application. This is perhaps easier said than done. One recommendation that is always a good design step is to evaluate the component and software application with production sample parts to ensure performance.

The same is true for software libraries: evaluation and proof of concept are always best practice, and the tool offerings must still match the application. With open architectures, though, there is opportunity for scalability in both components and software, even to the extent of changing or complementing the selected library with additional software.

Where the process sometimes stalls or goes astray is when components and software are selected solely based on personal preference. One may prefer the GUI of a particular software package because it is familiar and has worked in the past. No argument that this may be an important consideration, but it should not override realistic evaluation of the capability of the system to successfully execute the required machine vision task.

Hot topics in machine vision software

“Hot topics” and “trends” are fun to read about and always create excitement. But unlike the latest car design, cell phone, or fashion statement, engineering is a disciplined undertaking where practical and dispassionate study and analysis of the available tools and solutions should overcome the hype of the moment.

The bottom line is that new machine vision software and components are being developed regularly. The best advice to consider is to look at new technology offerings not as an automatic solution to all projects but rather as tools that can be evaluated for a given application.

A few of the stand-out hot topics (there are plenty to choose from) in machine vision software for the beginning of this decade are 1) advanced spectral imaging using hyperspectral components; 2) computational (or “combinational”) imaging, where multiple images are combined to better highlight features not well seen with a single illumination structure; and 3) the use of artificial intelligence (AI) and/or deep learning in a variety of ways to provide greater ease of use in vision system configuration and/or the recognition of subjective changes in an image for feature or defect classification.
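
To make the computational imaging idea concrete, here is a deliberately simple Python sketch that combines several images of the same scene, each taken under a different illumination, into one image of the per-pixel brightness range. This particular combination rule and the function name `illumination_range` are assumptions chosen for illustration; it is not photometric stereo or any specific vendor's method, which are considerably more sophisticated.

```python
from typing import List, Sequence

Image = List[List[int]]  # rows of 8-bit gray values


def illumination_range(images: Sequence[Image]) -> Image:
    """Per-pixel (max - min) across images of the same scene.

    Pixels whose brightness changes with lighting direction, such as
    surface relief, come out bright; pixels that look the same under
    every light come out dark.
    """
    if not images:
        raise ValueError("at least one image is required")
    height = len(images[0])
    width = len(images[0][0]) if height else 0
    for img in images:
        if len(img) != height or any(len(row) != width for row in img):
            raise ValueError("all images must share the same size")
    return [
        [
            max(img[r][c] for img in images) - min(img[r][c] for img in images)
            for c in range(width)
        ]
        for r in range(height)
    ]
```

For example, a scratch that appears dark when lit from one side and bright when lit from the other produces a large range value, while the flat surface around it stays near zero.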

Research these and other new software technologies with the understanding that no single solution is suited for every application.

In conclusion, we hope this discussion has helped clarify what’s “under the hood” in machine vision software and will help make your machine vision applications successful and reliable now and in the future. V&S