What Every Machine-Builder Should Know About Component-Based 3D Vision Systems

Built-in robot integration gives smart sensors yet another cost and performance advantage over standard solutions that are not robot-friendly.

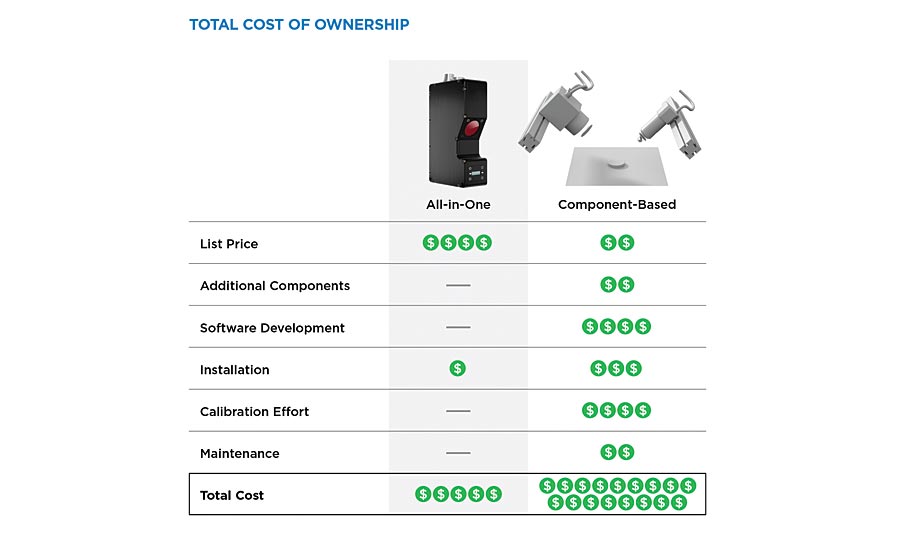

When it comes to building cost-effective 3D vision systems, is it better to use a component-based (i.e., camera, laser, lens, brackets, calibration targets) or all-in-one (i.e., smart) approach? At first glance, it might appear that purchasing individual components at lower cost and building a custom vision solution is the more economical path. However, as you dig deeper into how these systems actually work, you realize that hidden costs and time quickly start to add up.

The Reality: Custom Is Costly

The problem with comparisons between the component-based and smart vision approaches is they are often based solely on component price. In reality, component price is just one of several costs to consider when calculating the most important metric of all: total cost of ownership (TCO). TCO takes into account both the direct and indirect costs inherent in a given system and is therefore a more accurate reflection of that system’s true cost over time.

In component-based systems, numerous additional costs drive up TCO. These include accessories (cables, power supplies, external controllers, etc.), software development (firmware, 3D acquisition, 3D calibration, runtimes, measurement tools, user interfaces, communication protocols, third-party library integration, etc.), installation time, in-field calibration and recalibration, and the inventory and production equipment that must be held for long-term service and repair.
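To make the TCO arithmetic concrete, the cost categories above can be summed in a few lines. The following is only a minimal sketch: the class and field names are illustrative, and every dollar figure is a made-up placeholder rather than data from any vendor or from this article. The point is the structure of the calculation, not the specific totals.

```python
from dataclasses import dataclass, field


@dataclass
class SystemCosts:
    """Cost buckets for one vision system (all values are hypothetical)."""
    component_price: float                         # up-front hardware price
    one_time: dict = field(default_factory=dict)   # e.g. accessories, software, installation
    per_year: dict = field(default_factory=dict)   # e.g. recalibration, spares inventory

    def tco(self, years: int) -> float:
        """Total cost of ownership over a service life of `years`."""
        return (self.component_price
                + sum(self.one_time.values())
                + years * sum(self.per_year.values()))


# Placeholder numbers chosen only to illustrate the arithmetic.
component_based = SystemCosts(
    component_price=5_000,
    one_time={"accessories": 1_500, "software_development": 40_000, "installation": 3_000},
    per_year={"recalibration": 2_000, "spares_inventory": 1_000},
)
smart_sensor = SystemCosts(
    component_price=12_000,
    one_time={"installation": 1_000},
    per_year={"recalibration": 500},
)

for name, system in [("component-based", component_based), ("smart sensor", smart_sensor)]:
    print(f"{name}: {system.tco(years=5):,.0f}")
```

Swapping in real quotes and labor estimates for each bucket is what turns this from an illustration into an actual comparison.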

Because of their complexity and the number of devices involved, component-based systems require additional integration time and effort. Further costs arise when components become obsolete and force a redesign, or when specialized assembly and test jigs must be designed and built to achieve successful integration.

How to Make System Integration Easier

The component-based approach continues to impede widespread adoption of machine vision, as the effort required for integration and configuration drives cost higher. With a 3D smart sensor, however, building a robust inspection system adds only marginal costs beyond the initial sensor price. Smart sensors come equipped with much of what the customer needs, including fully integrated, easy-to-configure software, and allow an integrator or process engineer to focus on what they do best: building better automation solutions and improving product quality.

Basic cost comparison between the all-in-one and component-based approach to vision system design.

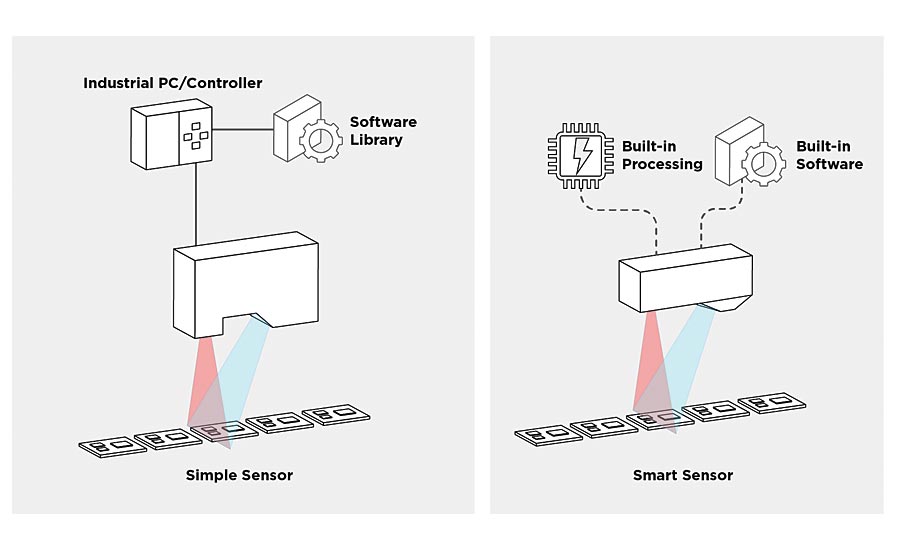

The tightly integrated hardware and software design in 3D smart sensors delivers better value than custom vision solutions. From a hardware perspective, smart sensors include a pattern projector (either lasers or DLP), specially aligned optics, a camera chip, and an embedded processor that converts images into 3D point clouds at a speed that keeps pace with the flow of parts in inline factory processes. On the software side, a smart sensor incorporates built-in firmware with a streaming design that performs real-time digitization, 3D model generation, measurement, pass/fail decision-making, and communication with external machinery at the shortest possible latency.

When using custom data acquisition approaches to build inspection solutions, an integrator must develop all the software. These systems are often built using an industrial PC, frame grabbers, I/O cards, and third-party libraries. Latency and potential data dropout caused by separating simple sensors from PC-based processing can compromise overall performance and reliability.

Making Multi-Sensor Layouts Simpler

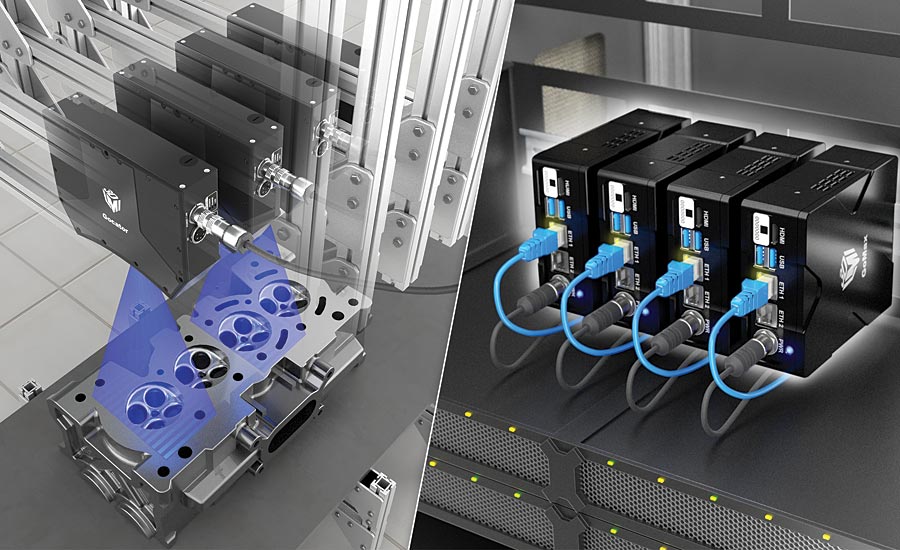

In applications where multi-sensor layouts are required to scan and stitch data before inspection can begin, component-based systems take on yet another level of risk and cost. Smart sensors, in comparison, are designed with built-in network synchronization and leverage distributed acceleration that readily handles scaling from a single sensor to processing data from tens of sensors.

Smart vision accelerators add massive GPU-driven processing power to a multi-sensor network in order to speed up cycle times and improve overall inspection performance.

Multi-sensor networking requires data concentration from multiple sensors, which often exceeds the computational power or data interface bandwidth of a single PC. Machine-builders can avoid the headache of designing strategies for this traditional PC-type processing by adding one or more smart vision accelerators to their multi-sensor system. Configurations such as 360° ring layouts or transverse web layouts are quickly and easily built out using a combination of smart sensors and smart accelerators. Component-based systems of this nature, by contrast, can easily eat up six months or more in custom software development time and require a rack of PCs and complicated synchronization methods.
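A rough back-of-the-envelope calculation shows why data concentration can become a bottleneck. The sketch below assumes hypothetical sensor parameters (points per profile, profile rate, bytes per point) and a hypothetical 1 Gb/s link into a single PC; none of these figures come from this article or from any particular product.

```python
def aggregate_rate_mbps(sensors: int, points_per_profile: int,
                        profiles_per_second: int, bytes_per_point: int) -> float:
    """Raw 3D data rate from all sensors combined, in megabits per second."""
    bytes_per_second = (sensors * points_per_profile
                        * profiles_per_second * bytes_per_point)
    return bytes_per_second * 8 / 1e6


LINK_CAPACITY_MBPS = 1_000  # assumed Gigabit Ethernet link into one PC

for n in (1, 4, 8, 16):
    rate = aggregate_rate_mbps(n, points_per_profile=1_280,
                               profiles_per_second=5_000, bytes_per_point=2)
    status = "exceeds" if rate > LINK_CAPACITY_MBPS else "fits within"
    print(f"{n:2d} sensors: {rate:8.1f} Mb/s ({status} the assumed link)")
```

The raw rate grows linearly with sensor count, so a layout that is comfortable with a few sensors can outgrow a single interface as more are added, before any stitching or measurement work is even counted.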

Built-In Robot Integration Means Lower TCO

3D smart sensors are highly cost-effective because they take numerous vision capabilities traditionally external to the sensor and integrate them onboard. Their smart design now also includes support for robot integration.

The ability to work closely with robots is more essential than ever in today’s factories. More and more manufacturers are streamlining their production lines by taking simple, repetitive tasks out of manual processes and assigning them to collaborative robots that perform the same tasks continuously, with greater precision and consistency. Since today’s industrial robots do not come standard-equipped with machine vision technology, they require third-party machine vision to visualize the scene, process information to make control decisions, and provide instruction for next steps. To provide these critical functions, manufacturers can now pair 3D smart sensors with their industrial robots to create a complete automation solution.

Smart 3D Robot Vision - How It Works

Machine-builders can easily mount a 3D smart sensor on a robot end-effector and use built-in hand-eye calibration to determine the transformation from sensor coordinates to robot coordinates. This allows the position and orientation of an object detected by the sensor to be communicated directly to the robot.
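The coordinate transformation described above can be illustrated with homogeneous transforms. This is a simplified sketch, not any sensor's built-in calibration routine: it assumes the sensor is mounted on the robot flange, restricts rotation to the Z axis to keep the matrices readable, and uses invented poses. A real hand-eye calibration yields a full 3D rotation and translation.

```python
import math


def make_transform(rz_deg, tx, ty, tz):
    """4x4 homogeneous transform: rotation about Z by rz_deg plus a translation."""
    c, s = math.cos(math.radians(rz_deg)), math.sin(math.radians(rz_deg))
    return [[c, -s, 0.0, tx],
            [s, c, 0.0, ty],
            [0.0, 0.0, 1.0, tz],
            [0.0, 0.0, 0.0, 1.0]]


def compose(a, b):
    """Matrix product a @ b for two 4x4 transforms."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]


def apply(t, point):
    """Map a 3D point through transform t."""
    v = (point[0], point[1], point[2], 1.0)
    return tuple(sum(t[i][k] * v[k] for k in range(4)) for i in range(3))


# Hypothetical values: the flange pose would come from the robot controller,
# the flange-to-sensor transform from the hand-eye calibration.
base_T_flange = make_transform(rz_deg=90.0, tx=500.0, ty=0.0, tz=300.0)
flange_T_sensor = make_transform(rz_deg=0.0, tx=0.0, ty=50.0, tz=100.0)

# Chain the two transforms to map sensor coordinates into the robot base frame.
base_T_sensor = compose(base_T_flange, flange_T_sensor)

part_in_sensor = (10.0, 20.0, 150.0)   # mm, as measured by the sensor
part_in_base = apply(base_T_sensor, part_in_sensor)
print([round(c, 3) for c in part_in_base])
```

Because the sensor moves with the end-effector, `base_T_flange` changes with every robot pose while `flange_T_sensor` stays fixed, which is why the calibration only needs to be done once per mounting.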

Simple data acquisition requires multiple external components (left), while smart devices provide these technologies onboard (right).

The sensor also provides the tools to locate parts and communicates these measurements as strings to a robot controller over a TCP/IP socket. Critical X-Y-Z and angle information is sent to the robot for use in vision guidance, inspection, and pick-and-place applications. Interfacing the sensor to the robot requires no additional external software or PC. Everything is onboard the sensor, working in a tightly integrated interplay between seeing, thinking, and doing. As a result, adding smart 3D vision to an industrial robot turns repetitive, fixed motion into smart movement and unlocks greater value for the machine-builder’s automation investment.
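As a rough illustration of string-based communication over a TCP/IP socket, the sketch below sends one pose as a line of ASCII text and parses it on the receiving end. The comma-separated message format, the function names, and the pose values are all assumptions made for this example; actual sensors and robot controllers define their own protocols.

```python
import socket
import threading


def format_pose(x, y, z, angle_deg):
    """Encode a part pose as one ASCII line (hypothetical message format)."""
    return f"{x:.3f},{y:.3f},{z:.3f},{angle_deg:.3f}\n"


def parse_pose(line):
    """Decode a line produced by format_pose into four floats."""
    x, y, z, angle_deg = (float(v) for v in line.strip().split(","))
    return x, y, z, angle_deg


def send_pose(host, port, pose):
    """Sensor side: open a TCP connection and send one pose string."""
    with socket.create_connection((host, port), timeout=5.0) as conn:
        conn.sendall(format_pose(*pose).encode("ascii"))


def receive_pose(server):
    """Robot-controller side: accept one connection and read one line."""
    conn, _ = server.accept()
    with conn, conn.makefile("r", encoding="ascii") as stream:
        return parse_pose(stream.readline())


# Loopback demonstration: both ends run in this process.
server = socket.create_server(("127.0.0.1", 0))   # port 0 = any free port
port = server.getsockname()[1]

received = []
listener = threading.Thread(target=lambda: received.append(receive_pose(server)))
listener.start()
send_pose("127.0.0.1", port, (430.0, 10.0, 550.0, 12.5))
listener.join(timeout=5.0)
server.close()

print(received[0])
```

Plain text lines are easy to log and to parse on most robot controllers, which is one reason string messages are a common choice for this kind of link.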

Conclusion

Today’s component-based approach to machine building, while initially attractive, is more costly in the long run and doesn’t scale. The smart 3D approach offers machine-builders faster time to market with less effort and lower total cost of ownership. Key automation functions and capabilities are included onboard the smart sensor (including onboard data processing, synchronization, and built-in support for multi-sensor setups and robot integration), minimizing system complexity and cost and freeing up more time and resources to build better 3D vision systems. V&S
