Like all computer technology, image analysis software for automated vision systems has improved exponentially in speed, power and affordability since its advent in the 1970s, when the first algorithms simply counted pixels. Today’s image analysis software can read embossed metal lettering by seeing the characters’ shadows and turning them into height information, or learn what a good part or bad part is simply by being shown several examples.

Perhaps most importantly for system integrators, many of these tasks can be accomplished without writing new computer code, thanks to pre-written algorithms packaged into complex tools.

“There are a lot of examples of codeless programming,” says Automated Vision Systems President Perry West. “You can drag and drop into a flow chart or diagram various algorithms or tools you want to combine. And you can usually adjust those tools and certain parameters you want to change.

“Codeless programming is really appealing to end users who don’t want to develop and maintain the expertise to do the coding and software development. And it appeals to many system integrators who want to turn the project around as quickly as possible.”

Brief History

“The very first or bread-and-butter vision tool was probably what we call the area tool, or the pixel counting tool,” explains Doug Kurzynski, project manager for machine vision technology at Keyence Corp. of America. “That’s where everything began, just simply counting pixels.”

The initial pixel counting tool worked by counting the number of pixels in a monochrome image that fell within a certain range of lightness or darkness set by the programmer. In an 8-bit image, each pixel has a greyscale value between 0 and 255, with the highest number being white and the lowest being black. The programmer would set a range, perhaps between 100 and 200, and the computer would count all the pixels falling within it.
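To make the idea concrete, here is a minimal sketch of a pixel counting tool in plain Python. The function name, the tiny 4x4 image and the presence threshold of five pixels are all made up for illustration; a commercial area tool would work on a full camera frame inside a region of interest.

```python
def count_pixels_in_range(image, low, high):
    """Count pixels whose greyscale value lies in [low, high], inclusive."""
    return sum(1 for row in image for value in row if low <= value <= high)


# Hypothetical 4x4 greyscale image (0 = black, 255 = white).
image = [
    [10,  20, 150, 160],
    [15, 120, 180, 250],
    [0,  100, 200, 255],
    [5,   99, 201, 130],
]

# The range from the text: count everything between 100 and 200.
in_range = count_pixels_in_range(image, 100, 200)

# A simple presence/absence decision: enough pixels in range means "present".
# The threshold of 5 is an arbitrary value chosen for this example.
part_present = in_range >= 5
print(in_range, part_present)
```

Because the decision rests on a fixed range, a shift in lighting moves pixels in or out of that range and changes the count, which is the limitation described in the next paragraph.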

“That tool still exists to this day, but one of the limitations was it’s a binary-based tool,” Kurzynski continues. “So if the lighting varies or if there’s uneven brightness, that can cause trouble. But it’s a great tool for just presence/absence.

“That evolved into color when color cameras began to become popular as well. It’s the same kind of tool, but you’d set a color range, and then everything outside of the color range was off. And it just counted all the pixels in the color range.”

Subsequent Tools

Engineers soon developed what’s known as the blob tool, still commonly used in today’s automated vision systems. Instead of just counting pixels, it counts groupings of pixels, or blobs.

“So say you had pills or something you were counting,” Kurzynski says. “An area tool would just give you one, over-all count of pixels, whereas blob tools will give you counts of individual groups. It will tell you how many pills are there. Each blob can be analyzed further. The number of pixels for each blob can be analyzed for roundness and all this other stuff.”

In addition to roundness factor, modern blob tools can calculate ratios, perimeters and a host of other data.
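The difference between an area tool and a blob tool can be sketched in a few lines of Python. This is only an illustration under simple assumptions: the image is already binary (1 = object, 0 = background), pixels are grouped with 4-connectivity, and the function name `find_blobs` and the sample image are invented for the example. Commercial blob tools report many more features than the area and bounding box shown here.

```python
from collections import deque


def find_blobs(binary):
    """Group 4-connected foreground (1) pixels and return per-blob statistics."""
    rows, cols = len(binary), len(binary[0])
    seen = [[False] * cols for _ in range(rows)]
    blobs = []
    for r in range(rows):
        for c in range(cols):
            if binary[r][c] != 1 or seen[r][c]:
                continue
            # Breadth-first flood fill starting from an unvisited object pixel.
            queue = deque([(r, c)])
            seen[r][c] = True
            pixels = []
            while queue:
                y, x = queue.popleft()
                pixels.append((y, x))
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    inside = 0 <= ny < rows and 0 <= nx < cols
                    if inside and binary[ny][nx] == 1 and not seen[ny][nx]:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            ys = [p[0] for p in pixels]
            xs = [p[1] for p in pixels]
            blobs.append({
                "area": len(pixels),                # pixel count of this blob
                "height": max(ys) - min(ys) + 1,    # bounding box height
                "width": max(xs) - min(xs) + 1,     # bounding box width
            })
    return blobs


# Hypothetical binary image containing three separate objects.
binary = [
    [1, 1, 0, 0, 0, 0],
    [1, 1, 0, 0, 1, 0],
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 0, 1, 0],
    [1, 0, 0, 0, 0, 0],
]

blobs = find_blobs(binary)
total_area = sum(b["area"] for b in blobs)   # what an area tool would report
print(len(blobs), total_area, [b["area"] for b in blobs])
```

An area tool would report only the total of 10 object pixels, while the blob analysis separates them into three objects whose sizes can be checked one by one.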

Soon, blob tools were joined by edge tools, pattern matching tools and then later, geometric pattern tools, among others.

Edge tools are commonly used for measurement, such as checking a spark plug’s gap, and are capable of sub-pixel processing down to the thousandth of a pixel.

Depending on the application, though, blob tools are also often used for measurement.
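One simple way to get sub-pixel positions, shown here only as a sketch, is to interpolate linearly between the two pixels on either side of a brightness threshold. The intensity profile, the threshold of 100 and the function name are assumptions for the example; commercial edge tools use their own, more refined methods, and this sketch makes no claim to thousandth-of-a-pixel accuracy.

```python
def subpixel_edges(profile, threshold):
    """Return fractional positions where a 1-D intensity profile crosses threshold."""
    edges = []
    for i in range(len(profile) - 1):
        a, b = profile[i], profile[i + 1]
        # A crossing occurs when neighbouring samples lie on opposite sides.
        if (a < threshold) != (b < threshold):
            # Linear interpolation between sample i and sample i + 1.
            edges.append(i + (threshold - a) / (b - a))
    return edges


# Hypothetical scan line across a gap: bright metal, dark gap, bright metal.
profile = [200, 200, 150, 50, 20, 20, 20, 80, 180, 200]

edges = subpixel_edges(profile, 100)
gap_in_pixels = edges[1] - edges[0]   # roughly 4.7 pixels for this profile
print(edges, gap_in_pixels)
```

Converting the result to millimetres would require a calibration factor for the camera and lens, which is outside this sketch.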

“If I want to measure the diameter of a hole, it’s probably easier to use a blob tool to [measure] the area, and I can easily compute the diameter from the area,” West adds.
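The calculation West describes follows from the area of a circle, A = π(d/2)², which rearranges to d = 2√(A/π). A short Python sketch, with a made-up pixel count standing in for the blob tool’s output:

```python
import math


def diameter_from_area(area_pixels):
    """Diameter of a circle with the given area: d = 2 * sqrt(A / pi)."""
    return 2.0 * math.sqrt(area_pixels / math.pi)


# Hypothetical blob area, in pixels, reported for a round hole.
hole_area = 7854
diameter = diameter_from_area(hole_area)   # close to 100 pixels
print(round(diameter, 2))
```

The result assumes the hole really is circular; for an out-of-round hole it gives the diameter of a circle with the same area.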

Many of the early tools are still used, even as more advanced operations have become possible.

“Generally, once a tool has been found to work well, even if in a narrow context, there are others who will have a variation of that same problem for which the tool can be applied or adapted,” says Scott Smith, director of technical services at Allied Vision Technologies Group. “The toolbox has been expanding over 25 years or more. While one may not reach for any given tool frequently, it’s good to have a comprehensive toolset from which to pick the right tool for the job. Consider the typical homeowner’s toolkit: most of us own an electric drill with screwdriver bits to create a power-driver; for hanging sheetrock or building a deck that’s the right tool. Yet we still reach for the handheld traditional screwdriver on occasion.”

Pre-processing

Many tools are used for more than one function and in different orders of operation depending on the task at hand. In one example, an edge tool might be used to determine the orientation of an object based on the angle of its edge, before a blob tool is used for measurement. In another, a pattern tool could be used for orientation, before the object is measured by an edge tool.

For those reasons, the terms “pre-processing,” “image analysis,” and “image processing” are often used differently from one engineer or company to another when describing segments of the process, West notes.

Regardless of which term is being used, even with optimal lighting and excellent cameras and lenses, images will typically need enhancing before they’re ready for measurement, flaw detection or other inspections.

As mentioned, pattern tools are often used to locate and orient a part before, for example, an edge tool is used to measure it. Oftentimes this means the image is first passed through filters that increase the contrast between the light and dark pixels.

“A lot of times when someone is doing an inspection, the part isn’t fixtured nice,” Kurzynski says. “Typically used for that is some sort of pattern search, to locate the pattern, or the part, first, and then use all the tools to where that pattern is found.”
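A pattern search of this kind can be illustrated with a very basic template match: slide a small reference pattern over the image and keep the position where the pixel differences are smallest. The sketch below uses the sum of absolute differences as its score, which is an assumption made for simplicity; it handles only translation, not rotation or scale, and is not a description of any vendor’s pattern tool. The function name and the sample data are invented.

```python
def locate_pattern(image, template):
    """Return ((row, col), score) of the best match by sum of absolute differences."""
    ih, iw = len(image), len(image[0])
    th, tw = len(template), len(template[0])
    best_pos, best_score = None, None
    for r in range(ih - th + 1):
        for c in range(iw - tw + 1):
            # Lower score means the window looks more like the template.
            score = sum(abs(image[r + dr][c + dc] - template[dr][dc])
                        for dr in range(th) for dc in range(tw))
            if best_score is None or score < best_score:
                best_pos, best_score = (r, c), score
    return best_pos, best_score


# Hypothetical 2x2 reference pattern taught from a known good part.
template = [
    [200,  50],
    [50,  200],
]

# Hypothetical image in which the part has shifted and is slightly noisy.
image = [
    [10, 10,  10,  10, 10],
    [10, 10, 205,  48, 10],
    [10, 10,  52, 198, 10],
    [10, 10,  10,  10, 10],
]

position, score = locate_pattern(image, template)
print(position, score)
```

Once the offset is known, the measurement windows for the other tools can be shifted by the same amount so they follow the part.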

Some segmentation of the image is also required, so the tools know which part of the image to analyze.

Other popular pre-processing tools are known as mathematical morphology.

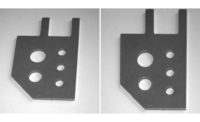

“Basically, it’s a pre-processing technique based on manipulating an object’s shape,” West explains. “The principal elements are erosion and dilation. Erosion kind of peels off the outer layer of pixels of an image. Dilation adds a layer to the object or image. And then you can combine these in various ways to do some very useful things.

“If I have small artifacts in the image that I’m not interested in, the pair of erosion followed by dilation is called an opening operation. It will get rid of small artifacts. If I do the opposite — a dilation followed by an erosion — that’s called a closing operation. If I have a part and it has some noise, and apparently some holes in it I want to get rid of, I close in those holes.”
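The four operations West describes can be sketched on a binary image in plain Python. The sketch assumes a 3x3 square neighbourhood and treats everything beyond the image border as background; both are choices made for this example, and the function names and test images are invented.

```python
def _neighbourhood(image, r, c):
    """Yield the 3x3 neighbourhood of (r, c); pixels off the image count as 0."""
    rows, cols = len(image), len(image[0])
    for dr in (-1, 0, 1):
        for dc in (-1, 0, 1):
            y, x = r + dr, c + dc
            yield image[y][x] if 0 <= y < rows and 0 <= x < cols else 0


def erode(image):
    """Keep a pixel only if its whole neighbourhood is object: peels a layer off."""
    return [[1 if all(_neighbourhood(image, r, c)) else 0
             for c in range(len(image[0]))] for r in range(len(image))]


def dilate(image):
    """Set a pixel if any neighbour is object: adds a layer."""
    return [[1 if any(_neighbourhood(image, r, c)) else 0
             for c in range(len(image[0]))] for r in range(len(image))]


def opening(image):
    """Erosion followed by dilation: removes small artifacts."""
    return dilate(erode(image))


def closing(image):
    """Dilation followed by erosion: fills small holes."""
    return erode(dilate(image))


# A 3x3 part with a one-pixel speck of noise beside it.
speckled = [
    [0, 0, 0, 0, 0, 0, 0],
    [0, 1, 1, 1, 0, 0, 0],
    [0, 1, 1, 1, 0, 1, 0],
    [0, 1, 1, 1, 0, 0, 0],
    [0, 0, 0, 0, 0, 0, 0],
]

# A 3x3 part with a one-pixel hole in the middle.
holed = [
    [0, 0, 0, 0, 0],
    [0, 1, 1, 1, 0],
    [0, 1, 0, 1, 0],
    [0, 1, 1, 1, 0],
    [0, 0, 0, 0, 0],
]

opened = opening(speckled)   # speck removed, part unchanged
closed = closing(holed)      # hole filled, outline unchanged
for row in opened:
    print(row)
for row in closed:
    print(row)
```

In both cases the part keeps its original 3x3 outline, since the second operation restores the layer that the first one removed or added.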

What’s Next

“The holy grail of machine vision has been machine learning,” West says. “Machine learning can be subdivided into two categories: supervised learning and unsupervised learning. In supervised learning, we sit there and we teach the unit ‘OK this image is good or that image is bad.’ And we keep doing it until it gets it right. But in unsupervised learning we leave it alone and it figures all of it out.

“I think we’re a long ways away from unsupervised learning, but supervised learning people are working on it, and yeah, there’s progress.”

So while unsupervised learning may be further on the horizon, some supervised learning products have made it to market, such as Keyence’s Auto Teach tool.

“Maybe someone hasn’t used vision before, has no idea what an area tool is, or what a blob tool is, but they know what a good part is. You run a good batch of parts through a system and it’s learning. It picks up all the image data you’re giving it. It creates a full color tolerance for each pixel, what a good range is based off the statistical data. And when you’re running this tool, any pixel outside of this range, it detects that it’s bad and then you can flag that part.”
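The general idea of learning a per-pixel tolerance from good parts can be sketched as follows. This is not Keyence’s algorithm, which the article does not detail; it is a simplified illustration that assumes greyscale rather than full color images, perfectly aligned parts, and a tolerance of the mean plus or minus three standard deviations with a minimum margin of five grey levels. Those values, the function names and the sample images are all assumptions.

```python
from statistics import mean, pstdev


def learn_tolerances(good_images, k=3.0, min_margin=5.0):
    """Build a (low, high) acceptance range per pixel from known good images."""
    rows, cols = len(good_images[0]), len(good_images[0][0])
    tolerances = []
    for r in range(rows):
        row = []
        for c in range(cols):
            samples = [img[r][c] for img in good_images]
            centre = mean(samples)
            # Never let the range collapse when the good samples barely vary.
            margin = max(k * pstdev(samples), min_margin)
            row.append((centre - margin, centre + margin))
        tolerances.append(row)
    return tolerances


def defective_pixels(image, tolerances):
    """List (row, col) of every pixel that falls outside its learned range."""
    return [(r, c)
            for r, row in enumerate(image)
            for c, value in enumerate(row)
            if not (tolerances[r][c][0] <= value <= tolerances[r][c][1])]


# Three hypothetical 2x2 images of good parts.
good_images = [
    [[100, 102], [200, 198]],
    [[101,  99], [201, 202]],
    [[99,  101], [199, 200]],
]
tolerances = learn_tolerances(good_images)

good_part = [[100, 100], [200, 200]]
bad_part = [[100, 100], [200, 120]]   # one pixel is far too dark

print(defective_pixels(good_part, tolerances))
print(defective_pixels(bad_part, tolerances))
```

A part would then be flagged when the list of out-of-range pixels is not empty, or when it exceeds some allowed count chosen by the user.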

Once software takes the next step to unsupervised learning, the opportunities will reach well beyond industrial machine vision automation.

“Facial recognition and self-driving cars, certainly, are going to push the envelope in terms of that,” West adds. “It’s just too hard to sit down and say ‘I’m going to write the code to anticipate every single situation out there on the road.’ In flaw detection, it would be great to say ‘here’s an image, and I don’t want to tell you how to find a flaw but I’ll tell you what’s there,’ and have the vision system figure out how to know which algorithms to use and what features to extract and how to make sense out of all of it.”