Vision & Sensors | Camera Interfaces

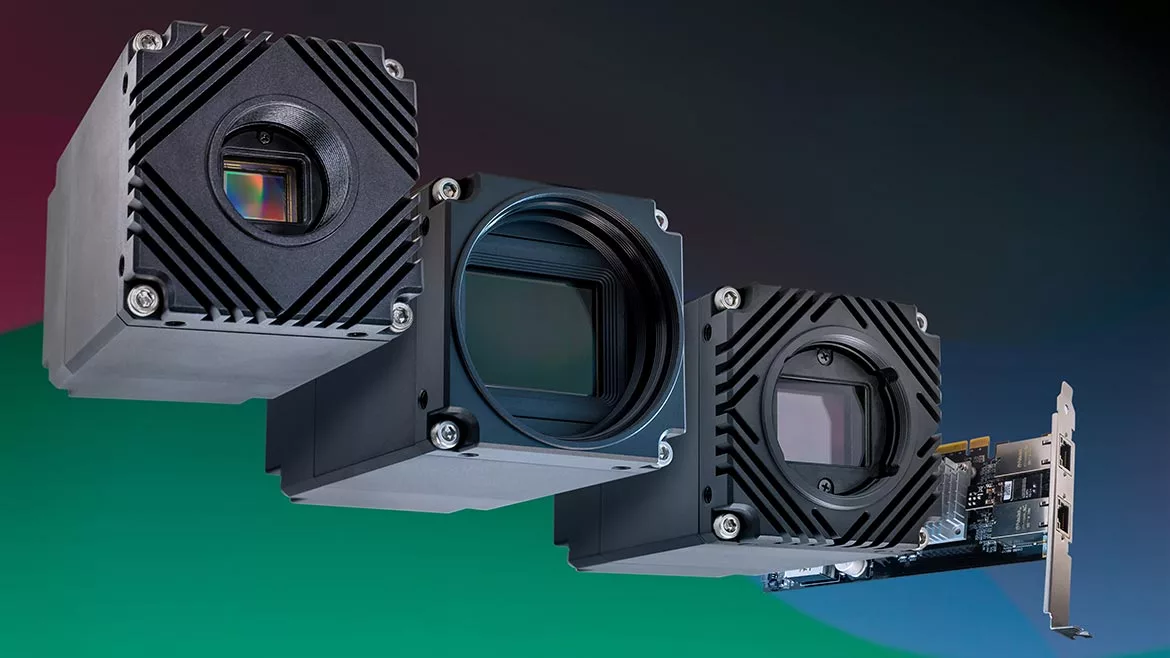

RDMA with 10GigE Cameras

High-speed, Maximum Reliability

All Images Source: LUCID Vision Labs

High-speed data transmission keeps evolving as new technologies emerge to meet the growing demands of bandwidth-intensive applications. The User Datagram Protocol (UDP) has been the preferred streaming protocol for GigE Vision cameras because of its simplicity and efficient streaming performance, but it has limitations in flow control and packet retransmission on higher-bandwidth Ethernet cameras such as 10GigE and 25GigE models. Remote Direct Memory Access (RDMA) is a viable alternative for high-bandwidth, multi-camera applications, offering a more robust and efficient data transmission method. RDMA bypasses the CPU and operating system to write image data directly into the host PC’s memory, which makes it well suited to managing large amounts of data in modern high-bandwidth Ethernet camera applications.

Removing the CPU Bottleneck

The current implementation of UDP for GigE Vision was designed for 1 GigE bandwidth, and the reliability features missing from UDP are built into the GigE Vision standard at the application layer. This means the OS and CPU must monitor the data stream for missing packets and interrupt the camera to request retransmission of any that are lost. At 1 GigE speeds, the host PC resources needed to monitor and resend dropped packets are readily available. For higher-bandwidth cameras, however, RDMA offers a better solution by moving data between devices on a network without any per-packet CPU involvement. With RDMA, network adapters write data directly into the host PC’s main memory, bypassing the operating system entirely and reducing CPU overhead.
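To make the per-packet bookkeeping concrete, here is a minimal Python sketch of the kind of gap detection a host has to perform in software when reliability lives at the application layer. It is an illustration only: the function name `find_missing_ranges`, the 1-based packet IDs, and the idea of reporting gaps as inclusive ranges are assumptions made for this example, not the GigE Vision wire format or any vendor's implementation.

```python
from typing import Iterable, List, Tuple


def find_missing_ranges(received_ids: Iterable[int], last_id: int) -> List[Tuple[int, int]]:
    """Return inclusive (first, last) ranges of packet IDs that never arrived.

    Packet IDs are assumed to run from 1 to last_id within one image block.
    """
    seen = set(received_ids)
    ranges: List[Tuple[int, int]] = []
    start = None
    for pid in range(1, last_id + 1):
        if pid not in seen:
            if start is None:
                start = pid                      # a new gap opens here
        elif start is not None:
            ranges.append((start, pid - 1))      # the gap closed at the previous ID
            start = None
    if start is not None:
        ranges.append((start, last_id))          # the gap runs to the end of the block
    return ranges


# Packets 3, 4 and 8 of a 10-packet block were dropped; 6 arrived out of order.
arrived = [1, 2, 6, 5, 7, 9, 10]
resend_requests = find_missing_ranges(arrived, last_id=10)
print(resend_requests)
```

Every packet of every image passes through a check like this on the host CPU, which is manageable at 1 GigE rates but grows with the packet rate of faster links.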

RDMA was first implemented over InfiniBand, which rapidly gained popularity in high-performance computing applications. RDMA over Converged Ethernet (RoCE) v1 was launched in 2010 as an open standard supported by the InfiniBand Trade Association, so that RDMA could run without dedicated InfiniBand switches. RoCE v2 was released in 2014 to provide routing across Layer 3 (IP) networks and flow control capabilities. Running RDMA over Ethernet brings several benefits: fast throughput and low latency at all Ethernet speeds, complete hardware offload of packet handling with no CPU involvement, a comprehensive ecosystem of industrial connectivity solutions, long cable lengths with Power over Ethernet (PoE), and interoperability with various software and hardware vendors.

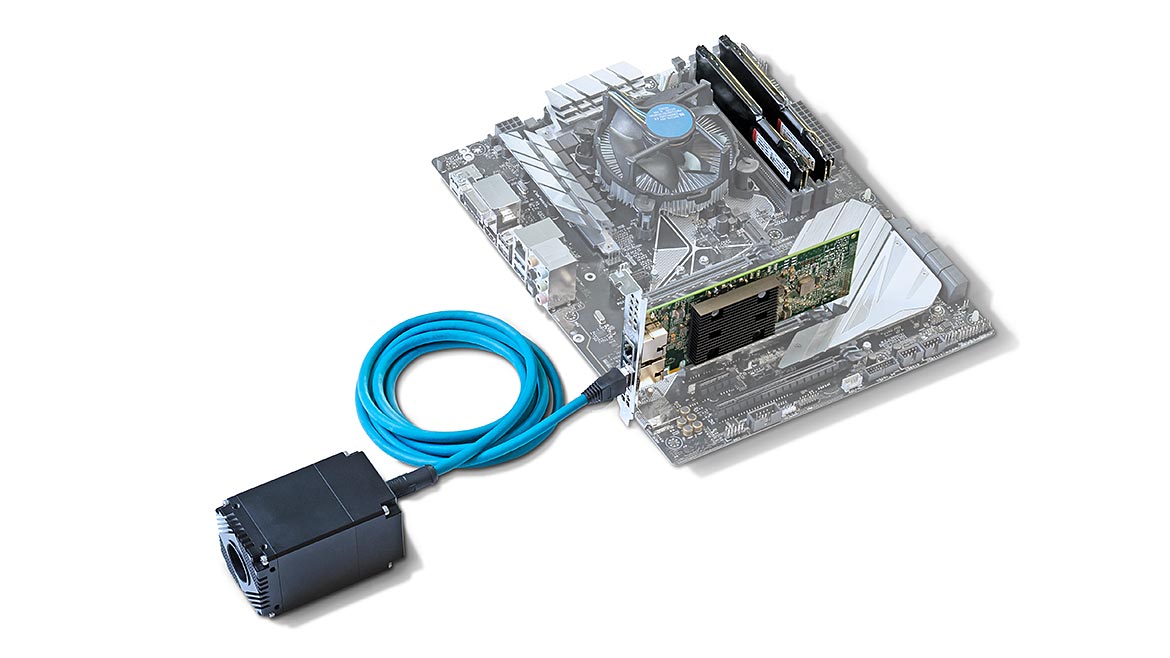

The Host Channel Adapter (HCA) manages RoCE operations, implementing in hardware all the logic needed to execute the RDMA protocol. Data segmentation and reassembly, as well as flow control, are managed by the HCA, allowing the sender and receiver applications to work with whole buffers. The RDMA channel is initiated by “pinning” host PC memory: a memory region is reserved on the host for RDMA use, the necessary protections are applied, and the host then passes the address to the HCA and removes itself from the data path. This registered memory region can now be used for any RDMA operation.

A Quality Special Section

RoCE RDMA transactions use three queues. The send and receive queues handle all data transactions and are always created together as a queue pair (QP). The completion queue (CQ) tracks the completion of the work scheduled on the QP. The QPs enable application-level flow control by notifying the sender of the buffers available for RDMA transfer on the receiver’s end. RDMA devices are programmed using RDMA verbs, which are low-level building blocks for RDMA applications accessed through API libraries such as the libibverbs API for Linux and the Network Direct SPI for Microsoft Windows. There are two types of verbs: one-sided and two-sided. One-sided verbs allow remote devices to bypass the CPU/OS when sending data, while two-sided verbs act more like traditional sockets that use the CPU/OS. We use two-sided verbs, which still remove several sources of CPU overhead compared to conventional Ethernet data transfers. Two-sided verbs are necessary for requeuing transfers and polling the CQ, both of which take up negligible CPU resources.
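The interplay of posted receive buffers and the completion queue can be modelled in a few lines of Python. This is a toy simulation, not a verbs API: the class `ToyReceiveSide`, its methods, and the `adapter_deliver` stand-in for the HCA are all hypothetical names chosen for this sketch, and real applications would use a library such as libibverbs instead.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Deque, Optional, Tuple


@dataclass
class Completion:
    wr_id: int       # identifies which posted buffer was filled
    byte_len: int    # how many bytes were written into it


@dataclass
class ToyReceiveSide:
    """Toy model of a receive queue plus completion queue (not a real verbs API)."""
    recv_queue: Deque[Tuple[int, bytearray]] = field(default_factory=deque)
    completion_queue: Deque[Completion] = field(default_factory=deque)

    def post_recv(self, wr_id: int, buffer: bytearray) -> None:
        # The application hands an empty buffer to the adapter.
        self.recv_queue.append((wr_id, buffer))

    def adapter_deliver(self, payload: bytes) -> bool:
        # Stands in for the HCA: fill the oldest posted buffer, then report completion.
        if not self.recv_queue:
            return False  # no buffer posted, so the sender has to wait
        wr_id, buffer = self.recv_queue.popleft()
        if len(payload) > len(buffer):
            raise ValueError("payload does not fit the posted buffer")
        buffer[: len(payload)] = payload
        self.completion_queue.append(Completion(wr_id, len(payload)))
        return True

    def poll_cq(self) -> Optional[Completion]:
        # The application touches the CQ once per whole buffer, not once per packet.
        return self.completion_queue.popleft() if self.completion_queue else None


rx = ToyReceiveSide()
buffers = {i: bytearray(8) for i in range(2)}
for wr_id, buf in buffers.items():
    rx.post_recv(wr_id, buf)

rx.adapter_deliver(b"frame-00")
done = rx.poll_cq()
print(done.wr_id, bytes(buffers[done.wr_id]))
rx.post_recv(done.wr_id, buffers[done.wr_id])  # requeue the buffer for the next image
```

The application's only recurring work in this model is polling the CQ and requeuing buffers, which mirrors the two tasks the text attributes to two-sided verbs.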

To implement RDMA over GigE Vision, the GigE Vision Stream Protocol (GVSP) needs to be adapted by establishing an RDMA stream channel using a reliable connection (RC), similar to a TCP connection. GVSP using RDMA does not require GVSP headers for the image payload transfer, and packet resends are handled by the RoCE v2 HCA. Flow control is implemented by the camera retaining sent packets until the receiver acknowledges their reception (ACK), and multiple packets can be acknowledged at once. This reduces overhead and frees up CPU resources, as all flow control and packet decoding is handled by the HCA running on dedicated hardware.
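The retain-until-acknowledged behaviour described above can be sketched in Python as follows. In a RoCE v2 system this logic lives in the HCA hardware, so the code is only a software model of the idea; the class `RetainingSender`, its method names, and the zero-based sequence numbers are assumptions introduced for this example.

```python
from collections import OrderedDict
from typing import List


class RetainingSender:
    """Toy sender that keeps each packet until the receiver acknowledges it."""

    def __init__(self) -> None:
        self.next_seq = 0
        self.unacked: "OrderedDict[int, bytes]" = OrderedDict()

    def send(self, payload: bytes) -> int:
        seq = self.next_seq
        self.unacked[seq] = payload    # retain a copy in case a resend is needed
        self.next_seq += 1
        return seq

    def on_ack(self, acked_seq: int) -> int:
        """Cumulative ACK: release every retained packet with seq <= acked_seq."""
        released = [s for s in self.unacked if s <= acked_seq]
        for s in released:
            del self.unacked[s]
        return len(released)

    def packets_to_resend(self) -> List[int]:
        # Anything still retained is a candidate for retransmission.
        return list(self.unacked)


sender = RetainingSender()
for chunk in (b"a", b"b", b"c", b"d"):
    sender.send(chunk)

freed = sender.on_ack(2)    # one ACK covers packets 0, 1 and 2
print(freed, sender.packets_to_resend())
```

Acknowledging several packets with a single ACK is what keeps the acknowledgment traffic small relative to the image data.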

The Future is Fast

By taking RoCE v2, an open-standard network technology initially developed for high-performance computing, and adapting it to the GigE Vision standard, we are able to overcome the challenges UDP poses in achieving high bandwidth over 10GigE. As a result, the CPU load generated by image acquisition is sharply reduced, while image delivery latency is minimized and data throughput is maximized. Our own testing shows that streaming four Atlas10 10GigE cameras simultaneously results in a CPU utilization of only 0.08% with RDMA, compared to 5.38% with conventional GigE Vision UDP streaming, a roughly 67-fold reduction. Thanks to RDMA, users who choose Ethernet-based cameras for their high-bandwidth applications will benefit from faster and more dependable data transmission, further reinforcing Ethernet as the preferred industrial interface for machine vision applications.
