A Closer Look at 3D Imaging

Capturing the third dimension can be done in many different ways, and each of the machine vision technologies available has its pros and cons.

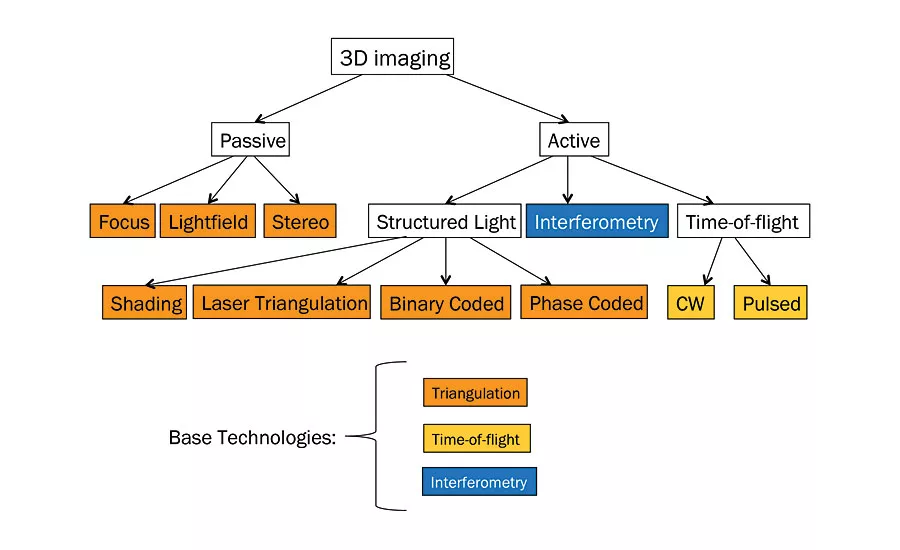

Chart 1: This map of 3D technologies illustrates the different types of 3D imaging by whether they are active or passive, and whether they use triangulation, time-of-flight, or interferometry. Source: SICK

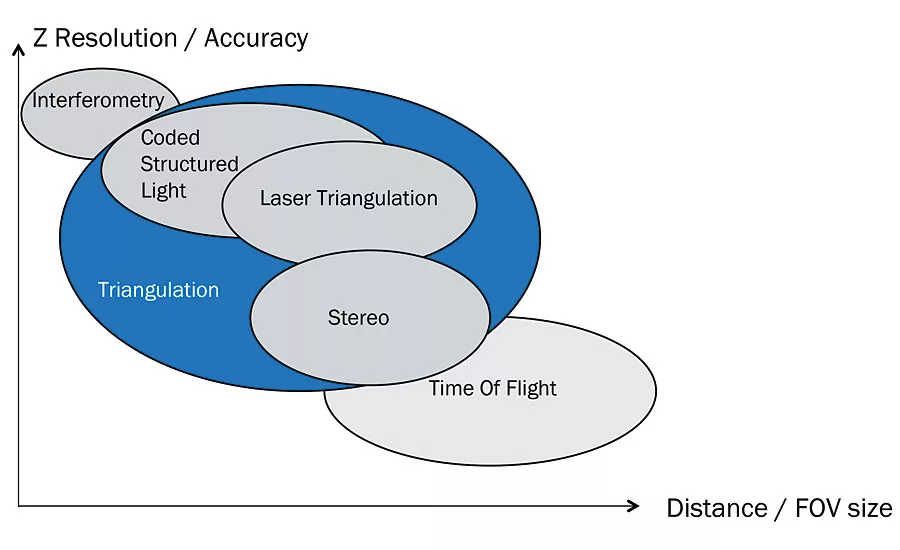

Chart 2: This chart shows how several 3D technologies compare when considering Z resolution and accuracy versus distance and field of view (FOV). Source: SICK

There are often many possible ways to solve a specific vision task. In some cases, the choice of either 2D or 3D vision is obvious, but in other cases both technologies could work, with each providing certain benefits. It is important to understand these benefits and how they apply to a given application in order to provide a reliable machine vision solution. In general, 3D is best suited not only for analyzing the volume, shape or 3D position of objects, but also for detecting parts and defects that are low contrast but have a detectable height difference. The third dimension is mainly used for measuring, inspecting and positioning, but there are also cases where 3D is used to read imprinted code or text when contrast information is missing.

Capturing the third dimension can be done in many different ways, and each of the machine vision technologies available has its pros and cons. Three-dimensional imaging can be broken into two main categories: passive and active. From there it can be broken into much more specific techniques. Passive techniques include depth from focus, light field, and stereo. The main active techniques are based on time-of-flight, structured light, and interferometry.

Three-dimensional imaging can also be classified by how the image is actually acquired: by snapshot or by scanning.

SNAPSHOT TECHNOLOGY

There are both active and passive systems that use the “snapshot” method. This is the technique most people are familiar with, as it is the method used in the cameras they have used for years. The snapshot method captures all the pixels of the image at the same time. In the case of passive stereo, multiple images are captured from different perspectives, and the difference between the images is used to calculate the distance to the object (think human sight). The same result can be achieved with a single camera, if the camera can be moved to multiple locations.

An active snapshot technique would include time-of-flight, which measures how long it takes for light to travel to the target and return to a sensor array. In this way, each pixel directly gets a 3D measurement, and only one sensor is needed. There are also variations of the snapshot technique, such as the active method of coded light projection. In this case, several high-speed images of the same object are captured as the lighting pattern changes. Then a composite image is created showing depth based on the differences in illumination patterns. Typically the patterns are a series of increasingly narrow lines; finer and finer lines are used to achieve greater resolution.
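As a rough illustration of how a sequence of increasingly narrow line patterns can be decoded, the sketch below assumes a plain binary coding (real systems often use Gray code or phase-shifted patterns instead). Each pattern contributes one bit per pixel, and the combined bits identify which projected stripe lit that pixel. The function names, the threshold and the intensity values are hypothetical.

```python
def binarize(intensities, threshold):
    """Turn one pixel's intensity under each pattern into bright (True) / dark (False)."""
    return [value > threshold for value in intensities]


def decode_stripe_index(bits):
    """Combine per-pattern bits (coarsest pattern first) into a stripe index."""
    index = 0
    for bit in bits:
        index = (index << 1) | (1 if bit else 0)
    return index


# One pixel observed under 4 patterns, coarsest stripes first.
# Hypothetical 8-bit intensities; 4 patterns distinguish 2**4 = 16 stripes.
observed = [200, 35, 180, 190]
stripe = decode_stripe_index(binarize(observed, threshold=128))
print(stripe)  # bits 1011 -> stripe 11
```

Each additional, finer pattern doubles the number of distinguishable stripes, which is why more patterns give greater resolution. The stripe index would then still have to be converted to depth by triangulation against a calibrated projector and camera, which is not shown here.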

SCANNING TECHNOLOGY

Then there are scanning techniques like laser triangulation, where a laser light is projected on the object and the camera captures (typically high-speed) images of the light projection as the object moves through the scanning area. A different type of scanning technique is depth from focus. Three-dimensional data is created by grabbing a sequence of images over a preset focus range. The stack of images is scanned through to see where the local focus is maximized, and range is derived from this. This method, while beneficial in that no structured light is needed, can be slow, and the object needs to have some structure for the focus to be estimated.
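The depth-from-focus idea can be sketched in a few lines. The example below is a simplified illustration, not a production algorithm: it uses 1D image rows instead of full images, and it assumes a squared second difference as the focus measure (one of several possible sharpness measures). The stack values and focus positions are made up.

```python
def focus_measure(row, i):
    """Squared second difference: large where the local neighborhood is sharp."""
    return (row[i - 1] - 2 * row[i] + row[i + 1]) ** 2


def depth_from_focus(stack, z_positions):
    """For each interior pixel, return the focus position of the sharpest image."""
    width = len(stack[0])
    depths = []
    for i in range(1, width - 1):
        scores = [focus_measure(row, i) for row in stack]
        best = max(range(len(stack)), key=scores.__getitem__)
        depths.append(z_positions[best])
    return depths


# Hypothetical focus stack: three 1D image rows grabbed at 10, 11 and 12 mm.
stack = [
    [10, 90, 10, 40, 50, 40],  # left side in focus
    [30, 50, 30, 20, 50, 45],
    [35, 45, 35, 10, 90, 10],  # right side in focus
]
z_positions = [10.0, 11.0, 12.0]

print(depth_from_focus(stack, z_positions))  # [10.0, 10.0, 12.0, 12.0]
```

On a uniform surface every image in the stack scores the same, so no maximum can be found. This is the reason the object needs some structure.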

In a scanning technology, 3D images are acquired profile by profile, either by moving the object through the measurement region or by moving the camera over the object. To obtain correct 3D data and thus a valid 3D image, the movement must be either constant or precisely known, e.g., by using an encoder to track motion. The resulting 3D images are usually very accurate. Snapshot technologies create a complete 3D image of objects by taking a single shot, just like a typical consumer camera, but in 3D. Object or camera movement is not necessary, but the images are generally less accurate than those produced by scanning technologies.
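To illustrate why tracking motion with an encoder matters, the following sketch places each profile along the travel direction using the encoder reading taken at the moment the profile was acquired. The function name, the encoder counts and the millimeters-per-count factor are hypothetical.

```python
def profile_positions_mm(encoder_counts, mm_per_count):
    """Convert the encoder reading at each profile into a position along the travel direction."""
    start = encoder_counts[0]
    return [(count - start) * mm_per_count for count in encoder_counts]


# Hypothetical readings: the conveyor speed varies slightly between profiles.
counts = [1000, 1020, 1041, 1059, 1080]
positions = profile_positions_mm(counts, mm_per_count=0.05)

for count, position in zip(counts, positions):
    print(f"count {count}: {position:.2f} mm")
```

If the profiles were simply assumed to be equally spaced, the speed variation in this example would stretch or compress parts of the 3D image.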

The 3D imaging technologies described in more detail below are laser triangulation (scanning), time-of-flight (snapshot) and stereo (snapshot).

LASER TRIANGULATION

Laser triangulation uses a laser line and a camera to collect height profiles across the object. The profiles are put together to create a 3D image while the object is moving. Since the height profile acquisition requires object movement, the method is a scanning technology. Laser triangulation has higher measurement accuracy than time-of-flight, but it has a more limited measurement range. In addition, occlusion can occur when the laser line is hidden from the camera behind part of the object. Typical applications include log, board and veneer wood inspection; electrical component and solder paste inspection; and food and packaging inspection. Below are some of the key advantages and limitations of laser triangulation:

Advantages:

- No need for ambient light

- High detail resolution and accuracy

- “Micrometer to mm” resolution scalability

- No additional scanning needed for moving objects

Limitations:

- Relatively short measurement range

- Occlusion (shadow effects)

- Laser speckles

- Not suitable for large outdoor applications (~ > 1 m FOV)

- Not snapshot
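The triangulation principle itself can be shown with a small calculation. This is a sketch under a simplified geometry that is assumed here for illustration: the camera looks straight down at the reference plane with a constant image scale, and the laser sheet is inclined by a known angle from the camera's optical axis. In that setup, a surface raised by height h shifts the laser line sideways in the image by h · tan(angle). Real systems rely on calibration rather than this idealized formula, and all names and values below are hypothetical.

```python
import math


def height_from_line_shift(shift_px, mm_per_px, laser_angle_deg):
    """Height above the reference plane from the sideways shift of the laser line.

    Assumes a camera looking straight down with a constant scale (mm_per_px)
    and a laser sheet inclined laser_angle_deg from the camera's optical axis.
    """
    shift_mm = shift_px * mm_per_px
    return shift_mm / math.tan(math.radians(laser_angle_deg))


# Hypothetical setup: 0.1 mm per pixel, laser inclined 45 degrees.
for shift in (5, 10, 20):
    height = height_from_line_shift(shift, mm_per_px=0.1, laser_angle_deg=45.0)
    print(f"shift {shift} px: height {height:.2f} mm")
```

In this model a larger laser angle produces a bigger line shift per millimeter of height, which improves height resolution but also increases the shadowed (occluded) area behind tall features.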

TIME-OF-FLIGHT

Time-of-flight (TOF) 3D cameras create 3D images by using snapshots. This means no object or camera movement is needed. The technology measures the time-of-flight of a light signal between the device and the target for each point of the image. By measuring the phase shift of the returning signal relative to the emitted signal, the distance between the device and target can be derived. The result is an instant 3D image of the target. Time-of-flight is well suited for applications with a large field of view and a working distance over 0.5 m.

Advantages:

- No need for ambient light

- Large measurement range

Limitations:

- Relatively low detail resolution and accuracy

- Z resolution > cm

- Limited X-Y resolution

- Secondary reflections

- Fast movements
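The phase-shift measurement described for time-of-flight can be turned into a distance with a short formula. The sketch below assumes a continuously modulated light signal, for which distance = c · phase / (4π · modulation frequency); the factor of two hidden in 4π accounts for the round trip. The 20 MHz modulation frequency is only an example value, not a figure from any specific camera.

```python
import math

C = 299_792_458.0  # speed of light in m/s


def distance_from_phase(phase_rad, modulation_hz):
    """Distance to the target from the phase shift of the returned signal."""
    return C * phase_rad / (4 * math.pi * modulation_hz)


def unambiguous_range(modulation_hz):
    """Largest distance before the phase wraps around (phase = 2*pi)."""
    return C / (2 * modulation_hz)


modulation_hz = 20e6  # example modulation frequency
print(f"unambiguous range: {unambiguous_range(modulation_hz):.2f} m")
print(f"distance at 90 degrees phase shift: "
      f"{distance_from_phase(math.pi / 2, modulation_hz):.2f} m")
```

Because the phase repeats every full cycle, targets beyond the unambiguous range would be reported at a wrong, shorter distance unless the camera takes additional measures.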

STEREO

Stereo imaging works similarly to human vision and provides snapshot 3D images without the need for external movement. It combines two 2D images taken from different positions and finds correlations between the images to create a depth image. Unlike laser triangulation and TOF, stereo technology does not depend on a dedicated light source, which makes it suitable for applications with a large field of view and for outdoor usage. However, to find correlations, the two images need sufficient detail, and the objects need sufficient texture or non-uniformity. To obtain better results, one may need to add that detail by illuminating the scene with structured lighting.

Advantages:

- Large measurement range

- Suitable for outdoor applications

- Can “freeze” a moving object/scene

- Real snapshot

- Good support in vision SW packages

Limitations:

- No structure - no data, which leads to illumination constraints

- Low detail level in X & Y – typically ~1:10 compared to pixels

- Poor accuracy in Z

- Limited depth-of-focus of camera
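Once a feature has been matched between the two stereo images, depth follows from its disparity, the sideways offset of the feature between the images. The sketch below assumes an idealized, rectified pair of identical cameras, for which depth = focal length × baseline / disparity. The focal length, baseline and disparity values are hypothetical.

```python
def depth_from_disparity(focal_px, baseline_mm, disparity_px):
    """Depth in mm for a rectified stereo pair (focal length and disparity in pixels)."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive; zero means no usable match")
    return focal_px * baseline_mm / disparity_px


# Hypothetical setup: focal length 1000 px, cameras 100 mm apart.
focal_px, baseline_mm = 1000.0, 100.0
for disparity in (50.0, 49.0, 10.0, 9.0):
    depth = depth_from_disparity(focal_px, baseline_mm, disparity)
    print(f"disparity {disparity:4.1f} px: depth {depth:8.1f} mm")
```

In this example a one-pixel matching error changes the result by about 41 mm at a depth of 2 m, but by more than 1 m at a depth of 10 m. This inverse relationship is one reason Z accuracy degrades with distance in stereo systems.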

In sum, there are several methods to achieve 3D imaging; selecting one depends on which would be the most appropriate for a given application and its environment. Just as there are several ways to capture 3D data, there are a couple of different ways to process those images.

CONFIGURABLE VISION SENSORS

The simplest vision systems are self-contained cameras with built-in image triggering, lighting and embedded image analysis capabilities. Their configuration is simple and can be performed by any technically oriented person after a few hours of training. Important benefits of configurable vision sensors include:

- Stand-alone operation

- Parameter configurable

- Result output

- Intuitive GUI for easy configuration

- Rapid solution development

- Remote configuration via PC

- Embedded image processing

PROGRAMMABLE (SMART CAMERAS)

These cameras are in the mid-range of complexity. As with configurable vision sensors, the analysis is embedded in the device. The difference is that smart cameras are more flexible in hardware configuration and software programming. A few days of training are needed to become an application developer. Important benefits of programmable smart cameras include:

- Stand-alone operation

- Versatile user programming

- Result output

- Flexibility using tailor-made GUI

- Flexible solution development

- Remote configuration via PC

- Embedded image processing

STREAMING (PC-VISION)

The most flexible vision systems are PC-based. The cameras generate images while the analysis is performed on a PC. Expert application developers often prefer this type of system, since it allows full flexibility to create both customized system functionality and algorithms in demanding applications. Important benefits of streaming include:

- Data streaming camera

- External PC processing

- Versatile image acquisition

- 2D and 3D image data output

- Flexibility by development GUI

- Fully flexible solution development

- Remote configuration via PC

- Software development kit

- PC image processing

CHOOSING THE RIGHT TECHNOLOGY FOR YOUR APPLICATION

Measuring the third dimension provides knowledge about object height, shape, or volume, but the requirements are often hard to pin down. Some users and customers find 3D requirements difficult to define because they are not used to specifying things in this way. Therefore, working with the customer to define requirements, while testing in an iterative process, can be a good strategy.

As with any vision project, defining requirements and acceptance criteria is imperative. That includes accepting that classification is never 100% accurate, which means defining with your customer the impact of false positives (rejecting good parts) versus false negatives (accepting bad parts). The impact can be very different depending on the application.
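One way to make the trade-off between false positives and false negatives concrete is to put a cost on each. The sketch below is only an illustration of that arithmetic; the defect rate, error rates and costs are invented and would have to be agreed on with the customer for a real application.

```python
def expected_cost_per_part(defect_rate, false_reject_rate, false_accept_rate,
                           cost_false_reject, cost_false_accept):
    """Average cost of misclassification per inspected part.

    false_reject_rate: share of good parts that are rejected (false positives).
    false_accept_rate: share of bad parts that are accepted (false negatives).
    """
    good_share = 1.0 - defect_rate
    cost_rejects = good_share * false_reject_rate * cost_false_reject
    cost_accepts = defect_rate * false_accept_rate * cost_false_accept
    return cost_rejects + cost_accepts


# Hypothetical numbers: 2% of parts are defective, a scrapped good part
# costs 2.00, and a defective part reaching the customer costs 50.00.
cost = expected_cost_per_part(
    defect_rate=0.02,
    false_reject_rate=0.01,
    false_accept_rate=0.05,
    cost_false_reject=2.0,
    cost_false_accept=50.0,
)
print(f"expected cost per part: {cost:.4f}")
```

With these made-up figures, accepted bad parts dominate the cost even though they are far rarer than rejected good parts, which would argue for a stricter inspection threshold. A different application could easily show the opposite.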

With all of the different choices available for capturing and processing 3D data, it might seem overwhelming, but if you concentrate on the basics like field of view, distance to the object, speed of the application, speed of the objects being measured, and overall resolution required, you will find a technology that fits your application.
