Rapidly evolving, high-quality imaging technology is ushering in a new world of vision guided robotics (VGR) for manufacturers in diverse industries. While next-generation imaging systems are more adept than ever at providing the functionality needed to efficiently and economically fulfill unique application requirements, many decision makers are still trying to wrap their minds around the concept of VGR and how to best implement these sophisticated tools into operations to gain a competitive edge.

The Need for Vision Guided Robotics

Conventional blind robots work best when everything is consistent—where the part or object is always in the same place and positioned in the same fashion. However, as soon as an object varies in size, orientation or location, robots without special capabilities fail. Just like humans, robots need vision to help mitigate that randomness.

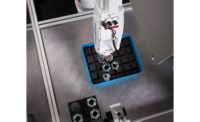

The use of vision guided robotics can alleviate this problem by finding the object and telling the robot where the object is located, allowing the robot to work more efficiently in an unpredictable environment. VGR encompasses the various technologies used to recognize, process and handle parts and objects based on visual data captured via special equipment such as cameras, lenses, lighting and the necessary software required to provide reference points to the robot.

Reference points, or coordinates, enable the robot to pick and handle an object with the accuracy needed for many material handling applications today. These reference points are either X-Y coordinates for two-dimensional (2D) vision or X-Y-Z coordinates for 3D vision. The robot uses them to pick, handle and orient an object regardless of its location, as long as it is within the imaging system’s field of view.
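
As a rough illustration of how a 2D reference point might reach the robot, the sketch below converts a pixel location in the image into X-Y coordinates in the robot's frame. It assumes a flat work surface and a simple calibration made up of a scale, a rotation and an offset. The names `Calibration2D`, `pixel_to_robot` and `in_field_of_view` are hypothetical and are not taken from any vendor's software; commercial systems typically handle calibration internally.

```python
import math
from dataclasses import dataclass


@dataclass
class Calibration2D:
    """Hypothetical 2D calibration between image pixels and robot millimeters."""
    mm_per_pixel: float  # size of one pixel on the work surface
    angle_deg: float     # rotation of the camera axes relative to the robot axes
    offset_x_mm: float   # robot X coordinate of the image origin (pixel 0, 0)
    offset_y_mm: float   # robot Y coordinate of the image origin (pixel 0, 0)


def in_field_of_view(u, v, width_px, height_px):
    """Return True if pixel (u, v) lies inside the image."""
    return 0 <= u < width_px and 0 <= v < height_px


def pixel_to_robot(u, v, cal):
    """Convert pixel (u, v) into robot X-Y coordinates in millimeters."""
    theta = math.radians(cal.angle_deg)
    # Scale pixels to millimeters in the camera's own axes.
    x_cam = u * cal.mm_per_pixel
    y_cam = v * cal.mm_per_pixel
    # Rotate into the robot's axes, then shift by the calibrated offset.
    x = cal.offset_x_mm + x_cam * math.cos(theta) - y_cam * math.sin(theta)
    y = cal.offset_y_mm + x_cam * math.sin(theta) + y_cam * math.cos(theta)
    return x, y


if __name__ == "__main__":
    cal = Calibration2D(mm_per_pixel=0.5, angle_deg=0.0,
                        offset_x_mm=100.0, offset_y_mm=200.0)
    if in_field_of_view(40, 20, width_px=640, height_px=480):
        print(pixel_to_robot(40, 20, cal))
```

A 3D system would add a Z value and a fuller transformation, but the idea is the same: the vision system reports where the object is, expressed in coordinates the robot can move to.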

Simple Steps to VGR Implementation

Traditionally, vision systems were expensive and unreliable, leaving many manufacturers discouraged and doubtful about the technology. While the need for more affordable, sophisticated imaging tools has been addressed in the recent past, many manufacturers still feel overwhelmed with the process of VGR implementation. The good news is that as software progresses to match the speed and diversity of camera hardware, vision technology continues to be easier to install, use and maintain.

Complete vision systems are becoming more integrated and prepackaged by vendors, relieving companies from having to assemble an erector set on their own, and sophisticated 2D and 3D machine imaging systems are effectively bridging the gap for manufacturers with unique demands. However, for decision makers still on the fence or feeling overwhelmed about the use of vision guided robotics, following a few simple steps may be helpful.

Fully integrated vision systems are growing in popularity and help early adopters gain a competitive advantage.

Define Goals

While it may seem simplistic, the key to successful VGR implementation for any company is to start with well-defined goals. Whether the desired outcome is better quality, increased productivity, greater flexibility, lower costs or something else, having a clear-cut end goal will help decision makers decipher the proper vision technology required to achieve success.

Understand the Application

VGR is a continuum, where the “right” solution is dependent on the specific task at hand. It is important for manufacturers to know every step of the application process, so that the right technology can be chosen. With multiple types of vision systems to choose from, knowing product specifications and standards, as well as the desired end result, can help determine which type of system to use.

Consider the End User

Manufacturers should consider end customer product demands. Does the part need to be produced faster? Does the product quality need to be better to meet higher standards? There is much to weigh where end user requirements are concerned. Speaking with end customers and compiling a list of the ideal product specifications is highly recommended.

Monitor Trends

With hardware and software constantly improving, it is important to be aware of the range of applications in the industry and to keep track of which imaging tools are being used. As mentioned, the trend toward more integrated systems, where the camera, lighting and software are all combined and packaged together, is growing in popularity and helping early adopters of the technology gain a competitive advantage.

Learn More

A basic understanding of the vision tools available and their ability to positively impact a given application is needed. With multiple imaging systems to choose from, there are many different nuances to consider.

Partner Strategically

Once the previous steps have taken place, it is time to reach out to automation experts to create the “right” robotic solution. Robot OEMs have experienced application engineers that specialize in conceptualizing advanced robotic solutions that seamlessly integrate robots, peripherals and software, delivering the ideal solution for customers.

Recognition vision solutions can simplify the use of 3D vision in robotic guidance applications. The solutions can learn and identify many objects regardless of presentation within the visual field of the camera.

Again, there is much to learn and know about vision guided robotics before beginning the implementation process. Other items for decision makers to consider include:

Structured Environment vs. Random Environment

The biggest difference between 2D and 3D vision systems is how they work and handle the randomness of the objects that are presented. A distinction that will most likely arise in conversation with a strategic partner is that of a structured environment versus a random environment.

- Structured environment – where objects are positioned or stacked in an organized, predictable pattern, so they can be easily imaged and picked.

- Semi-structured environment – where parts are positioned with some organization and predictability to help aid imaging and picking.

- Random environment – where parts are in totally random positions, including different orientations, or are overlapping or entangled.

While one could assume that a more structured environment is better suited for a 2D vision system and that a random environment is more ideal for a 3D vision system, determining the proper system for use really depends on the application and what the customer wants.

Inspection vs. Robot Guidance

There are two key types of vision systems: inspection and robot guidance. While most applications seem to utilize systems that focus on robot guidance, there is still a great deal of interest in inspection.

Inspection

Inspection systems look to judge the quality or fitment of parts. For instance, does the part meet the standard? These systems are sometimes looked at as a “cost of doing business,” since their job is to discard parts that do not meet the standard. These types of systems are better suited than humans for doing repetitive tasks, and they are faster and more objective. Consider the following examples:

- Bottle inspection – on an assembly line, if there is sufficient liquid in the bottle, the vision system detects it and the bottle passes inspection. Conversely, if there is not enough liquid in the bottle to meet the standard, the imaging system detects the low level, and the bottle is rejected.

- Oil filter inspection – with oil filter inspection, a camera can help determine if there are blockages in a hole, and the bad filters can be rejected.
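
The bottle example can be sketched as a simple pass/reject decision. The code below is a minimal sketch, assuming the camera supplies a single column of brightness values read from the top of the bottle to the bottom and that the liquid appears darker than the empty glass. The function names, the brightness threshold and the 80 percent minimum fill are illustrative assumptions, not values from any standard or product.

```python
def fill_fraction(column, dark_threshold):
    """Estimate how full the bottle is from one column of brightness values.

    `column` is read top to bottom. The first value darker than
    `dark_threshold` is treated as the liquid surface.
    """
    for row, value in enumerate(column):
        if value < dark_threshold:
            return (len(column) - row) / len(column)
    return 0.0  # no dark pixels found: treat the bottle as empty


def inspect_bottle(column, dark_threshold=100, min_fill=0.8):
    """Return "pass" if the estimated fill meets the minimum, else "reject"."""
    if fill_fraction(column, dark_threshold) >= min_fill:
        return "pass"
    return "reject"


if __name__ == "__main__":
    full_bottle = [200] * 2 + [50] * 8   # liquid starts 2 rows from the top
    low_bottle = [200] * 5 + [50] * 5    # liquid starts halfway down
    print(inspect_bottle(full_bottle))
    print(inspect_bottle(low_bottle))
```

A production system would work on full images under controlled lighting, but the decision logic stays the same: measure, compare against the standard, then pass or reject.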

Guidance

Guidance systems are used to mitigate the unknown or compensate for some level of randomness. Easily seen as a benefit, as they improve throughput and material handling capabilities, these types of systems locate the object and help to guide the robot to it. Consider the following examples:

- Picking and Placing – a camera or cameras can detect objects that are advancing down a conveyor in a semi-structured fashion. The cameras determine the X, Y coordinates for the robot to pick the individual items and place them on the opposite conveyor.

- Positioning – if items (or even the robot) have the tendency to move, vision can help reorient everything and provide relative positioning to the robot to account for any change in position.
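
The positioning example amounts to shifting a taught pick point by however far the part has moved and turned. The sketch below is one way this might look in 2D, assuming each pose is given as X and Y in millimeters plus an angle in degrees. The function `corrected_pick_point` and its arguments are hypothetical; robot controllers usually provide their own offset or user-frame features for this purpose.

```python
import math


def corrected_pick_point(taught_pick, taught_part, found_part):
    """Shift a taught pick pose so it follows the part to its new location.

    Each pose is a tuple (x_mm, y_mm, angle_deg) in the robot's frame:
      taught_pick - where the robot picked when the job was taught
      taught_part - where vision saw the part when the job was taught
      found_part  - where vision sees the part now
    """
    px, py, pa = taught_pick
    tx, ty, ta = taught_part
    fx, fy, fa = found_part

    # How far the part has turned since the job was taught.
    d_angle = fa - ta
    theta = math.radians(d_angle)

    # Pick point relative to the part at teach time.
    rx, ry = px - tx, py - ty

    # Rotate that relative offset with the part, then re-anchor it
    # at the part's current location.
    x = fx + rx * math.cos(theta) - ry * math.sin(theta)
    y = fy + rx * math.sin(theta) + ry * math.cos(theta)
    return x, y, pa + d_angle


if __name__ == "__main__":
    taught_pick = (110.0, 50.0, 0.0)
    taught_part = (100.0, 50.0, 0.0)
    found_part = (130.0, 70.0, 90.0)  # part has shifted and turned 90 degrees
    print(corrected_pick_point(taught_pick, taught_part, found_part))
```

The same relative approach applies if the robot itself has moved: vision finds a known feature, and every taught point is offset by the difference.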

“On the Robot” Camera vs. “Off the Robot” Camera

One item for decision makers to consider is whether a camera should be on the robot or off the robot. While this is usually determined by the application, it is sometimes forced by the solution: some 3D vision systems require an “off the robot” camera, while some 2D solutions can use either.

A growing trend is to have an “off the robot” camera. This makes cable management easier, provides a larger field of view and facilitates parallel operations. Conversely, “on the robot” cameras provide a smaller field of view but can adjust to different depths of field. Both solutions have their unique advantages, and each option should be considered in light of the application.

Other Offerings

Vision technology is constantly being updated, especially where speed and resolution are concerned, and cameras will always have varying levels of resolution and performance. That said, the key concept to remember is that the camera used for a given application greatly depends on the size of the part and, if applicable, the bin size. Pendant tools and macros can also help ease the development of applications and improve performance.
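
To see why part and bin size drive camera selection, it helps to work out how much of the scene each pixel covers. The sketch below divides the field of view by the number of pixels along one axis, then estimates how many pixels would be needed to resolve the smallest feature of interest. The default of three pixels per feature is an assumed rule of thumb for illustration only, and the function names are hypothetical.

```python
import math


def mm_per_pixel(fov_mm, sensor_px):
    """Millimeters of the scene covered by one pixel along one axis."""
    return fov_mm / sensor_px


def required_pixels(fov_mm, smallest_feature_mm, pixels_per_feature=3):
    """Pixels needed along one axis to resolve the smallest feature.

    `pixels_per_feature` is an assumed rule of thumb, not a standard.
    """
    return math.ceil(fov_mm / smallest_feature_mm * pixels_per_feature)


if __name__ == "__main__":
    # A 600 mm wide bin viewed by a camera 1,200 pixels wide.
    print(mm_per_pixel(600, 1200))
    # Pixels needed across the same bin to resolve a 2 mm feature.
    print(required_pixels(600, 2))
```

A larger bin viewed with the same camera spreads the pixels over more area, so small features on the part become harder to resolve.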

Conclusion

Inspection, material handling and picking are some of the most common applications for vision guided robotics. Vision systems can directly help save money and increase profitability by reducing defects, improving handling capabilities and increasing yields. While next-generation imaging systems are more capable than ever at providing the functionality needed to efficiently and economically fulfill unique application requirements, getting to the point of implementation can be overwhelming for some manufacturers. Following several simple steps and weighing all of the technological possibilities before partnering with a robotic OEM for VGR can help facilitate an easier implementation process.