As the pendulum swings from decades of heavily customized PLM toward far more flexible, highly configured platforms, organizations need to borrow a page from modern software development and make automated testing a routine part of deployment.

PLM, like most enterprise platforms, has evolved from a monolithic system with rigid controls and lengthy release cycles into a set of continuously updated modules that can be configured in any number of combinations. Along with the flexibility to tailor the system to the specific requirements of a particular business, however, comes an unavoidable reality: workflows, processes, and software settings can be combined in endless permutations, far more than any single PLM vendor can adequately test and vet.

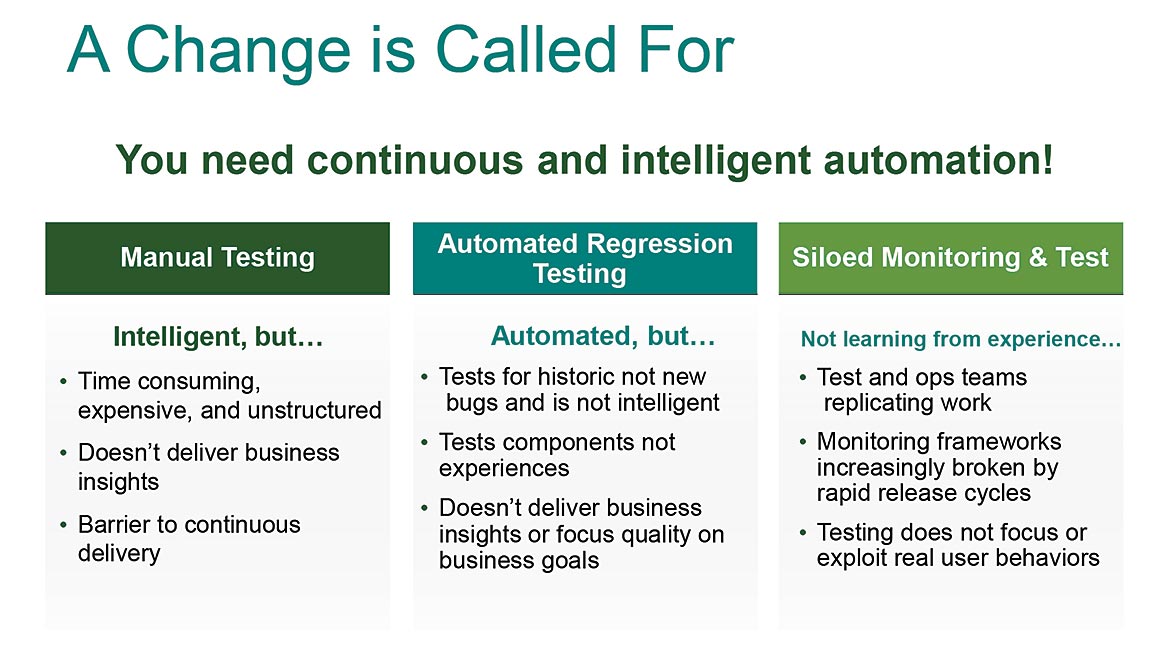

Image 1. A Change Is Called For. Image source: Razorleaf

Testing and validation (Image 1) have always been a major component of enterprise PLM programs. Traditionally, however, the process has been highly labor-intensive, relying on people to click manually through the full compendium of workflows and settings to confirm that the system works as intended. Manual testing does not scale, and it is a costly burden for most PLM teams. Testers must be fluent in the PLM software itself and must devote considerable time to the process, an inefficient use of scarce expertise and expensive software-development resources.

Some organizations have evolved their PLM test strategies to include automated regression testing, which is faster to execute and somewhat more scalable than traditional manual testing. Yet even this more modern approach has its challenges and limitations. Every regression test script must be built by hand, so the suite checks only the areas someone has specifically identified. Regression strategies are also designed to put individual components of the PLM software through their paces, not necessarily to exercise the end-to-end user experience across the range of physical peripherals and devices people use in their daily work. And because users can reach the same functionality through myriad pathways, scripted tests leave no robust, traceable audit trail for determining where a PLM implementation might veer off track.

Given the size of the commitment, most organizations end up skimping on test planning. Yet without a sufficient strategy and formal exercises that test the end-to-end user experience, PLM deployment teams leave themselves open to bugs and software glitches. These trouble spots degrade the user experience and undermine user acceptance. If employees start working around the system to compensate, workflow improvements, and even the success of the entire PLM program, can fall by the wayside.

Streamlining the Process with Automated Testing

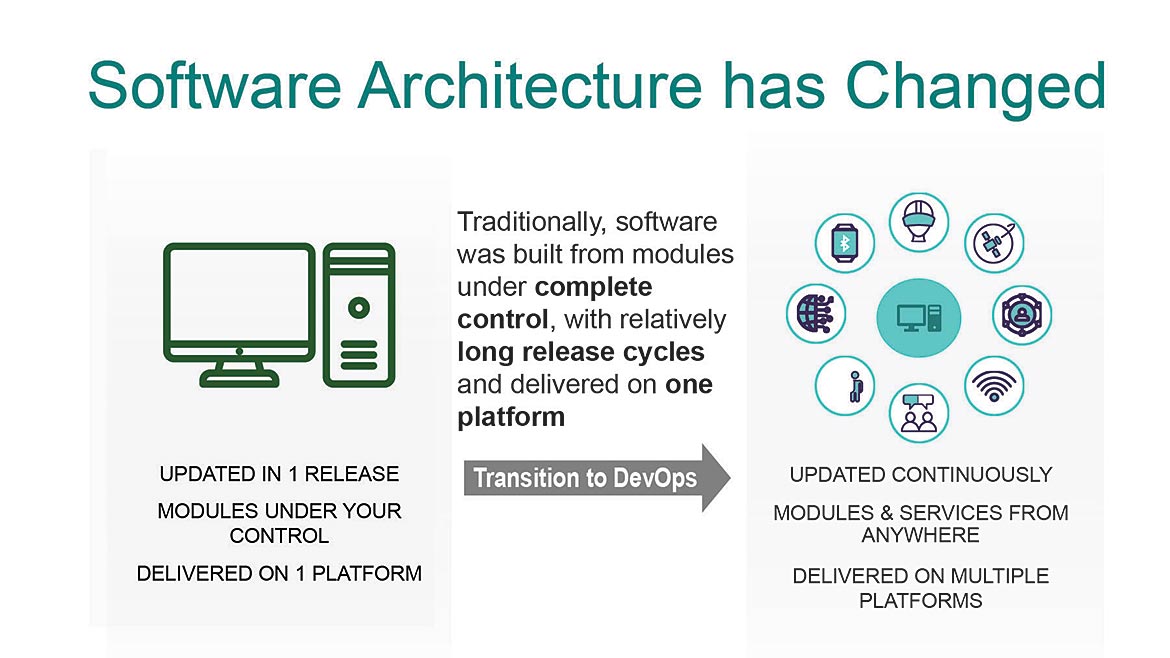

To overcome PLM testing challenges, IT organizations should borrow a page from the modern software development playbook (Image 2) and adopt such agile practices as DevOps, Continuous Integration and Continuous Deployment (CI/CD), and automated testing. Automated test practices ensure that testing scales exponentially to meet the needs of a PLM roll out, including the ability to test more functionalities and test more frequently. Use of automation means there’s less need for human intervention, which allows high-value IT and engineering talent to focus on more important endeavors.

Image 2. Software Architecture has Changed Image Source: Razorleaf

With automated testing, organizations can shift test runs to off-peak hours, including nights and weekends. This greatly reduces the load that testing places on systems and networks and, more importantly, minimizes disruption to business operations. A repeatable, automated test plan also produces a steady stream of data that can reveal trends, helping teams proactively identify and correct PLM system issues before users encounter them.
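As a rough illustration of how repeatable nightly runs yield data worth mining, the Python sketch below compares each test's recent failure rate with its earlier baseline and flags tests that are getting worse. The record format, the window size, the threshold, and the function names (`failure_rate`, `flag_degrading_tests`) are hypothetical assumptions made for this example, not features of any particular test tool.

```python
from collections import defaultdict

# Hypothetical record from a scheduled off-peak run:
# (run_index, test_name, passed), where run_index increases over time.
Run = tuple[int, str, bool]


def failure_rate(outcomes: list[bool]) -> float:
    """Fraction of outcomes that failed; 0.0 for an empty list."""
    if not outcomes:
        return 0.0
    return sum(1 for passed in outcomes if not passed) / len(outcomes)


def flag_degrading_tests(runs: list[Run], recent: int = 5, threshold: float = 0.2) -> list[str]:
    """Flag tests whose failure rate over their last `recent` runs exceeds their
    earlier failure rate by more than `threshold` (both are assumed tuning knobs)."""
    history: dict[str, list[tuple[int, bool]]] = defaultdict(list)
    for run_index, name, passed in runs:
        history[name].append((run_index, passed))

    flagged = []
    for name, results in history.items():
        results.sort()  # chronological order by run_index
        outcomes = [passed for _, passed in results]
        baseline, latest = outcomes[:-recent], outcomes[-recent:]
        if not baseline:
            continue  # not enough history to compare against yet
        if failure_rate(latest) - failure_rate(baseline) > threshold:
            flagged.append(name)
    return sorted(flagged)


# Ten nightly runs: one test is stable, the other fails from run 6 onward.
runs = [(i, "release_eco", True) for i in range(10)]
runs += [(i, "check_in_cad", i < 6) for i in range(10)]
print(flag_degrading_tests(runs))  # ['check_in_cad']
```

A simple before/after comparison like this is only one way to spot a trend; a real program might prefer statistical tests or dashboards, but the point is that the data exists only because the runs are automated and repeatable.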

When evaluating automated testing tools, organizations should look for a few core capabilities that distinguish a tool that genuinely advances testing from a basic one. Automated test capabilities have been around for decades; the macro recorders of the early Windows 95 era are one example. But unlike those early offerings, which were essentially click recorders, a modern automated test tool should apply intelligence across more of the testing lifecycle by automatically generating test cases and test assets, which cuts costs and speeds up the entire process.

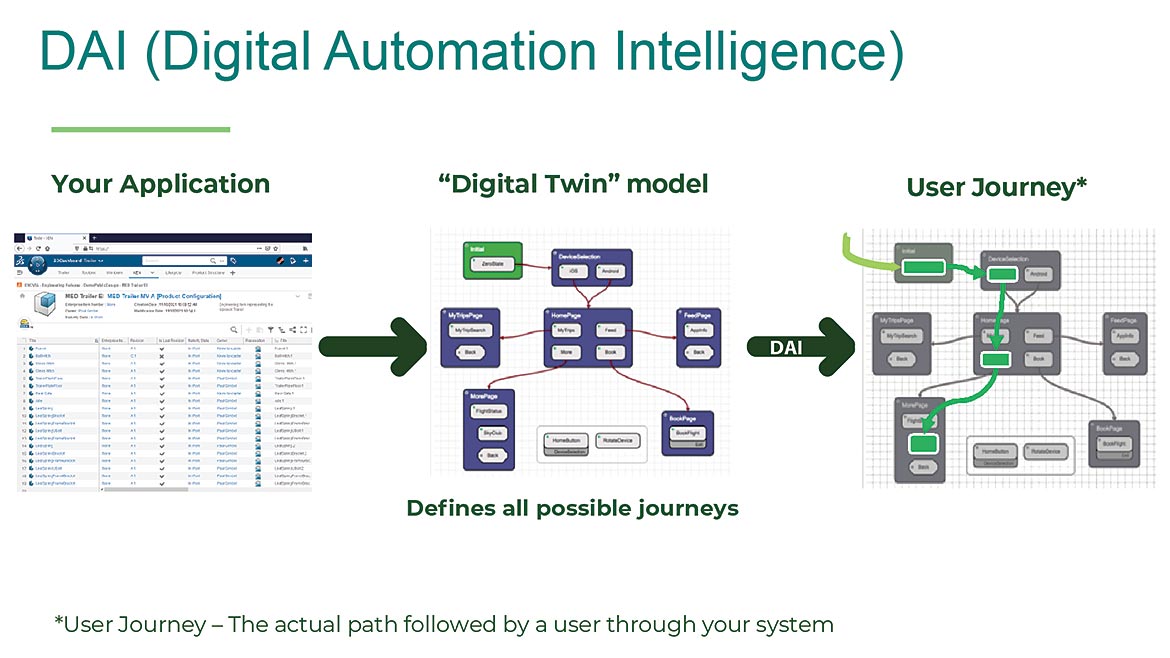

A more capable automated testing platform should also go beyond testing API results and have the intelligence to test the actual user experience across different web browsers, native apps, machine configurations, and any other systems and tools commonly employed by users. One way to achieve that goal is to model a digital twin of the PLM application (Image 3), leveraging built-in intelligence to understand and explore the workflows and processes to create a natural test path as opposed to being limited to one or two specific user journeys.

Image 3. Eggplant DAI (Digital Automation Intelligence) Image Source: Razorleaf
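The internals of Eggplant DAI are not described here, so the following is only a generic sketch of the underlying idea, usually called model-based testing: describe the application as a graph of screens and user actions, then derive test paths from that model instead of hand-scripting each journey. The model, state names, and functions are hypothetical, and this simple strategy (reach each transition by the shortest route, then drop redundant prefixes) only guarantees that every reachable transition is exercised at least once.

```python
from collections import deque

# Hypothetical model of a PLM client: each state (screen) maps to the actions a
# user can take there and the state each action leads to.
Model = dict[str, list[tuple[str, str]]]
Step = tuple[str, str, str]  # (from_state, action, to_state)


def shortest_routes(model: Model, start: str) -> dict[str, list[Step]]:
    """Breadth-first search: the shortest sequence of steps to each reachable state."""
    routes: dict[str, list[Step]] = {start: []}
    queue = deque([start])
    while queue:
        state = queue.popleft()
        for action, nxt in model.get(state, []):
            if nxt not in routes:
                routes[nxt] = routes[state] + [(state, action, nxt)]
                queue.append(nxt)
    return routes


def generate_test_paths(model: Model, start: str) -> list[list[Step]]:
    """Derive test paths from `start` that together exercise every reachable transition."""
    routes = shortest_routes(model, start)
    # One candidate per transition: reach its source state, then take it.
    candidates = [
        route + [(state, action, nxt)]
        for state, route in routes.items()
        for action, nxt in model.get(state, [])
    ]
    # Longest first, so shorter paths that are prefixes of kept ones can be dropped.
    candidates.sort(key=len, reverse=True)
    kept: list[list[Step]] = []
    for path in candidates:
        if not any(other[: len(path)] == path for other in kept):
            kept.append(path)
    return kept


model: Model = {
    "login": [("sign_in", "home")],
    "home": [("open_part", "part"), ("search", "results")],
    "results": [("open_part", "part")],
    "part": [("start_change", "change_request"), ("back", "home")],
    "change_request": [("submit", "home")],
}

for path in generate_test_paths(model, "login"):
    print(" -> ".join(action for _, action, _ in path))
```

For this sample model the sketch produces three paths that together cover all seven transitions, including two different routes to the part screen. Adding a screen or action to the model updates the generated paths automatically, which is the main advantage over maintaining each script by hand.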

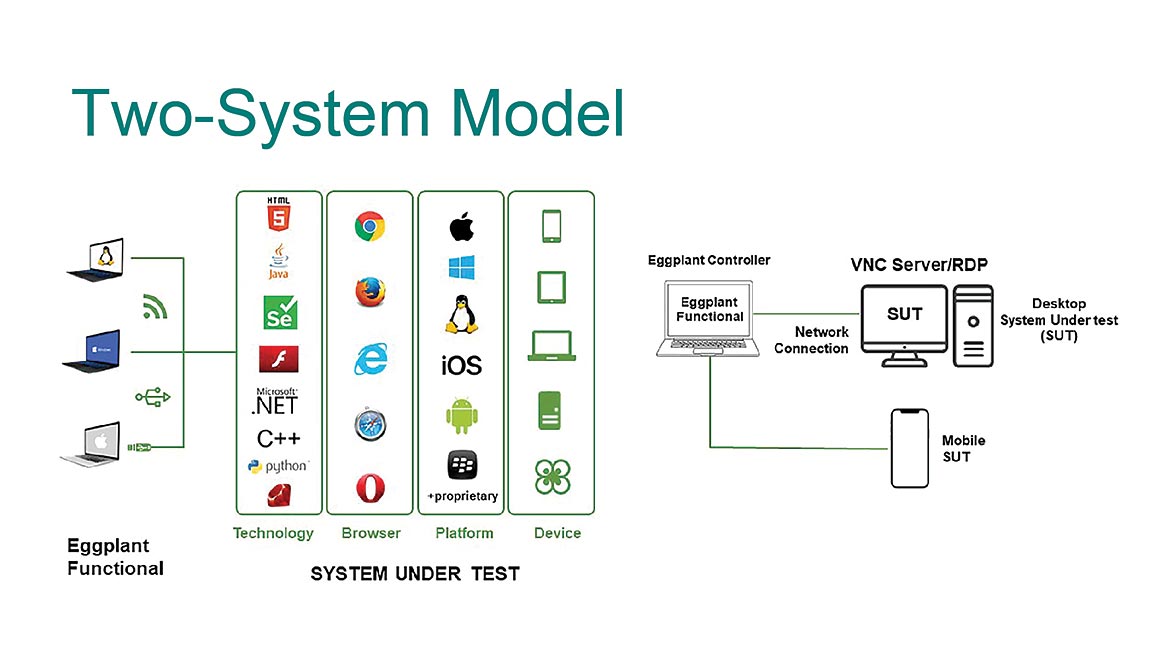

Another valuable feature of an automated PLM testing suite is support for a two-system model (Image 4). This approach separates the test tool from the system being tested, which adds to system usability and accuracy. The ability to separate the test tool from the PLM software being tested means there is no longer a requirement for a dedicated physical machine. This offers greater flexibility because you no longer need an expensive, dedicated resource for testing purposes, which is costly and labor intensive. It also opens the door to remote testing, which expands the test pool and promotes reuse so testing scripts don’t have to be rebuilt or redesigned every time.

Image 4. Eggplant Two-System Model Image Source: Razorleaf

A Best-Practice Roadmap

Beyond a robust, intelligent automated testing suite, organizations should take several steps to optimize their test strategies and build a culture that embraces testing rather than treating it as a necessary evil. Basic PLM testing best practices include:

Focus on the test plan. PLM systems are designed to appeal to a wide variety of companies across a broad range of industries, so the amount of functionality in PLM platforms is massive. When designing an automated test plan, concentrate on the functionality that matters most to your processes and business. Design the plan to test important functionality more thoroughly rather than simply testing more functionality.
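One way to make "test the important things first" concrete is a simple risk score. The Python sketch below ranks candidate tests by an assumed score (business impact times share of users who touch the function) and fills a fixed time budget in that order. The fields, the scoring formula, the sample tests, and the function names are all hypothetical, and the greedy rule is a deliberate simplification rather than an optimal selection.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    """A candidate automated test; every field is an illustrative assumption."""
    name: str
    business_impact: int  # 1 (minor) to 5 (critical to the business)
    usage_share: float    # fraction of user sessions that touch this function
    runtime_min: float    # expected runtime of the test, in minutes


def risk_score(c: Candidate) -> float:
    # Simple risk proxy: how much the function matters times how often it is used.
    return c.business_impact * c.usage_share


def select_tests(candidates: list[Candidate], budget_min: float) -> list[str]:
    """Greedily pick tests in descending risk order while they fit the time budget."""
    chosen, used = [], 0.0
    for c in sorted(candidates, key=risk_score, reverse=True):
        if used + c.runtime_min <= budget_min:
            chosen.append(c.name)
            used += c.runtime_min
    return chosen


suite = [
    Candidate("release_engineering_change", 5, 0.40, 30),
    Candidate("check_in_cad_assembly", 4, 0.60, 45),
    Candidate("edit_user_preferences", 1, 0.30, 10),
    Candidate("export_bom_to_erp", 5, 0.20, 20),
]
print(select_tests(suite, budget_min=100))
# ['check_in_cad_assembly', 'release_engineering_change', 'export_bom_to_erp']
```

Because the loop keeps scanning after a test is skipped, a cheap, low-risk test can still fill leftover time; a team that wants depth over breadth might instead spend spare minutes on additional scenarios for the top-ranked functions.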

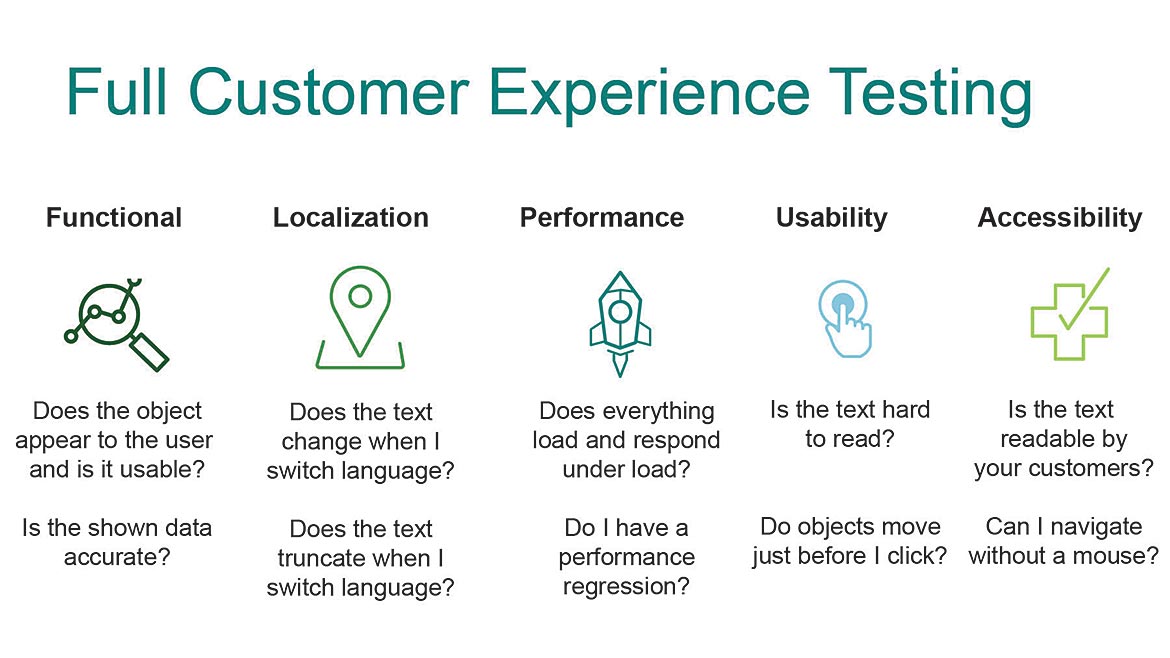

Consider the user journey. Probably the most profound lesson learned from implementing test automation for PLM is that it matters how users perform their tasks (Image 5). It’s critical to understand how users will navigate through the system not only to test those specific journeys, but also to understand what is important to them as users—specifically, what tasks they perform and what tools they leverage to achieve their daily goals.

Image 5. Full Customer Experience Testing Image Source: Razorleaf
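If session-level usage logs can be exported from the PLM platform or a proxy in front of it (an assumption; availability and format vary by platform), even a simple frequency count shows which journeys users actually follow and are therefore worth automating first. The log format and the `top_journeys` function below are hypothetical and illustrate the idea only.

```python
from collections import Counter

# Hypothetical usage-log entry: (session_id, action), in chronological order.
LogEntry = tuple[str, str]


def top_journeys(log: list[LogEntry], n: int = 3) -> list[tuple[tuple[str, ...], int]]:
    """Group actions by session, then count identical end-to-end journeys."""
    sessions: dict[str, list[str]] = {}
    for session_id, action in log:
        sessions.setdefault(session_id, []).append(action)
    return Counter(tuple(actions) for actions in sessions.values()).most_common(n)


# Interleaved entries from four sessions, as a real log would record them.
log = [
    ("s1", "search"), ("s2", "search"), ("s3", "browse_folder"),
    ("s1", "open_part"), ("s2", "open_part"), ("s3", "open_part"),
    ("s1", "start_change"), ("s2", "start_change"), ("s3", "start_change"),
    ("s4", "search"), ("s4", "open_part"), ("s4", "download_cad"),
]
for journey, count in top_journeys(log, n=2):
    print(count, " -> ".join(journey))
```

Even in this tiny sample, two different journeys end in the same task (starting a change), which is exactly the kind of variation a test plan built only around the "official" path would miss.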

Make the process painless. Testing has always been treated as a last resort: something everyone does but nobody actually wants to do. Automation removes the tedium and makes testing painless enough to become an inherent part of every deployment, whether that's a tiny change to a configuration setting, an update, or an upgrade to a more complex customization. Testing should become so ingrained in the development culture that nobody would consider rolling out a change to PLM without testing it first.

Align with an experienced partner. While the new generation of flexible, adaptable PLM is far easier to deploy than traditional offerings, implementation is still complex, and organizations can benefit from some guidance. An experienced, vendor-neutral PLM services provider can expedite deployment, including the execution of test automation across a wide variety of PLM platforms.

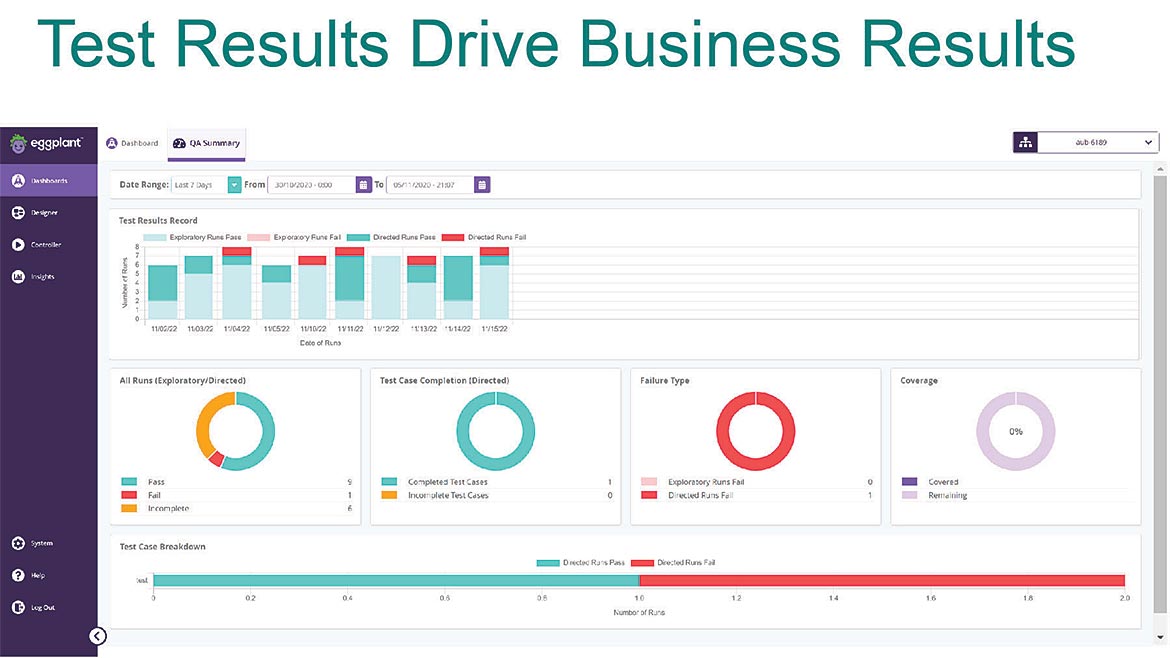

Image 6. Test Results Drive Business Results. Image source: Razorleaf

Organizations are becoming adept at using flexible, adaptable PLM to create efficiencies and drive innovation. By adopting a consistent, automated approach to testing PLM software, or any enterprise software being deployed or upgraded, they can dramatically improve the user experience, overcome adoption hurdles, catch bugs before users do, and finally realize their intended business goals and outcomes.