Smart 3D Robot Vision: The Science of Seamless System Integration

Learn how 3D smart sensors can be tightly integrated with robots to achieve a number of dynamic manufacturing processes.

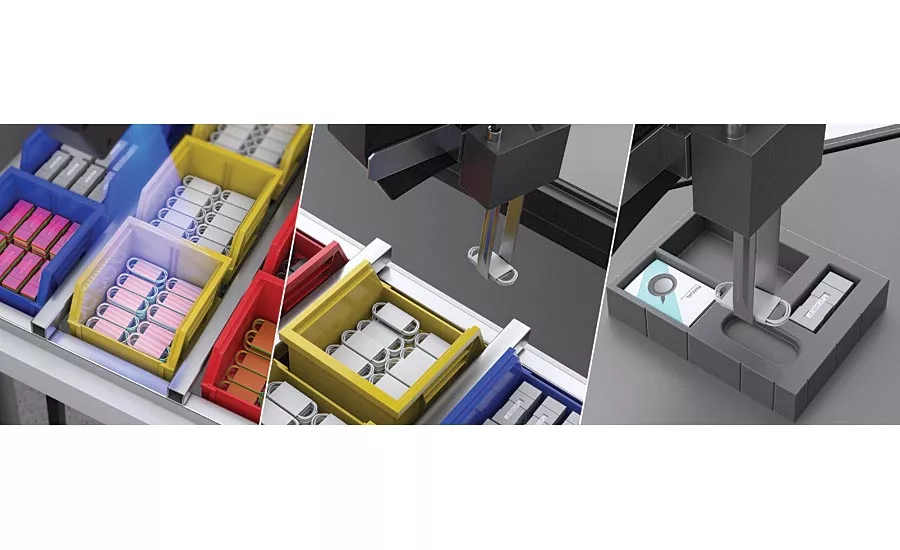

Smart 3D robot vision system for kitting (pick-and-place). Source: LMI Technologies

Integrating robots into a manufacturing line challenges process control engineers to rethink part flow and learn how robots and 3D sensors can work together to achieve faster, more efficient production.

Manufacturing companies must automate their production and distribution systems to stay ahead of the curve. Those that do not risk being outpaced by competitors who use these technologies to lower costs, raise production output, and build a more dynamic infrastructure that responds quickly to customer demand for lower-volume, specialized products.

Today, the key drivers of vision-guided robotic systems include:

- Need to reduce labor costs

- Need to reduce fixture and tooling cost

- Need to “see” objects in 3D and drive robots from blind movement to intelligent movement

This article looks at how 3D smart sensors can be tightly integrated with robots to achieve a number of dynamic manufacturing processes based on “smart” movement derived from 3D scan data.

Types of Robotic System Configuration

There are two common ways to configure a 3D sensor with a robot: 1) mounted on a frame, and 2) mounted on the robot. Let’s look at the differences.

1. Mounted On Frame (Eye-to-Hand)

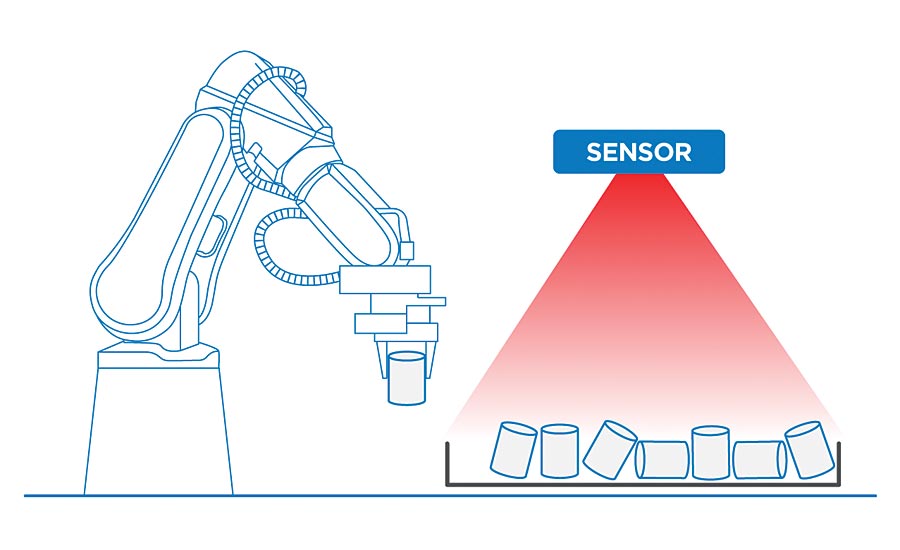

In frame-mounted systems, the sensor is in a fixed position separate from the robot. The sensor scans a working area to locate an object and communicates pose data to the robotic arm. Calibration is required to establish the relationship between the sensor coordinate system and the robot coordinate system (often referred to as “Eye-To-Hand” calibration) so objects identified in a 3D point cloud can be picked up by a robot.

A 3D smart sensor carries out the calibration, point cloud acquisition, part localization, and robot communication. The robot performs the path planning logic that moves the end-effector accurately and efficiently to pick up a part.

Frame-mounted sensor and robot arm used in bin picking application. Source: LMI Technologies

Robot-mounted sensor for scanning and inspection of large targets. Source: LMI Technologies

The frame-mounted approach is common in bin picking, where the scan data is a point cloud containing many randomly stacked instances of the same part in a bin. Intelligent localization software processes the point cloud to identify the next part to pick (usually the one sitting on top of the pile) so the robot can feed it into a manufacturing process.
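As a rough illustration of choosing the next part to pick, the sketch below selects the highest-lying candidate that was matched with acceptable confidence. It assumes localization has already produced a list of candidates in sensor coordinates with z increasing upward. The names `Candidate` and `pick_next` and the 0.8 score threshold are hypothetical, and real localization software typically also weighs occlusion and gripper clearance.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    part_id: int
    x: float      # mm, sensor coordinates
    y: float      # mm
    z: float      # mm, assumed to increase upward
    score: float  # match confidence in the range 0..1 (assumed)


def pick_next(candidates, min_score=0.8):
    """Return the highest-lying candidate with an acceptable score, or None."""
    usable = [c for c in candidates if c.score >= min_score]
    if not usable:
        return None
    return max(usable, key=lambda c: c.z)


parts = [
    Candidate(1, 10.0, 20.0, 55.0, 0.95),
    Candidate(2, 40.0, 25.0, 80.0, 0.60),  # highest, but poorly matched
    Candidate(3, 70.0, 15.0, 72.0, 0.91),
]
best = pick_next(parts)
print(best.part_id)  # 3
```

Filtering on match confidence before ranking by height keeps the robot from reaching for a part whose pose is uncertain, even if it appears to sit on top.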

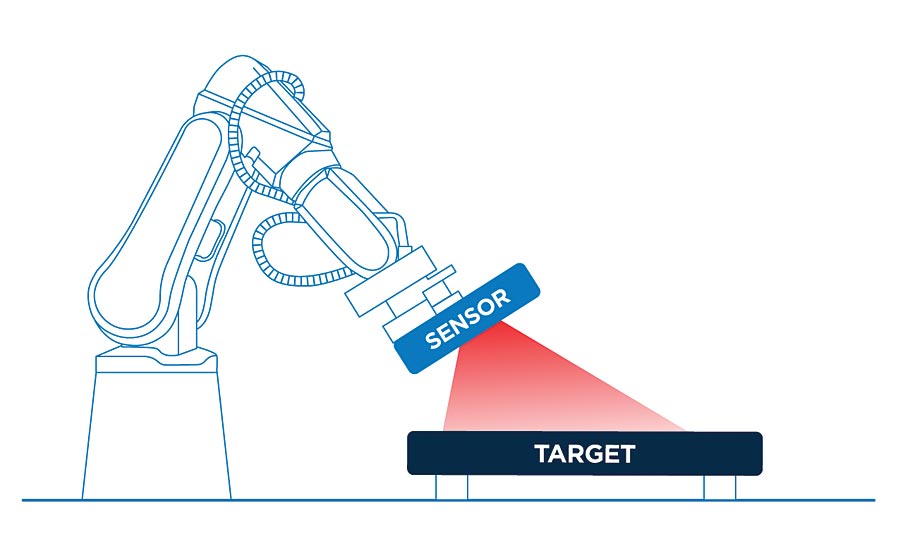

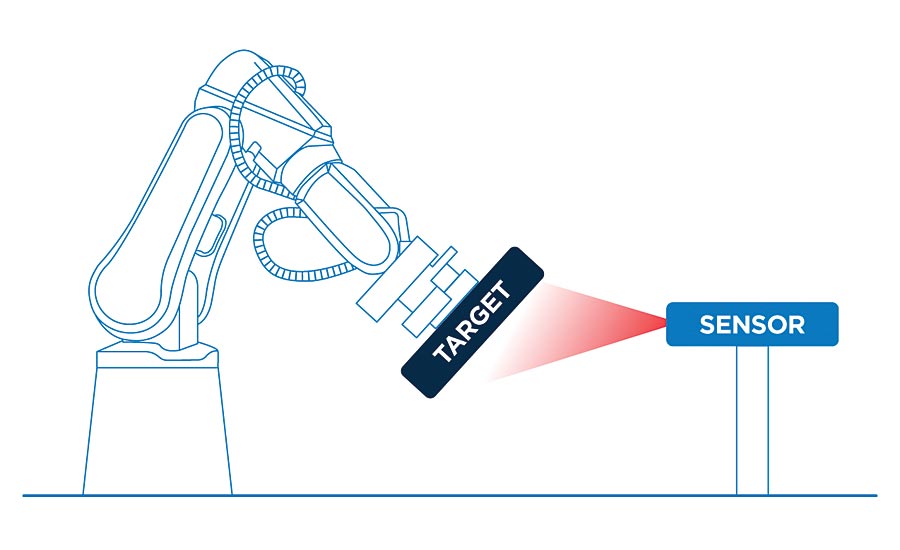

In addition to object detection for pick-and-place, the sensor can be used for measurement and quality inspection of the target where the robot presents the object for inspection to the sensor and a pass/fail decision is made.
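A pass/fail decision of this kind can be sketched as a tolerance check on each measured feature. The feature names, nominal values, and tolerances below are illustrative assumptions, not values from any particular sensor or part drawing.

```python
def inspect(measurements, tolerances):
    """Return (passed, failures); failures maps feature name -> measured value."""
    failures = {}
    for name, value in measurements.items():
        nominal, tol = tolerances[name]
        if abs(value - nominal) > tol:
            failures[name] = value
    return (not failures, failures)


# (nominal, +/- tolerance) per feature, in millimetres (illustrative)
tolerances = {
    "hole_diameter_mm": (8.00, 0.05),
    "stud_height_mm": (12.00, 0.10),
}
measured = {"hole_diameter_mm": 8.03, "stud_height_mm": 12.14}

passed, failures = inspect(measured, tolerances)
print(passed, failures)  # False {'stud_height_mm': 12.14}
```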

Fixed-mount sensor used for dimensional measurement and quality inspection. Source: LMI Technologies

2. Mounted on Robot Arm (Eye-in-Hand)

In this configuration the sensor is mounted on the robot end-effector and guides the robot to perform real-time positioning and critical tasks such as weld seam tracking or assembly.

The calibration required for systems that have a sensor mounted on the robot arm is often referred to as “Eye-In-Hand” calibration. Eye-in-Hand systems maintain accuracy while remaining highly flexible: because the sensor moves with the arm, they overcome the limitations of a fixed working environment and can inspect large targets that have many occluded areas.
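In an Eye-in-Hand setup, a point measured by the sensor is usually brought into the robot base frame by chaining two transforms: the flange pose reported by the robot at the moment of the scan, and the fixed sensor-to-flange transform found by calibration. The sketch below shows that chain with plain 4x4 homogeneous matrices. All numeric values and the helper names (`rot_z`, `matmul`, `transform`) are illustrative assumptions.

```python
import math


def matmul(a, b):
    """Multiply two 4x4 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]


def transform(t, p):
    """Apply the 4x4 homogeneous transform t to the 3D point p."""
    v = (p[0], p[1], p[2], 1.0)
    return tuple(sum(t[i][k] * v[k] for k in range(4)) for i in range(3))


def rot_z(deg, tx, ty, tz):
    """Build a transform: rotation about z by deg degrees plus a translation."""
    c, s = math.cos(math.radians(deg)), math.sin(math.radians(deg))
    return [[c, -s, 0.0, tx],
            [s, c, 0.0, ty],
            [0.0, 0.0, 1.0, tz],
            [0.0, 0.0, 0.0, 1.0]]


# Flange pose in the robot base frame, read from the controller at scan time.
base_T_flange = rot_z(90.0, 500.0, 0.0, 300.0)
# Sensor pose in the flange frame, the result of Eye-in-Hand calibration.
flange_T_sensor = rot_z(0.0, 0.0, 0.0, 100.0)

base_T_sensor = matmul(base_T_flange, flange_T_sensor)
p_sensor = (10.0, 0.0, 50.0)  # point measured in sensor coordinates (mm)
p_base = transform(base_T_sensor, p_sensor)
print([round(v, 3) for v in p_base])  # [500.0, 10.0, 450.0]
```

Because `base_T_flange` changes with every robot move, it has to be sampled at the same instant as the scan; only `flange_T_sensor` stays constant between calibrations.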

The Key Elements of Sensor-Robot Integration: “Non-Smart” vs. Smart Approaches

1. Communication. The first major advantage 3D smart sensors have over standard, “non-smart” sensors is that they communicate directly with the robot. Standard sensors usually communicate with the robot via a host PC, which increases latency as well as system cost and complexity of integration.

Smart 3D robot vision system for semi-structured bin picking. Source: LMI Technologies

2. Calibration. The objective of sensor-robot calibration is to establish a relationship between the coordinate system of the sensor and the coordinate system of the robot. The result is a transformation that converts the pose of a part located in a 3D point cloud (sensor coordinates) into a pose the robot can move to with its motion/encoder sub-system (robot coordinates).

A calibration routine typically requires a short training setup in which a known artifact (such as a ball bar) is presented to the sensor in several orientations. The resulting scans are analyzed to extract pose data and build a 6 DOF transformation that converts the ball bar position from a 3D scan into the corresponding robot position.
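One common way to build such a transformation from matched points is the SVD-based Kabsch method, sketched below with NumPy. This is a generic technique, not necessarily what any particular smart sensor runs internally. It assumes the ball centers have already been extracted in sensor coordinates and that the corresponding robot-frame positions are known; the point values, the ground-truth pose, and the function name `fit_rigid_transform` are illustrative.

```python
import numpy as np


def fit_rigid_transform(sensor_pts, robot_pts):
    """Least-squares R (3x3) and t (3,) such that robot ~= R @ sensor + t."""
    P = np.asarray(sensor_pts, dtype=float)
    Q = np.asarray(robot_pts, dtype=float)
    cp, cq = P.mean(axis=0), Q.mean(axis=0)
    H = (P - cp).T @ (Q - cq)           # 3x3 cross-covariance matrix
    U, _, Vt = np.linalg.svd(H)
    # Guard against a reflection so that R is a proper rotation.
    d = 1.0 if np.linalg.det(Vt.T @ U.T) >= 0 else -1.0
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = cq - R @ cp
    return R, t


# Synthetic ground truth: 30 degrees about z plus an offset (illustrative).
theta = np.radians(30.0)
R_true = np.array([[np.cos(theta), -np.sin(theta), 0.0],
                   [np.sin(theta), np.cos(theta), 0.0],
                   [0.0, 0.0, 1.0]])
t_true = np.array([400.0, -120.0, 250.0])

# Ball centers seen by the sensor over several artifact orientations (mm).
sensor_pts = np.array([[0.0, 0.0, 0.0], [100.0, 0.0, 0.0], [0.0, 100.0, 0.0],
                       [0.0, 0.0, 100.0], [50.0, 50.0, 20.0]])
robot_pts = sensor_pts @ R_true.T + t_true

R, t = fit_rigid_transform(sensor_pts, robot_pts)
print(np.allclose(R, R_true), np.allclose(t, t_true))  # True True

# Convert a part position found in a later scan into robot coordinates.
part_in_robot = R @ np.array([25.0, 40.0, 10.0]) + t
```

With real scans the correspondences are noisy, so the fit is a least-squares estimate; presenting the artifact in more, well-spread orientations generally improves it.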

3. Measurement Algorithm Development. 3D smart sensors offer built-in measurement tools and control decision-making, which give robotic systems the ability to measure and inspect target objects. “Non-smart” sensors cannot deliver the same inspection capabilities without costly third-party software and an external PC.

Because you don’t have to write robot programs or calibration routines, or add third-party software or PCs, using 3D smart sensors leads to fast deployment of solutions. Any engineer can do it.

If you’re an advanced user who wants greater flexibility and control, you have the option of using software development kits to develop and embed your own custom tools onto the sensor—allowing you to introduce your own part localization or measurement algorithms.

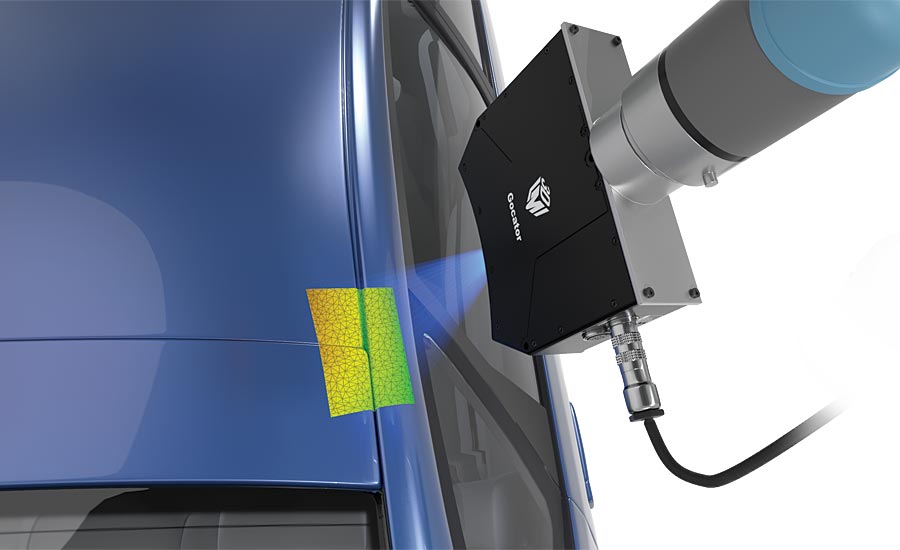

Smart 3D robot vision for automotive gap & flush inspection. Source: LMI Technologies

Benefits of Vision Guided Robotics (VGR) to Your Business

Normally, a robot moves blindly and repeatedly to a known position within its operating envelope. With smart 3D robot vision guidance, the robot instead moves dynamically based on what the sensor sees, which delivers greater value from the robot in a manufacturing process.

VGR is used across many industries, including packaging & logistics, automotive, pharmaceutical, medical, electronics, and food and beverage. Switching between products and batch runs is software controlled and very fast, with no mechanical adjustments.

Manufacturing Benefits of VGR:

- Extends a non-smart robot into smarter applications

- High residual system value, even if production is changed

- High machine efficiency, reliability, and flexibility

- Further reduces manual labor/work costs

Example Industry Applications

The most common VGR application is pick-and-place, where a sensor is mounted over a work area in which the robot carries out pick and place movement (e.g., transferring parts from a conveyor to a box).

Another common VGR application is part inspection, where the manipulator on a robot moves a sensor to various features on a workpiece for inspection (e.g., gap & flush inspection on a car body, or hole and stud dimension tolerancing).

Finally, the most sophisticated application of VGR is where the manipulator on a robot picks up a “jig” that contains a number of sensors, and is programmed to pick up a workpiece and guide it for insertion into a larger assembly using sensor feedback (e.g., door panel or windshield insertion).

Conclusion

Automation of repetitive tasks (such as pick-and-place) delivers many benefits to your business, such as reducing labor cost and increasing productivity. And now, with the easy robot integration provided by 3D smart sensors, you can get a complete vision-guided robotic solution up and running with minimal cost and development time, extending a robot’s functionality into a new set of sophisticated applications.
